3 The Binomial (and Related) Distributions

In this chapter, we illustrate probability and statistical inference concepts utilizing the binomial distribution, which governs the data that arise from binomial experiments: experiments with two discrete outcomes (like coin flips). Just like the normal distribution is the most commonly utilized continuous distribution in data analyses, the binomial is the most commonly utilized discrete distribution.

Let’s assume we are holding a coin. It may be a fair coin (meaning that the probabilities of observing heads or tails in a given flip of the coin are each 0.5)…or perhaps it is not. We decide that we are going to flip the coin some fixed number of times \(k\), and we will record the outcome of each flip: \[ \mathbf{Y} = \{Y_1,Y_2,\ldots,Y_k\} \,. \] Here, e.g., \(Y_1 = 1\) if we observe heads or \(0\) if we observe tails. This is an example of a Bernoulli process, where “process” denotes a sequence of observations, and “Bernoulli” indicates that there are two possible discrete outcomes for each observation.

A binomial experiment is one that generates Bernoulli process data through the running of \(k\) trials (e.g., \(k\) separate coin flips). The properties of such an experiment are that:

- The number of trials \(k\) is fixed in advance.

- Each trial has two possible outcomes, typically denoted as \(S\) (success) or \(F\) (failure).

- A trial may have no more than one realized outcome.

- The outcome of any one trial is independent of the outcomes of the others.

- The probability of success \(p\) remains constant throughout the experiment.

The random variable of interest for a binomial experiment, \(X\), is the total number of observed successes.

Now, about the probability of success remaining \(p\) throughout the experiment: binomial experiments rely on sampling with replacement…if we observe a head for a given coin flip, we can observe heads again in the future. In the real world, however, the reader will observe experiments in which data are sampled from finite, tangible populations without replacement. In such a situation, one would be carrying out a hypergeometric experiment, with data that are governed by the hypergeometric distribution. (See the section on related distributions below.)

Regarding the fourth point above, about the outcome of each trial being independent of the outcomes of the others: in a general process, each datum can be dependent on the data observed previously. How each datum is dependent on previous data defines the type of process that is observed: a Markov process, a Gaussian process, etc. A Bernoulli process is termed a memoryless process because it is comprised of independent data: e.g., what we observe on the next coin flip will not depend on what we have just observed on the current coin flip.

3.1 Binomial Distribution: Probability Mass Function

What to take away from this section:

The binomial distribution is a discrete distribution with a probability mass function whose shape and location along the real-number line is governed by two parameters: \(k\), the number of binomial trials, and \(p\), the expected proportion of “successes” observed over the \(k\) trials.

The shape of the binomial probability mass function tends to that of the normal when \(k\) is larger and \(p\) is sufficiently far from being 0 or 1, which has historically led analysts to utilize a normal approximation when making statistical inferences…but with computers, we can now easily make exact ones.

Let’s focus first on the outcome of a binomial experiment, with the random variable \(X\) being the number of observed successes in \(k\) trials. What is the probability of observing \(X=x\) successes, if the probability of observing a success in any one trial is \(p\)? We might start by noting that we have \[\begin{align*} \mbox{$x$ successes}&: p^x \\ \mbox{$k-x$ failures}&: (1-p)^{k-x} \,, \end{align*}\] and thus (because of independence) we would simply multiply the two probabilities together: \(P(X=x) = p^x (1-p)^{k-x}\). But, no, this isn’t quite right. Let’s start again and think this through. Assume \(k = 2\). The sample space of possible experimental outcomes is \[ \Omega = \{ SS, SF, FS, FF \} \,. \] If \(p\) = 0.5, then we can see that the probability of observing one success in two trials is 0.5…but our proposed probability mass function tells us that \(P(X=1) = (0.5)^1 (1-0.5)^1 = 0.25\). What are we missing? We are missing that there are two ways of observing a single success…and we need to count both. Because we ultimately do not care about the order in which successes and failures are observed, we utilize counting via combination: \[ \binom{k}{x} = \frac{k!}{x! (k-x)!} \,, \] where the exclamation point represents the factorial function \(x! = x(x-1)(x-2)\cdots 1\). So now we can correctly write down the binomial probability mass function: \[ P(X=x) = p_X(x) = \binom{k}{x} p^x (1-p)^{k-x} ~~~ x \in \{0,\ldots,k\} \,. \] We denote the distribution of the random variable \(X\) as \(X \sim\) Binomial(\(k\),\(p\)). Note that when \(k = 1\), we have a Bernoulli distribution.

Recall: a probability mass function is one way to represent a discrete probability distribution, and it has the properties (a) \(0 \leq p_X(x) \leq 1\) and (b) \(\sum_x p_X(x) = 1\), where the sum is over all values of \(x\) in the distribution’s domain.

(The reader should note that the number of trials is commonly denoted in textbooks as \(n\), not \(k\). However, since \(n\) is typically used to denote the sample size in an experiment, we will use \(k\) to denote the number of trials in this text to avoid any confusion.)

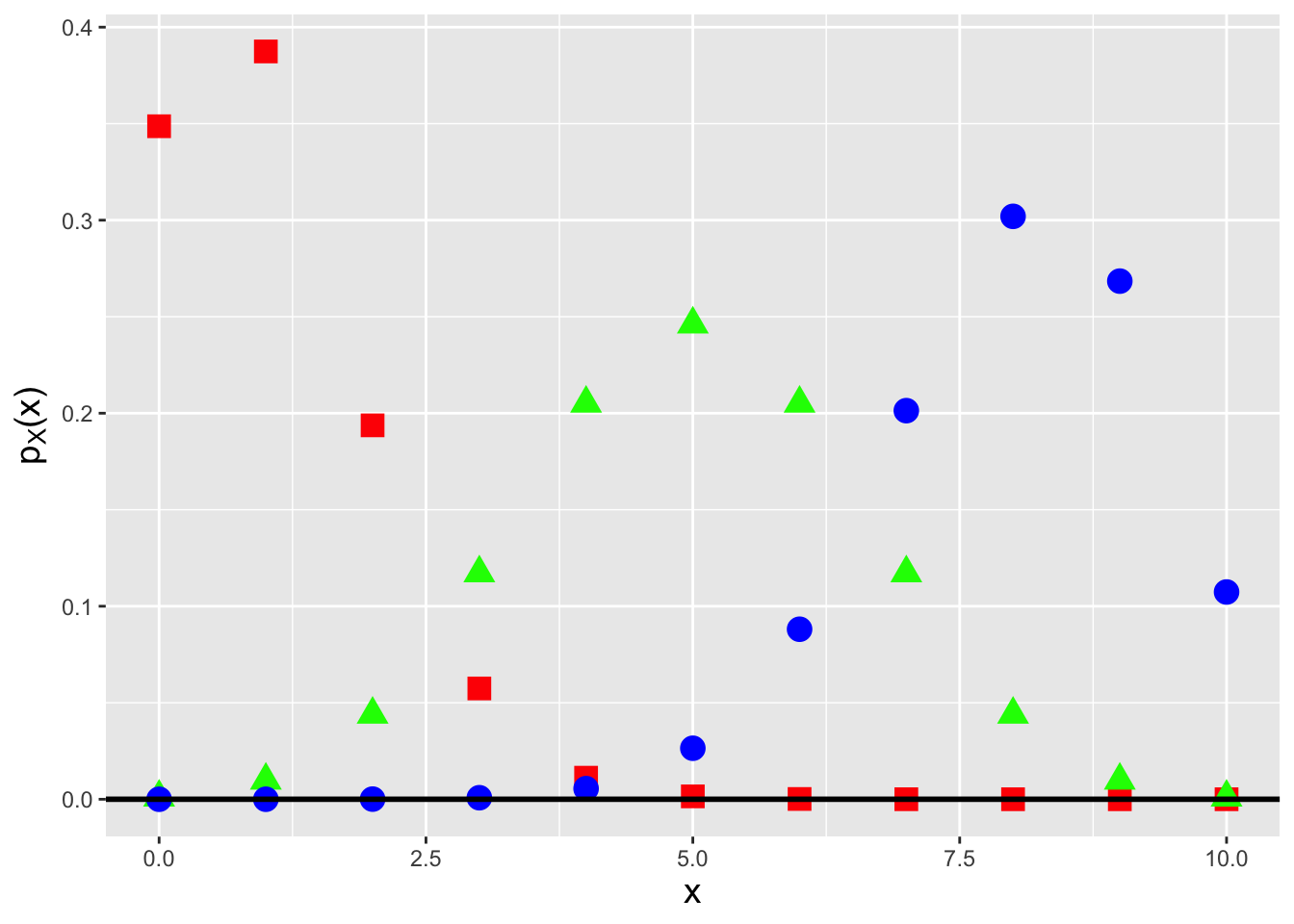

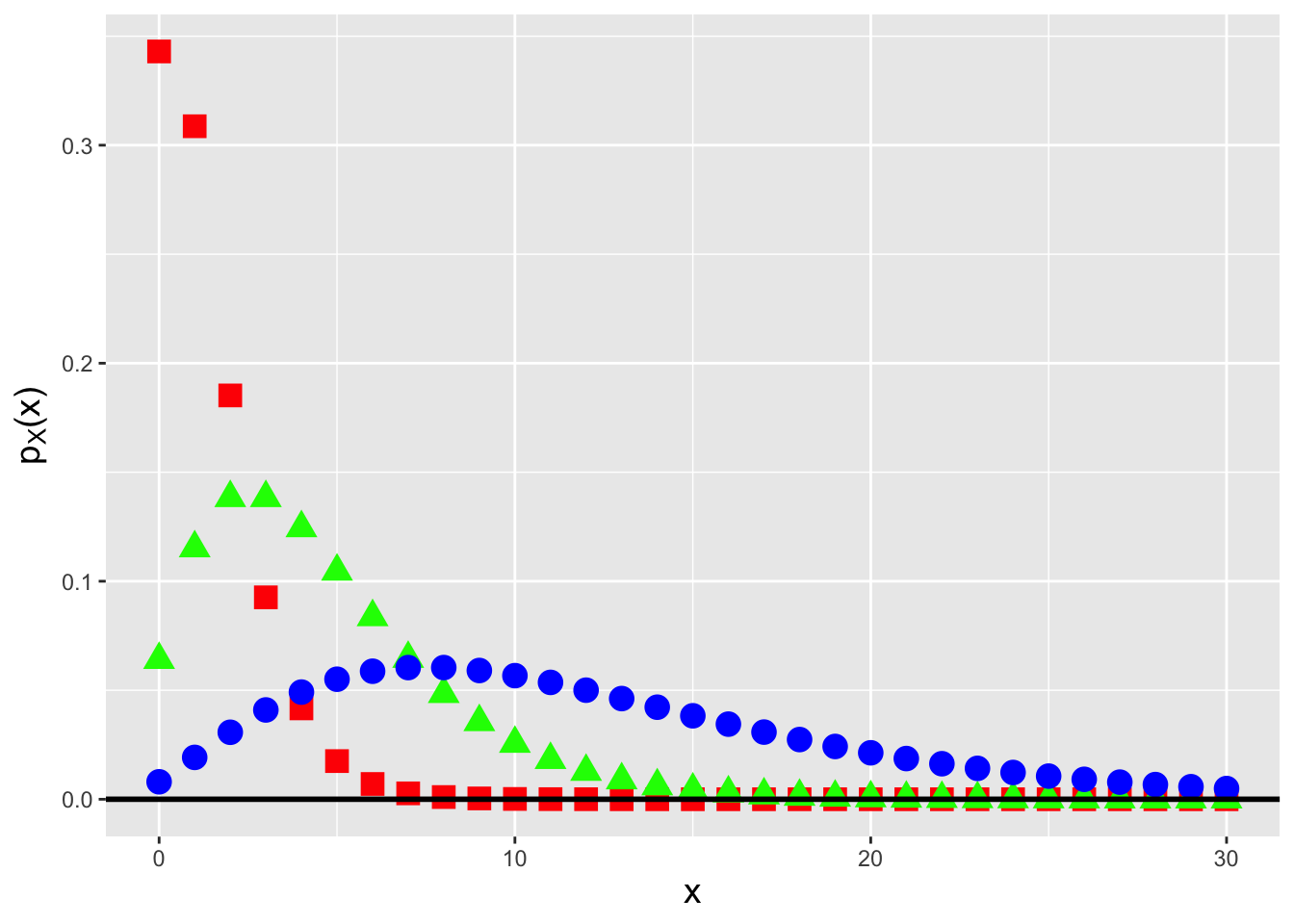

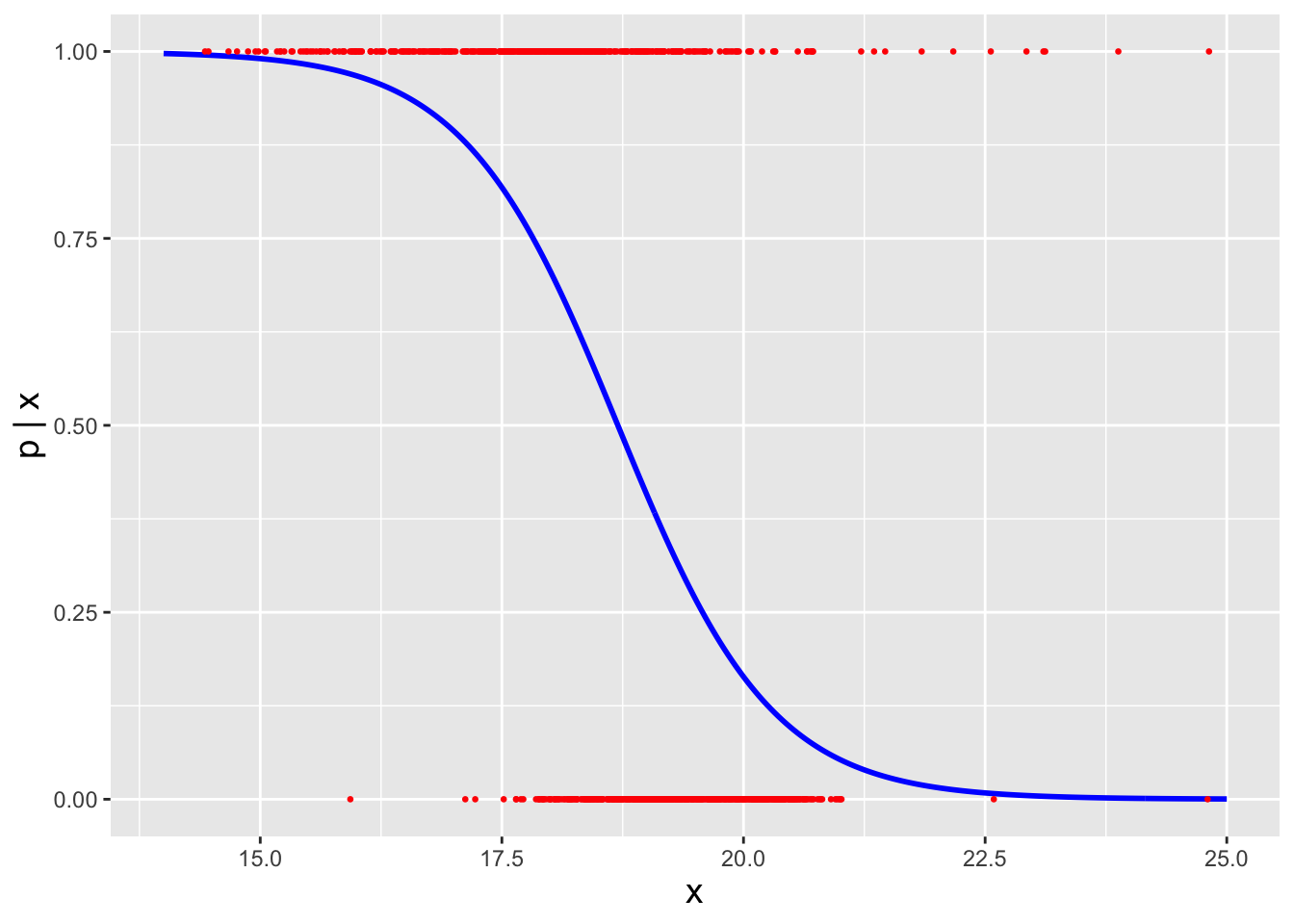

In Figure 3.1, we display three binomial pmfs, one each for probabilities of success 0.1 (red squares, to the left), 0.5 (green triangles, in the center), and 0.8 (blue circles, to the right). This figure indicates an important aspect of the binomial pmf, namely that it can attain a shape like that of a normal distribution. In fact, a binomial random variable converges in distribution to a normal random variable in certain limiting situations, as we show in an example below.

Figure 3.1: Binomial probability mass functions for number of trials \(k = 10\) and success probabilities \(p = 0.1\) (red squares), 0.5 (green triangles), and 0.8 (blue circles).

Recall: the expected value of a discretely distributed random variable is \[ E[X] = \sum_x x p_X(x) \,, \] where the sum is over all values of \(x\) within the domain of the pmf \(p_X(x)\). The expected value is equivalent to a weighted average, with the weight for each possible value of \(x\) being \(p_X(x)\).

For the binomial distribution, the expected value is \[ E[X] = \sum_{x=0}^k x \binom{k}{x} p^x (1-p)^{k-x} \,. \] At first, this does not appear to be easy to evaluate. One trick in our mathematical arsenal is to pull constants out of the summation such that whatever is left as the summand is a pmf (and thus sums to 1). Let’s try this here: \[\begin{align*} E[X] &= \sum_{x=0}^k x \binom{k}{x} p^x (1-p)^{k-x} = \sum_{x=1}^k x \frac{k!}{x!(k-x)!} p^x (1-p)^{k-x} \\ &= \sum_{x=1}^k \frac{k!}{(x-1)!(k-x)!} p^x (1-p)^{k-x} = kp \sum_{x=1}^k \frac{(k-1)!}{(x-1)!(k-x)!} p^{x-1} (1-p)^{k-x} \,. \end{align*}\] The summation appears almost like that of a binomial random variable. Let’s set \(y = x-1\). Then \[\begin{align*} E[X] &= kp \sum_{x=1}^k \frac{(k-1)!}{(x-1)!(k-x)!} p^{x-1} (1-p)^{k-x} = kp \sum_{y=0}^{k-1} \frac{(k-1)!}{y!(k-(y+1))!} p^y (1-p)^{k-(y+1)} \\ &= kp \sum_{y=0}^{k-1} \frac{(k-1)!}{y!((k-1)-y)!} p^y (1-p)^{(k-1)-y} \,. \end{align*}\] The summand is now the pmf for the random variable \(Y \sim\) Binomial(\(k-1\),\(p\)), summed over all values of \(y\) in the domain of the distribution. Thus the summation evaluates to 1, and \(E[X] = kp\). In an example below, we use a similar strategy to determine that the variance of a binomial random variable, \(V[X]\), is equal to \(kp(1-p)\).

3.1.1 Binomial Random Variable: Variance

Recall: the variance of a discretely distributed random variable is \[ V[X] = \sum_x (x-\mu)^2 p_X(x) = E[X^2] - (E[X])^2\,, \] where the sum is over all values of \(x\) in the domain of the pmf \(p_X(x)\). The variance represents the square of the “width” of a probability mass function, where by “width” we mean the range of values of \(x\) for which \(p_X(x)\) is effectively non-zero.

The variance of a random variable is given by the shortcut formula that we have been using since Chapter 1: \(V[X] = E[X^2] - (E[X])^2\). So we would expect that we would need to compute \(E[X^2]\) here, since we already know that \(E[X] = kp\). But for reasons that will become apparent below, it is actually far easier for us to compute \(E[X(X-1)]\), and to work with that to eventually derive the variance: \[\begin{align*} E[X(X-1)] &= \sum_{x=0}^k x(x-1) \binom{k}{x} p^x (1-p)^{k-x} = \sum_{x=0}^k x(x-1) \frac{k!}{x!(k-x)!} p^x (1-p)^{k-x} \\ &= \sum_{x=2}^k \frac{k!}{(x-2)!(k-x)!} p^x (1-p)^{k-x} = k(k-1) p^2 \sum_{x=2}^k \frac{(k-2)!}{(x-2)!(k-x)!} p^{x-2} (1-p)^{k-x} \,. \end{align*}\] The advantage to using \(x(x-1)\) was that it matches the first two terms of \(x! = x(x-1)\cdots(1)\), allowing easy cancellation. If we set \(y = x-2\), we find that the summand above will, in a similar manner as in the calculation of \(E[X]\), become the pmf for the random variable \(Y \sim\) Binomial(\(k-2,p\))…and thus the summation will evaluate to 1. Hence \[\begin{align*} E[X(X-1)] &= E[X^2] - E[X] = k(k-1)p^2 \\ \Rightarrow ~~~ E[X^2] &= k^2p^2-kp^2 + kp = V[X] + (E[X])^2 \\ \Rightarrow ~~~ V[X] &= k^2p^2-kp^2+kp-k^2p^2 = kp-kp^2 = kp(1-p) \,. \end{align*}\]

3.1.2 Binomial Distribution: Normal Approximation

In certain limiting situations, a binomial random variable converges in distribution to a normal random variable. In other words, if \[ P\left(\frac{X-\mu}{\sigma} < a \right) = P\left(\frac{X-kp}{\sqrt{kp(1-p)}} < a \right) \approx P(Z < a) = \Phi(a) \,, \] then we can state that \(X \overset{d}{\rightarrow} Y \sim \mathcal{N}(kp,kp(1-p))\), or that \(X\) converges in distribution to a normal random variable \(Y\). Now, what do we mean by “certain limiting situations”? For instance, if \(p\) is close to zero or one, then the binomial distribution is truncated at 0 or at \(k\), and the shape of the pmf does not appear to be like that of a normal pdf. One convention is that the normal approximation is adequate if \[ k > 9\left(\frac{\mbox{max}(p,1-p)}{\mbox{min}(p,1-p)}\right) \,. \] The reader might question why we would mention this approximation at all: if we have binomially distributed data and a computer, then we need not ever utilize such an approximation to, e.g., compute probabilities. This point is correct (and is the reason why, for instance, we do not mention the so-called continuity correction here; our goal is not to compute probabilities). The reason we mention this is that this approximation underlies a commonly used hypothesis test framework, the Wald interval, that we will mention later in the chapter.

3.1.3 Binomial Distribution: Exponential Family

Recall: the exponential family of distributions is the set of distributions whose probability mass or density functions can be expressed as \[ h(x) \exp\left[\left(\sum_{i=1}^p \eta_i(\boldsymbol{\theta}) T_i(x)\right) - A(\boldsymbol{\theta})\right] \,, \] where \(\boldsymbol{\theta}\) is a vector of parameters (e.g., \(\boldsymbol{\theta} = \{\mu,\sigma^2\}\) for a normal distribution). Note that none of the parameters can be a domain-specifying parameter.

Is the binomial distribution a member of the exponential family of distributions? It would initially appear that the answer is no (as there are no exponential functions in its pmf), but if we note that \[\begin{align*} p^x = \exp\left(\log p^x\right) = \exp\left(x \log p\right) \end{align*}\] and that \[\begin{align*} (1-p)^{k-x} = \exp\left[\log (1-p)^{k-x}\right] = \exp\left[(k-x) \log (1-p)\right] \,, \end{align*}\] we can see that \[\begin{align*} p_X(x) = \binom{k}{x} \exp\left( x [ \log(p) - \log(1-p) ] + k \log (1-p) \right) \end{align*}\] and thus that \[\begin{align*} h(x) &= \binom{k}{x} ~~~~~~ \eta(p) = \log(p) - \log(1-p) = \log \frac{p}{1-p} ~~~~~~ T(x) = x ~~~~~~ A(p) = -k \log(1-p) \,. \end{align*}\] The binomial distribution is indeed a member of the exponential family.

3.2 Binomial Distribution: Cumulative Distribution Function

What to take away from this section:

The cumulative distribution function, or cdf, for a binomial distribution is a step function that can be difficult to work with analytically (which historically motivated the application of the normal approximation, but which now leads us to use coding for exact inference).

Foreshadowing: the discrete nature of the binomial distribution leads to our having to take great care in how we pursue inference…as we will see, the question “do we include a specific probability mass in a calculation, or not” takes on great import when working with binomial-related sampling distributions.

Recall: the cumulative distribution function, or cdf, is another means by which to encapsulate information about a probability distribution. For a discrete distribution, it is defined as \(F_X(x) = \sum_{y\leq x} p_Y(y)\), and it is defined for all values \(x \in (-\infty,\infty)\), with \(F_X(-\infty) = 0\) and \(F_X(\infty) = 1\).

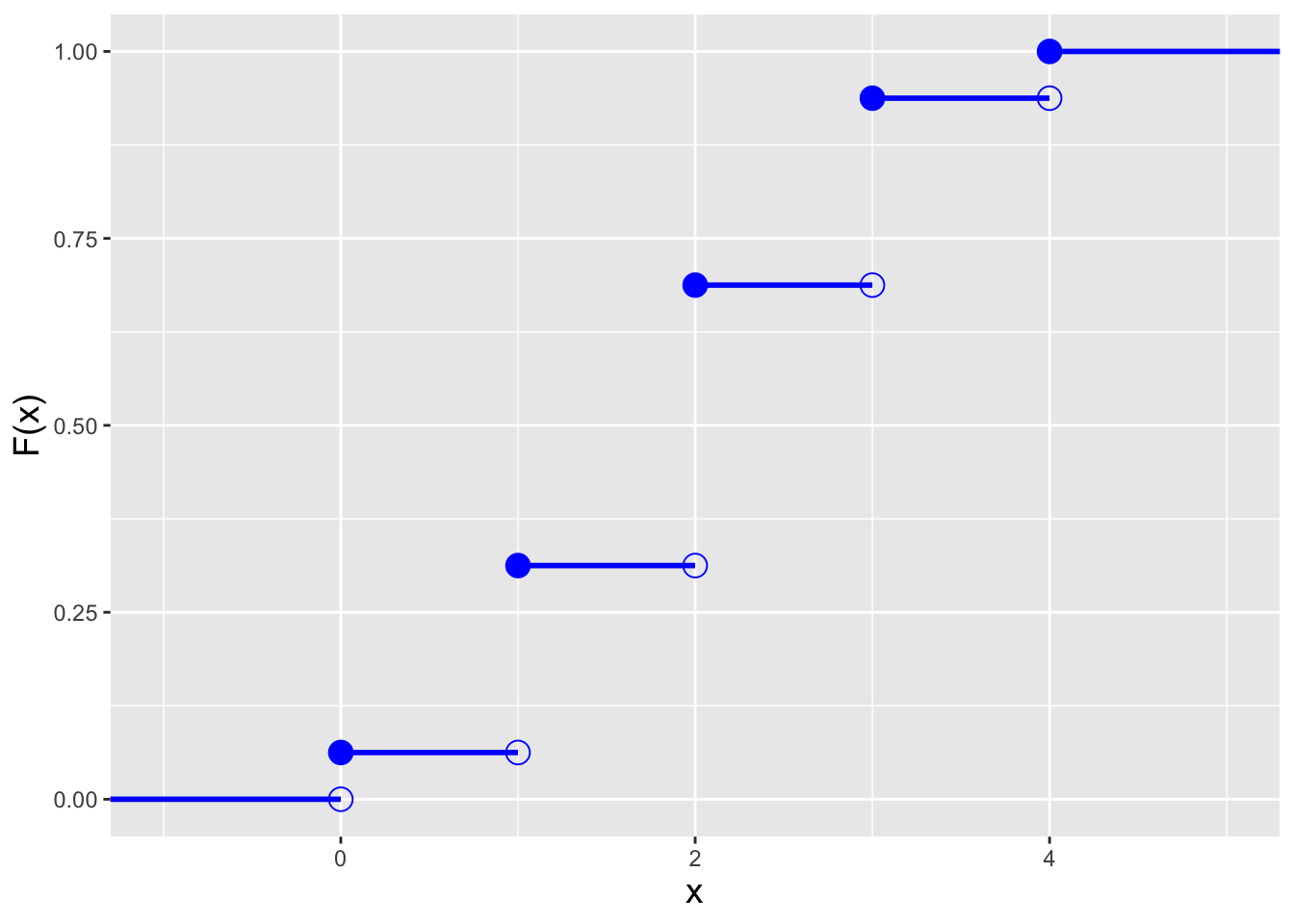

For the binomial distribution, the cdf is \[ F_X(x) = \sum_{y=0}^{\lfloor x \rfloor} p_Y(y) = \sum_{y=0}^{\lfloor x \rfloor} \binom{k}{y} p^y (1-p)^{k-y} = I_q\left(k - {\lfloor x \rfloor}, 1 + {\lfloor x \rfloor}\right) \,, \] where \(\lfloor x \rfloor\) denotes the floor function, which returns the largest integer that is less than or equal to \(x\) (e.g., if \(x\) = 6.75, \(\lfloor x \rfloor\) = 6). (\(I_q(\cdot)\) is the regularized incomplete beta function, which is not analytically easy to work with.) Also, because a pmf is defined at discrete values of \(x\), its associated cdf is a step function, as illustrated in the left panel of Figure 3.2. As we can see in this figure, the cdf steps up at each value of \(x\) in the domain of \(p_X(x)\), and unlike the case for continuous distributions, the form of the inequalities in a probabilistic statement matter: \(P(X < x)\) and \(P(X \leq x)\) will not be the same, if \(x\) is an integer with value \(\{0,1,2,\ldots,k\}\).

Recall: an inverse cdf function \(x = F_X^{-1}(q)\) takes as input a distribution quantile \(q \in [0,1]\) and returns the value of \(x\). A discrete distribution has no unique inverse cdf; it is convention to utilize the generalized inverse cdf, \[ x = \mbox{inf}\{x : F_X(x) \geq q\} \,, \] where “inf” indicates that the function is to return the smallest value of \(x\) such that \(F_X(x) \geq q\).

In the right panel of Figure 3.2, we display the inverse cdf for the same distribution used to generate the figure in the left panel (\(k=4\) and \(p=0.5\)). Like the cdf, the inverse cdf for a discrete distribution is a step function. Below, in an example, we show how we adapt the inverse transform sampler algorithm of Chapter 1 to accommodate the step-function nature of an inverse cdf.

Figure 3.2: Illustration of the cumulative distribution function \(F_X(x)\) (left) and inverse cumulative distribution function \(F_X^{-1}(q)\) (right) for a binomial distribution with number of trials \(k = 4\) and probability of success \(p=0.5\).

3.2.1 Computing Probabilities

Because computing the binomial pmf for a range of values of \(x\) can be laborious, we typically utilize

Rfunctions when computing probabilities.

- If \(X \sim\) Binomial(10,0.6), which is \(P(4 \leq X < 6)\)?

We first note that due to the form of the inequality, we do not include \(X=6\) in the computation. Thus \(P(4 \leq X < 6) = p_X(4) + p_X(5)\), which equals \[ \binom{10}{4} (0.6)^4 (1-0.6)^6 + \binom{10}{5} (0.6)^5 (1-0.6)^5 \,. \] Even computing this is unnecessarily laborious; instead, we call on

R:

## [1] 0.312(This utilizes

R’s vectorization feature: we need not explicitly define afor-loop to evaluatedbinom()for \(x=4\) and then at \(x=5\).) We can also utilize cdf functions here: \(P(4 \leq X < 6) = P(X < 6) - P(X < 4) = P(X \leq 5) - P(X \leq 3) = F_X(5) - F_X(3)\), which inRis computed via

## [1] 0.312As we can see, a direct summation approach is the more straightforward one to use.

- If \(X \sim\) Binomial(10,0.6), what is the value of \(a\) such that \(P(X \leq a) = 0.9\)?

First, we set up the inverse cdf formula: \[ P(X \leq a) = F_X(a) = 0.9 ~~ \Rightarrow ~~ a = F_X^{-1}(0.9) \] Note that we didn’t do anything differently here than we would have done in a continuous distribution setting…and we can proceed directly to

Rbecause it utilizes the generalized inverse cdf algorithm.

## [1] 8We can see immediately how the cdf for a discrete distribution is not a one-to-one function, as when we plug \(x = 8\) into the cdf, we will not recover the initial value \(q = 0.9\) (but, rather, a larger value):

## [1] 0.9543.2.2 Probability Mass Functions: Data Sampling

While we would always utilize

Rshortcut functions likerbinom()when they exist, there may be instances when we need to code our own functions for sampling data from discrete distributions. The code below shows such a function for an arbitrary probability mass function; we can easily adapt this code to any situation in which a pmf and its domain are concisely specified.

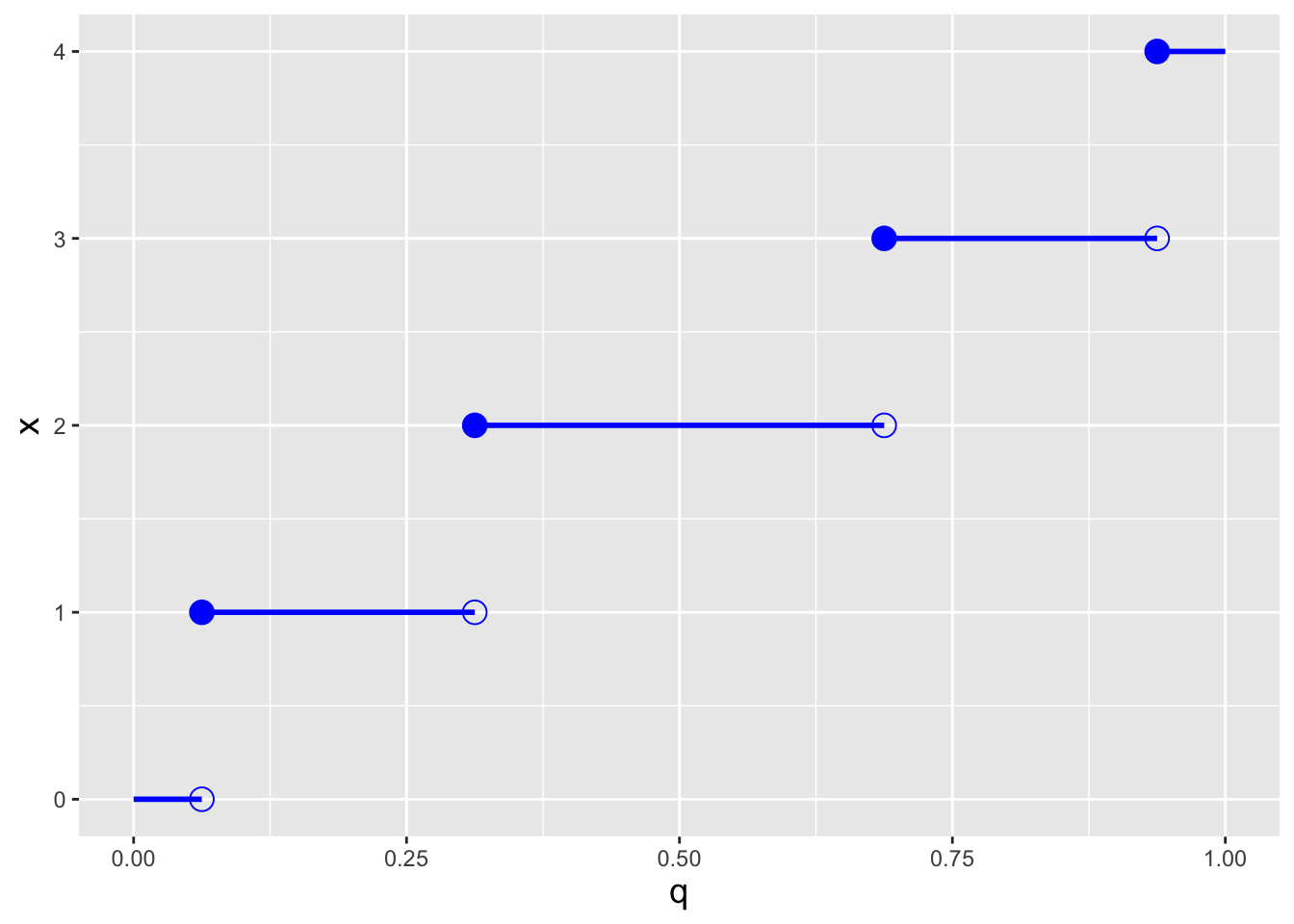

set.seed(101)

x <- c(1, 2, 4, 8) # domain of x

p.x <- c(0.2, 0.35, 0.15, 0.3) # p_X(x)

F.x <- cumsum(p.x) # cumulative sum -> produces F_X(x)

n <- 100

q <- runif(n) # we still ultimately need runif!

i <- findInterval(q, F.x)+1 # the output are bin numbers [0,3], and not [1,4]

# hence we add 1

# 1 means q is between 0 and F.x[1], etc.

x.sample <- x[i]

Figure 3.3: Histogram of \(n = 100\) iid data drawn using an inverse tranform sampler adapted to the discrete distribution setting. The red lines indicate the true density for each value of \(x\).

3.3 Binomial-Related Distributions

What to take away from this section:

There are several distributions related to the binomial distribution that are often seen in data analyses…

the negative binomial and geometric distributions, in which the random variable is the number of failures and the number of successes is fixed;

the hypergeometric distribution, which governs binomial-like trials in which data are sampled from finite tangible populations, without replacement;

the multinomial distribution, for which the number of outcomes is \(> 2\); and

the beta distribution, a continuous bounded distribution that one can use to model the binomial success proportion \(p\).

There are several probability distributions that are directly related to the binomial distribution and that are commonly utilized in statistical inference. Some of these are

- the negative binomial and geometric distributions;

- the hypergeometric distribution;

- the multinomial distribution; and

- the beta distribution.

Below, we introduce each in turn.

Negative Binomial/Geometric Distributions. A closely related alternative to a binomial experiment is a negative binomial experiment, in which the number of successes \(s\) is fixed in advance, as opposed to the number of trials \(k\). A simple example of such an experiment would be flipping a coin until we observe \(s\) heads. The random variable of interest is then the number of failures that we observe before achieving the last success (here, the number of observed tails).

The negative binomial distribution has probability mass function \[ p_X(x) = \binom{x+s-1}{x} p^s (1-p)^x ~~~ x \in \{0,1,\ldots,\infty\} \,. \] The form of this pmf follows from the fact that the underlying Bernoulli process would consist of \(x+s\) data, with the last datum being the observed success that ends the experiment. The first \(x+s-1\) data would feature \(s-1\) successes and \(x\) failures, with the order of success and failure not mattering…so we can view these data as being binomially distributed (albeit with \(x\) representing failures…hence the “negative” in negative binomial!): \[ p_X(x) = \underbrace{\binom{x+s-1}{x} p^{s-1} (1-p)^x}_{\mbox{first $x+s-1$ trials}} \cdot \underbrace{p}_{\mbox{last trial}} \,. \] Setting \(s = 1\) yields the geometric distribution, with probability mass function \[ p_X(x) = p (1-p)^x ~~~ x \in \{0,1,\ldots,\infty\} \,. \]

As stated above, \(X\) represents the number of failures in a

negative binomial experiment. Alternatively, \(X\) can represent the

overall number of trials in an experiment (i.e., the number of failures

plus the number of successes). Adopting either representation ultimately

yields the same statistical inferences for the success probability \(p\).

Here, we choose to follow how the negative binomial pmf is defined in R.

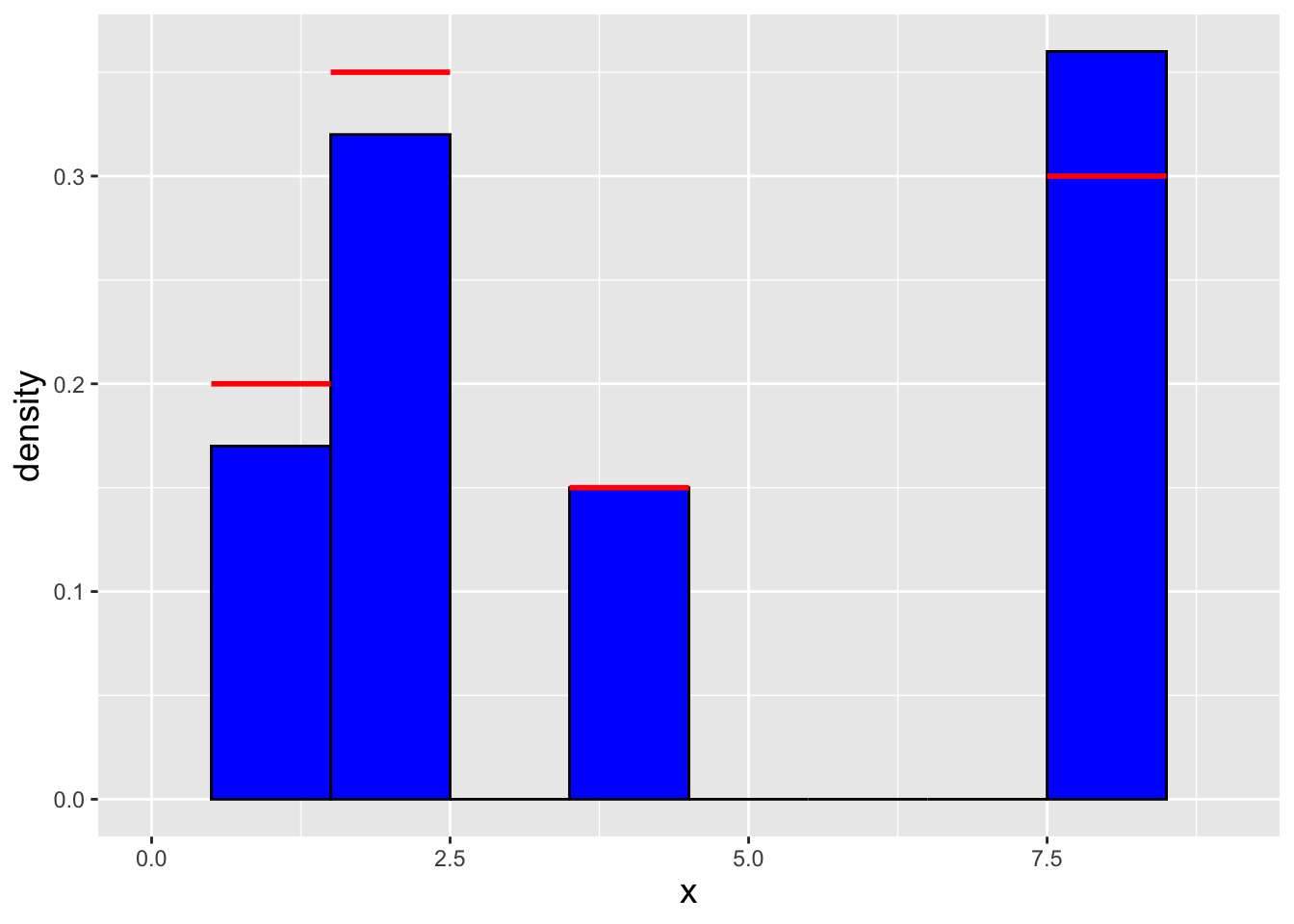

Figure 3.4: Negative binomial probability mass functions for the number of successes \(s = 2\) and success probabilities \(p = 0.7\) (red squares), 0.5 (green triangles), and 0.2 (blue circles).

Hypergeometric Distribution. Imagine that we are given \(K = 100\) widgets, of which ten are defective. This set of widgets comprises a finite, tangible population. If we were to sample \(k\) widgets with replacement, then the observed number of defective ones would be a binomially distributed random variable. However, a more typical situation would be one in which we grab a widget, check it to see if it is defective, and then set it aside…then grab and check another and then set that widget aside…etc. Perhaps we continue this process until all ten defective widgets are found. In this situation, we are sampling without replacement, and because of that, the probability of “success” (i.e., finding a defective widget) changes from an initial value of \(0.1\) to either \(0.091\) or \(0.101\), etc. Thus we are not carrying out a binomial experiment; rather, we are carrying out a hypergeometric experiment, where the data are governed by the hypergeometric distribution.

Assume that we draw \(k\) samples from a population of size \(K\), in which there are \(s\) “success” objects and \(K-s\) “failure” objects. The probability of observing \(X = x\) successes is \[ p_X(x) = \frac{\binom{s}{x} \binom{K-s}{k-x}}{\binom{K}{k}} \,, \] where \(x \in \{0, \ldots, {\rm min}(k,s)\}\). The expected value and variance are \[\begin{align*} E[X] &= \frac{ks}{K} \\ V[X] &= k \frac{s}{K} \frac{K-s}{K} \frac{K-k}{K-1} \,. \end{align*}\]

Historical convention holds that one can utilize the binomial distribution to model hypergeometric data if the number of trials \(k \lesssim K/10\). (This is the so-called “10% rule” of introductory statistics.) However, in the age of computers, there is no reason to do this…it is simple to apply the exact distribution instead.

Multinomial Distribution. The multinomial distribution is a generalization of the binomial distribution that we apply in situations in which each trial has more than two outcomes. The properties of a multinomial experiment are the following.

- It consists of \(k\) trials, with \(k\) chosen in advance.

- There are \(m\) possible outcomes for each trial.

- A trial may have no more than one realized outcome.

- The outcomes of each trial are independent.

- The probability of achieving the \(i^{th}\) outcome is \(p_i\), a quantity that remains constant throughout the experiment.

- The probabilities of each outcome sum to one: \(\sum_{i=1}^m p_i = 1\).

- The number of trials that achieve a particular outcome is \(X_i\), with \(\sum_{i=1}^m X_i = k\).

The probability of any single experimental outcome \(\{X_1,\ldots,X_m\}\) is given by the multinomial probability mass function: \[ p(x_1,\ldots,x_m \vert p_1,\ldots,p_m) = \frac{k!}{x_1! \cdots x_m!}p_1^{x_1}\cdots p_m^{x_m} \,. \] The distribution for any one value \(X_i\) is binomial, with \(E[X_i] = kp_i\) and \(V[X_i] = kp_i(1-p_i)\). This makes intuitive sense, as one either observes \(i\) as a trial outcome (success), or observes something else (failure). However, the \(X_i\)’s are not independent random variables; the covariance between \(X_i\) and \(X_j\), a metric of linear dependence, is not zero but rather \(-kp_ip_j\) if \(i \neq j\). (We will discuss the concept of covariance in Chapter 6.) This also makes intuitive sense: given that the number of trials \(k\) is fixed, observing more data having one outcome will usually mean observing fewer data achieving any other outcome.

Beta Distribution.

The beta distribution is a continuous distribution that is commonly used to

model random variables with finite, bounded domains.

Its probability density function is given by

\[

f_X(x) = \frac{x^{a-1}(1-x)^{b-1}}{B(a,b)} \,,

\]

where \(x \in [0,1]\), \(a\) and \(b\) are both \(> 0\), and the normalization constant \(B(a,b)\) is

\[

B(a,b) = \int_0^1 x^{a-1}(1-x)^{b-1} dx = \frac{\Gamma(a)\Gamma(b)}{\Gamma(a+b)} \,.

\]

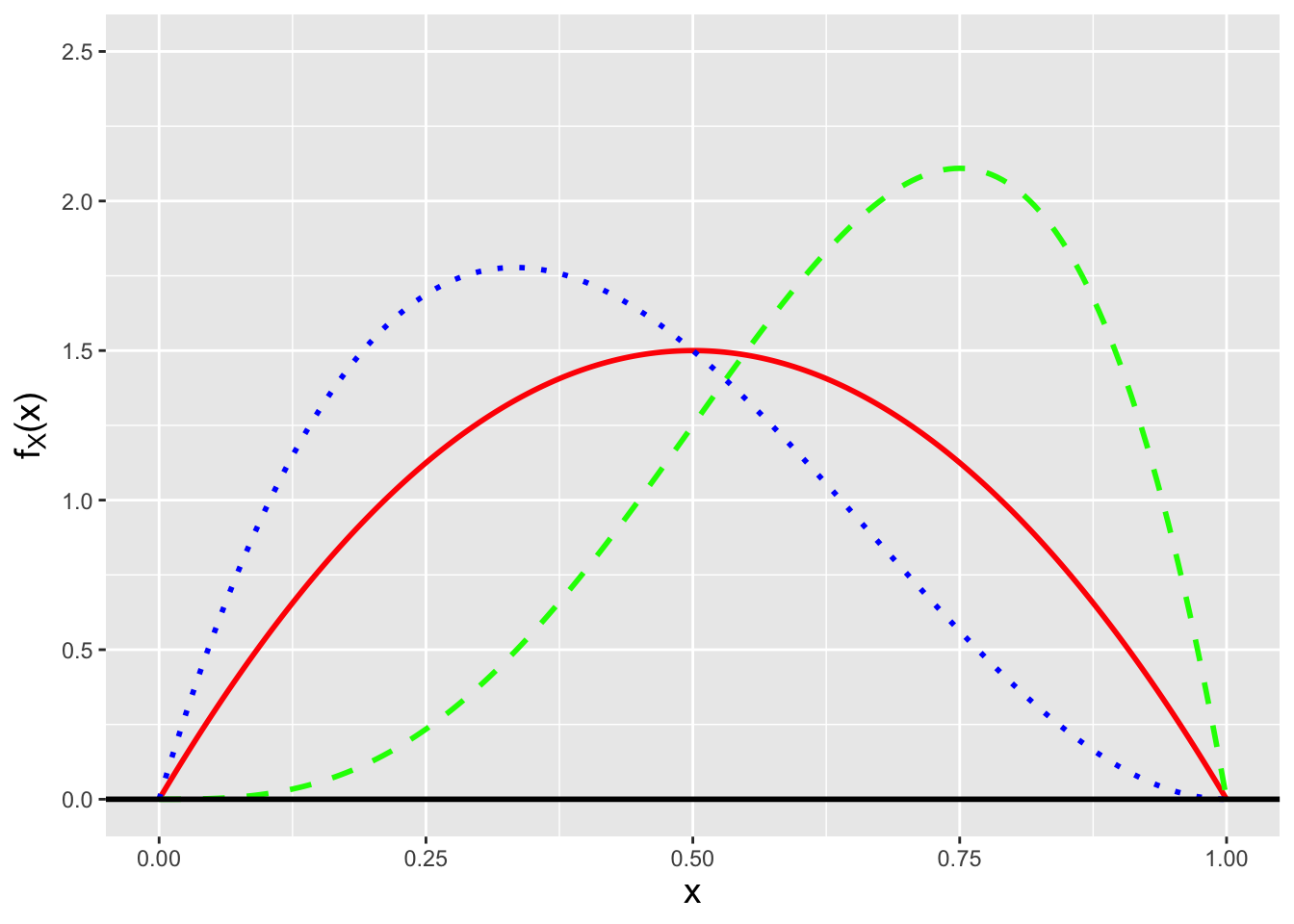

(See Figure 3.5.)

We have seen the gamma function, \(\Gamma(u)\), before; it is defined as

\[

\Gamma(u) = \int_0^\infty x^{u-1} e^{-x} dx \,.

\]

There are two things to note about the gamma function. The first is its

recursive property: \(\Gamma(u+1) = u \Gamma(u)\).

(This can be shown by applying integration by parts.) The second is that

when \(u\) is a positive integer, the gamma function takes on the value

\((u-1)! = (u-1)(u-2) \cdots 1\). (Note that \(\Gamma(1) = 0! = 1\).)

Regarding the statement above about “model[ing] random variables with finite, bounded domains”: if a set of \(n\) iid random variables \(\mathbf{X}\) has domain \([c,d]\), we can define a new set of random variables \(\mathbf{Y}\) via the transformation \[ \mathbf{Y} = \frac{\mathbf{X}-c}{d-c} \] such that the domain becomes \([0,1]\). We can then model the transformed data with the beta distribution. (Note the word can: we can model these data with the beta distribution, but we don’t have to, and it may be the case that there is another distribution bounded on the interval \([0,1]\) that ultimately better describes the data-generating process. The beta distribution just happens to be the one analysts most commonly use.)

But…in the end, what does this all have to do with the binomial distribution, the subject of this chapter? We know the binomial has a parameter \(p \in [0,1]\) and the domain of the beta distribution is \([0,1]\), but is there more? Let’s write down the binomial pmf: \[ \binom{k}{x} p^x (1-p)^{k-x} = \frac{k!}{x!(k-x)!} p^x (1-p)^{k-x} \,. \] This pmf dictates the probability of observing a particular value of \(x\) given \(k\) and \(p\). But what if we turn this around a bit…and examine this function if we fix \(k\) and \(x\) and vary \(p\) instead? In other words, let’s examine the likelihood function \[ \mathcal{L}(p \vert k,x) = \frac{k!}{x!(k-x)!} p^x (1-p)^{k-x} \,. \] We can see immediately that the likelihood has the form of a beta distribution if we set \(a = x+1\) and \(b = k-x+1\): \[ \mathcal{L}(p \vert k,x) = \frac{\Gamma(a+b-1)}{\Gamma(a)\Gamma(b)} p^{a-1} (1-p)^{b-1} \,, \] except that the normalization term is not quite right: here we have \(\Gamma(a+b-1)\) instead of \(\Gamma(a+b)\). But that’s fine: there is no requirement that a likelihood function integrate to one over its domain. (Here, as the interested reader can verify, the likelihood function integrates to \(1/(k+1)\).) So, in the end, if we observe a random variable \(X \sim\) Binomial(\(k,p\)), then the likelihood function \(\mathcal{L}(p \vert k,x)\) has the shape (if not the normalization) of a Beta(\(x+1,k-x+1\)) distribution.

Figure 3.5: Three examples of beta probability density functions: Beta(2,2) (solid red line), Beta(4,2) (dashed green line), and Beta(2,3) (dotted blue line).

3.3.1 Negative Binomial Random Variable: Expected Value

The calculation for the expected value \(E[X]\) for a negative binomial random variable is similar to that for a binomial random variable: \[\begin{align*} E[X] &= \sum_{x=0}^{\infty} x \binom{x+s-1}{x} p^s (1-p)^x = \sum_{x=0}^{\infty} x \frac{(x+s-1)!}{(s-1)!x!} p^s (1-p)^x = \sum_{x=1}^{\infty} \frac{(x+s-1)!}{(s-1)!(x-1)!} p^s (1-p)^x \,. \end{align*}\] Let \(y = x-1\). Then \[\begin{align*} E[X] &= \sum_{y=0}^{\infty} \frac{(y+s)!}{(s-1)!y!} p^s (1-p)^{y+1} = \sum_{y=0}^{\infty} s(1-p) \frac{(y+s)!}{s!y!} p^s (1-p)^y \\ &= \sum_{y=0}^{\infty} \frac{s(1-p)}{p} \frac{(y+s)!}{s!y!} p^{s+1} (1-p)^y = \frac{s(1-p)}{p} \sum_{y=0}^{\infty} \frac{(y+s)!}{s!y!} p^{s+1} (1-p)^y = \frac{s(1-p)}{p} \,. \end{align*}\] The summand is that of a negative binomial distribution for \(s+1\) successes, hence the summation is 1, and thus \(E[X] = s(1-p)/p\).

We leave it as an exercise to the reader to carry out a similar calculation, that involves evaluating \(E[X(X-1)]\), to derive the variance of a negative binomial random variable: \(V[X] = s(1-p)/p^2\).

3.3.2 Beta Random Variable: Expected Value

The expected value of a random variable sampled from a Beta(\(a,b\)) distribution is \[\begin{align*} E[X] = \int_0^1 x f_X(x) dx &= \int_0^1 x \frac{x^{a-1} (1-x)^{b-1}}{B(a,b)} dx = \int_0^1 \frac{x^{a} (1-x)^{b-1}}{B(a,b)} dx \\ &= \int_0^1 \frac{x^{a} (1-x)^{b-1}}{B(a+1,b)} \frac{B(a+1,b)}{B(a,b)} dx = \frac{B(a+1,b)}{B(a,b)} \int_0^1 \frac{x^{a} (1-x)^{b-1}}{B(a+1,b)} dx = \frac{B(a+1,b)}{B(a,b)} \,. \end{align*}\] The last result follows from the fact that the integrand is the pdf for a Beta(\(a+1,b\)) distribution, and the integral is over the entire domain, hence the integral evaluates to 1.

Continuing, \[\begin{align*} E[X] &= \frac{B(a+1,b)}{B(a,b)} = \frac{\Gamma(a+1) \Gamma(b)}{\Gamma(a+b+1)} \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)} = \frac{a \Gamma(a)}{(a+b)\Gamma(a+b)} \frac{\Gamma(a+b)}{\Gamma(a)} = \frac{a}{a+b} \,. \end{align*}\] Here, we take advantage of the recursive property of the gamma function.

The reader can utilize a similar strategy to determine the variance of a beta random variable, starting by computing \(E[X^2]\) and utilizing the shortcut formula \(V[X] = E[X]^2 - (E[X])^2\). The final result is \[ V[X] = \frac{ab}{(a+b)^2(a+b+1)} \,. \]

3.4 Linear Functions of Binomial Random Variables

What to take away from this section:

The method of moment-generating functions allows us to determine that…

the sum of \(n\) iid binomial random variables is itself binomially distributed; and

the mean of \(n\) iid binomial random variables has a known but typically unutilized distribution: it is the sample sum distribution with a transformed domain.

Foreshadowing: the sample sum result will allow us to make statistical inferences about the binomial success proportion \(p\), given \(n\) iid normal random variables (and that \(k\) is fixed and known).

Let’s assume we are given \(n\) iid binomial random variables: \(X_1,X_2,\ldots,X_n \sim\) Binomial(\(k\),\(p\)). Can we determine the distribution of \(Y = \sum_{i=1}^n a_i X_i\)? Yes, we can…via the method of moment-generating functions.

Recall: the moment-generating function, or mgf, is a means by which to encapsulate information about a probability distribution. When it exists, the mgf is given by \(m_X(t) = E[e^{tX}]\). Also, if \(Y = \sum_{i=1}^n a_iX_i\), then \(m_Y(t) = m_{X_1}(a_1t) m_{X_2}(a_2t) \cdots m_{X_n}(a_nt)\); if we can identify \(m_Y(t)\) as the mgf for a known family of distributions, then we can immediately identify the distribution for \(Y\) and the parameters of that distribution.

The mgf for the binomial distribution is \[\begin{align*} m_X(t) = E[e^{tX}] &= \sum_{x=0}^k e^{tx} \binom{k}{x} p^x (1-p)^{k-x} \\ &= \sum_{x=0}^k \binom{k}{x} (pe^t)^x (1-p)^{k-x} \,. \end{align*}\] To achieve a closed-form expression, we utilize the binomial theorem: \[ (x+y)^k = \sum_{i=0}^k \binom{k}{i} x^i y^{k-i} \] to re-express \(m_X(t)\): \[ m_X(t) = [pe^t + (1-p)]^k \,. \] Note that one may see this written elsewhere as \((pe^t+q)^k\), where \(q = 1-p\).

The mgf for \(Y = X_+ = \sum_{i=1}^n X_i\) is thus \[ m_Y(t) = \prod_{i=1}^n m_{X_i}(t) = [m_X(t)]^n = [pe^t + (1-p)]^{nk} \,. \] We can see that this has the form of a binomial mgf: \(Y \sim\) Binomial(\(nk\),\(p\)), with expected value \(E[Y] = nkp\) and variance \(V[Y] = nkp(1-p)\). This makes sense, as the act of summing binomial data is equivalent to concatenating \(n\) separate Bernoulli processes into one longer Bernoulli process…whose data can subsequently be modeled using a binomial distribution.

While we can identify the distribution of the sum by name, we cannot

say the same about the sample mean.

We know that the expected value is \(E[\bar{X}] = \mu = kp\)

and that the variance is \(V[\bar{X}] = \sigma^2/n = kp(1-p)/n\),

but when we attempt to use the mgf method with

\(a_i = 1/n\) instead of \(a_i = 1\), we find that

\[

m_{\bar{X}}(t) = [pe^{t/n} + (1-p)]^{nk} \,.

\]

Changing \(t\) to \(t/n\) has the effect of creating an mgf that does not

have the form of any known mgf.

However, we do know the details of the distribution: its pmf is identical

to that of the binomial distribution, but its domain is

\(\{0,1/n,2/n,\ldots,k\}\) rather than \(\{0,1,\ldots,nk\}\).

(We can derive this result by making

the transformation \(X_+ \rightarrow X_+/n\),

as we see below in an example.)

We could define the sample mean cdf ourselves

using our own R function, as in, e.g.,

However, there is no particular need to do this: as we will see, if we wish to construct a confidence interval for \(p\), we can just use \(X_+\) as our statistic, and we will get the same result as if we had used the sample mean. (We note, for completeness, that we could also utilize the Central Limit Theorem if \(n \gtrsim 30\), but as we know the sampling distributions of \(X_+\) and \(\bar{X}\) there is no need to fall back upon approximations.)

3.4.1 Geometric Random Variable: Moment-Generating Function

Recall that a geometric distribution is equivalent to a negative binomial distribution with number of successes \(s = 1\); its probability mass function is \[ p_X(x) = p (1-p)^x \,, \] with \(x = \{0,1,\ldots\}\) and \(p \in [0,1]\). The moment-generating function for a geometric random variable is thus \[\begin{align*} m_X(t) = E[e^{tX}] &= \sum_{x=0}^{\infty} e^{tx} p (1-p)^x = p \sum_{x=0}^\infty e^{tx} (1-p)^x \\ &= p \sum_{x=0}^\infty [e^t(1-p)]^x = \frac{p}{1-e^t(1-p)} \,. \end{align*}\] The last equality utilizes the formula for the sum of an infinite geometric series: \(\sum_{i=0}^\infty x^i = (1-x)^{-1}\), when \(\vert x \vert < 1\). (If \(t < 0\) and \(p > 0\), then the condition that \(\vert e^t(1-p) \vert < 1\) holds.)

The sum of \(s\) iid geometric random variables has the moment-generating function \[ m_Y(t) = \prod_{i=1}^s m_{X_i}(t) = \left[ m_X(t) \right]^n = \left[\frac{p}{1-e^t(1-p)}\right]^s \,. \] This is the mgf for a negative binomial distribution for \(s\) successes. In the same way that the sum of \(k\) Bernoulli random variables is a binomially distributed random variable, the sum of \(s\) geometric random variables is a negative binomially distributed random variable.

3.4.2 Binomial Data: Sample Mean Distribution

Let’s assume we are given \(n\) iid binomial random variables: \(X_1,X_2,\ldots,X_n \sim\) Binomial(\(k\),\(p\)). As we observe above, the distribution of the sum \(X_+ = \sum_{i=1}^k X_i\) is binomial with mean \(nkp\) and variance \(nkp(1-p)\).

The sample mean is \(\bar{X} = X_+/n\), and so \[ F_{\bar{X}}(\bar{x}) = P(\bar{X} \leq \bar{x}) = P(X_+ \leq n\bar{x}) = \sum_{x_+=0}^{n\bar{x}} p_{X_+}(x_+) \,. \] Because \(n\bar{x}\) is integer-valued by definition, we do not need to round down here. Since we are dealing with a discrete distribution and not a continuous one, we cannot simply take the derivative of \(F_{\bar{X}}(\bar{x})\) to find \(f_{\bar{X}}(\bar{x})\)…but what we can do is assess the jump in the cumulative distribution function at each step, because that is the pmf. We find that \[ f_{\bar{X}}(\bar{x}) = P(X_+ \leq n\bar{x}) - P(X_+ \leq n\bar{x}-1) = f_{X_+}(x_+) = f_{X_+}(n\bar{x})\,. \] In other words, the pmf for \(\bar{X}\) is equivalent to that for \(X_+\), just with a different domain (\({0,1/n,2/n,\ldots,k}\) versus \({0,1,\ldots,nk}\).

3.4.3 Probability Generating Functions

Moment-generating functions are not the only means by which we can work with linear functions of discrete random variables. Another method is that of probability generating functions, or pgfs, in which we utilize the Law of the Unconcious Statistician to create power-series representations of probability mass functions: \[ g_X(t) = E[t^X] = \sum_{x=0}^\infty t^x p_X(x) \,. \] (Mathematicians would call this a Mellin transform.) We note immediately that we can only compute pgfs for distributions whose domains consist of the full set of, or a subset of, the values \(\{0,1,\ldots,\infty\}\). (For instance, the binomial distribution has domain \(\{0,1,\ldots,k\}\), so \(p_X(k+1) = p_X(k+2) = \cdots = 0\).)

What is the pgf for a binomial distribution? \[\begin{align*} g_X(t) &= \sum_{x=0}^k t^x p_X(x) \\ &= \sum_{x=0}^k t^x \binom{k}{x} p^x (1-p)^{k-x} \\ &= \sum_{x=0}^k \binom{k}{x} (tp)^x (1-p)^{k-x} \\ &= \left[ tp + (1-p) \right]^k \,, \end{align*}\] where in the last step we utilize the binomial theorem to achieve a closed-form expression for \(g_X(t)\).

What is the pgf good for? First, we can use a pgf to compute a factorial moment: \(\mu_{[i]} = E[X(X-1) \cdots (X-i+1)]\). (Earlier, we used the factorial moment \(E[X(X-1)]\) of the binomial distribution to compute the variance of binomial random variables.) We can compute such a moment from \(g_X(t)\) as follows: \[ E[X(X-1) \cdots (X-i+1)] = \mu_{[i]} = \left. \frac{d^j}{dt^j} g_X(t) \right|_{t=1} \,. \] For instance, we found that \(E[X(X-1)] = k(k-1)p^2\) for a binomial distribution, by playing tricks with summations. With the pgf, we find that \[\begin{align*} \left. \frac{d^2}{dt^2} \left[ tp + (1-p) \right]^k \right|_{t=1} &= \left. \frac{d}{dt} k \left[ tp + (1-p) \right]^{k-1} p \right|_{t=1} \\ &= \left. k (k-1) \left[ tp + (1-p) \right]^{k-2} p^2 \right|_{t=1} \\ &= k (k-1) p^2 \left[ p + (1-p) \right]^{k-2} = k(k-1)p^2 \,. \end{align*}\]

Second, we can use a pgf to generate probability mass function values: \[ p_X(i) = \left. \frac{1}{i!} \frac{d^j}{dt^j} g_X(t) \right|_{t=0} \,. \] Hence, for a binomial distribution, \[\begin{align*} p_X(1) &= \left. \frac{d}{dt} \left[ tp + (1-p) \right]^k \right|_{t=0} \\ &= \left. k \left[ tp + (1-p) \right]^{k-1} p \right|_{t=0} \\ &= k p (1-p)^{k-1} \,, \end{align*}\] etc. While this might not seem particularly useful at first glance, if \(\{X_1,\ldots,X_n\}\) are \(n\) independent (but not necessarily identically distributed) discrete random variables, and if \(Y = \sum_{i=1}^n a_i X_i\), then \[ g_Y(t) = g_{X_1}(t^{a_1}) g_{X_2}(t^{a_2}) \cdots g_{X_n}(t^{a_n}) \,, \] and one can use, e.g., symbolic differentiation to determine the full probability mass function for \(Y\), if one cannot just recognize \(Y\)’s distribution. (For instance, if \(X_1,X_2 \sim \mbox{Binom}(k,p)\), then the pgf for \(Y = X_1 + X_2\) is \([tp + (1-p)]^{k+k}\), meaning that \(Y \sim \mbox{Binom}(2k,p)\).

To be clear: in the context of this book, probability-generating functions do not offer any utility to the reader that moment-generating functions don’t already provide. (Their primary advantage is to allow us to compute pmfs for less straightforward linear functions of discrete random variables, which is useful to know\(-\)this allows us to perform statistical inference given such statistics\(-\)but doing this is beyond the book’s current scope.)

3.5 Order Statistics

What to take away from this section:

Order statistics are the observed data of our sample sorted into ascending order: e.g., \(X_{(1)}\) is the datum with the smallest observed value, and \(X_{(n)}\) is the datum with the largest observed value.

The properties of the binomial distribution allow us to derive the probability mass or density function (and cumulative distribution function) for an order statistic.

Foreshadowing: by being able to express the cdf of an order statistic, we are in a position to, e.g., make statistical inferences about a distribution parameter \(\theta\) using the sample median or the sample range.

Let’s suppose that we have sampled \(n\) iid random variables \(\{X_1,\ldots,X_n\}\) from some arbitrary distribution. Previously, we have summarized such data with the sample mean and the sample variance. However, there are other summary statistics, some of which are only calculable if we sort the data into ascending order: \(\{X_{(1)},\ldots,X_{(n)}\}\). These are dubbed order statistics and the \(j^{th}\) order statistic is the sample’s \(j^{th}\) smallest value (i.e., the smallest-valued datum in the sample is \(X_{(1)}\) and the largest-valued datum is \(X_{(n)}\)). Examples of statistics based on ordering include \[\begin{align*} \mbox{Range:}& ~~X_{(n)} - X_{(1)} \\ \mbox{Median:}& ~~X_{(n+1)/2} ~ \mbox{if $n$ is odd} \\ & ~~(X_{n/2}+X_{(n+1)/2})/2 ~ \mbox{if $n$ is even} \,. \end{align*}\] The most important point to keep in mind is that the probability mass and density functions for order statistics differ from the pmfs and pdfs for their constituent iid data. For instance, if we sample \(n\) data from a \(\mathcal{N}(0,1)\) distribution, we would not expect the minimum value to be distributed the same way; if anything, the mean should take on smaller and smaller values, and the variance of those values should decrease, as \(n\) increases.

So: why are we discussing order statistics here, in the middle of a discussion of the binomial distribution? It is because we can derive, e.g., the cdf for an order statistic by utilizing the binomial probability mass function.

![\label{fig:order}If we have, e.g., a probability density function $f_X(x)$ whose domain is $[a,b]$, and we view success as sampling a datum less than a given value $x$, then when we sample $n$ data, the number that have values $\leq x$ is a binomial random variable with $k=n$ and $p = F_X(x)$.](figures/order.png)

Figure 3.6: If we have, e.g., a probability density function \(f_X(x)\) whose domain is \([a,b]\), and we view success as sampling a datum less than a given value \(x\), then when we sample \(n\) data, the number that have values \(\leq x\) is a binomial random variable with \(k=n\) and \(p = F_X(x)\).

See Figure 3.6. Without loss of generality, we can assume that \(f_X(x) > 0\) for \(x \in [a,b]\) and that we sample \(n\) data from this distribution. The number of data \(X\) that have value less than some arbitrarily chosen \(x\) is a binomial random variable: \[ Y \sim \mbox{Binomial}(n,p=F_X(x)) \] What is the probability that the \(j^{th}\) ordered datum has a value \(\leq x\)? That’s equivalent to asking for the probability that \(Y \geq j\), i.e., did we see at least \(j\) successes in \(n\) trials? \[ F_{(j)}(x) = P(X_{(j)} \leq x) = P(Y \geq j) = \sum_{i=j}^n \binom{n}{i} [F_X(x)]^i [1 - F_X(x)]^{n-i} \,. \] \(F_{(j)}(x)\) is the cdf for the \(j^{th}\) ordered datum.

What is \(f_{(j)}(x)\) if \(X\) is a continuous random variable?

Recall: a continuous distribution’s pdf is the derivative of its cdf.

Ignoring algebraic details, we can write down the pdf for \(X_{(j)}\) as \[ f_{(j)}(x) = \frac{d}{dx}F_{(j)}(x) = \frac{n!}{(j-1)!(n-j)!} f_X(x) [F_X(x)]^{j-1} [1 - F_X(x)]^{n-j} \,, \] with simplified expressions for the pdfs for the minimum and maximum data values being: \[ f_{(1)}(x) = n f_X(x) [1 - F_X(x)]^{n-1} ~~\mbox{and}~~ f_{(n)}(x) = n f_X(x) [F_X(x)]^{n-1} \,. \]

If, on the other hand, \(X\) is a discrete random variable, then the probability mass function \(p_{(j)}\) is given by the “jumps” seen in the cdf at those values of \(x\) that belong to the distribution’s domain: \[ p_{(j)}(x) = F_X(x) - F_X(x-\Delta x) \,, \] where \(\Delta x\) is the step back to the next smaller value within the domain. For instance, if \(X\) is a binomial random variable, then \(p_{(j)}(x) = F_X(x) - F_X(x-1)\). In an example below, we provide code for computing the vector of pmf values for a discrete order statistic.

3.5.1 Exponential Distribution: Minimum Value

The probability density function for an exponential random variable is \[ f_X(x) = \frac{1}{\theta} \exp\left(-\frac{x}{\theta}\right) \,, \] for \(x \geq 0\) and \(\theta > 0\), and the expected value of \(X\) is \(E[X] = \theta\). What is the pdf for the smallest value among \(n\) iid data sampled from an exponential distribution? What is the expected value for the smallest value?

First, if we do not immediately recall the cumulative distribution function \(F_X(x)\), we can easily derive it: \[ F_X(x) = \int_0^x \frac{1}{\theta} e^{-y/\theta} dy = 1 - \exp\left(-\frac{x}{\theta}\right) \,. \] We plug \(F_X(x)\) into the expression of the pdf of the minimum datum given above: \[\begin{align*} f_{(1)}(x) &= n \frac{1}{\theta} \exp\left(-\frac{x}{\theta}\right) \left[ 1 - \left(1-\exp\left(-\frac{x}{\theta}\right)\right) \right]^{n-1} = n \frac{1}{\theta} \exp\left(-\frac{x}{\theta}\right) \exp\left(-\frac{(n-1)x}{\theta}\right) \\ &= \frac{n}{\theta} \exp\left(-\frac{nx}{\theta}\right) \,. \end{align*}\] \(X_{(1)}\) is thus an exponentially distributed random variable with parameter \(\theta/n\) and expected value \(\theta/n\). We can verify the expected value result as follows. We recognize \[ E[X_{(1)}] = \int_0^\infty x \frac{n}{\theta} e^{-nx/\theta} dx \] as almost having the form of the gamma-function integral \[ \Gamma(u) = \int_0^\infty x^{u-1} e^{-x} dx \,. \] So we implement the variable transformation \(y = nx/\theta\); for this transformation, \(dy = (n/\theta)dx\), and if \(x = 0\) or \(\infty\), \(y = 0\) or \(\infty\) (meaning the integral bounds are unchanged). Our new integral is \[ E[X_{(1)}] = \int_0^\infty \frac{\theta y}{n} \frac{n}{\theta} e^{-y} \frac{\theta}{n} dy = \frac{\theta}{n} \int_0^\infty y e^{-y} dy = \frac{\theta}{n} \Gamma(2) = \frac{\theta}{n} 1! = \frac{\theta}{n} = \frac{E[X]}{n} \,. \]

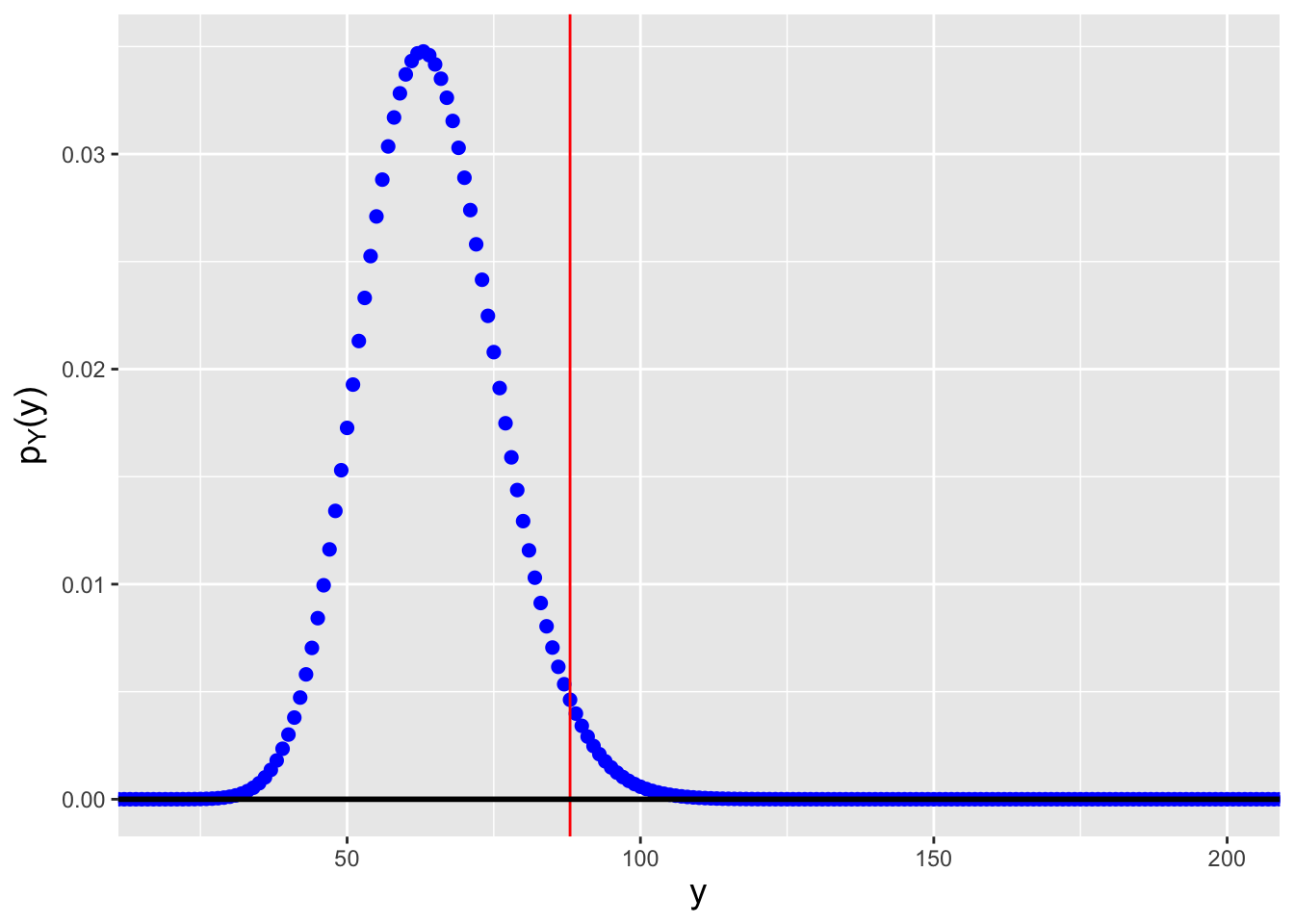

3.5.2 Uniform(0,1) Distribution: Sample Median

The Uniform(0,1) distribution is \[ f_X(x) = 1 ~~~ x \in [0,1] \,. \] Let’s assume that we draw \(n\) iid data from this distribution, with \(n\) odd. Then we can write down the pdf of the sample median, for which \(j = (n+1)/2\): \[ f_{((n+1)/2)}(x) = \frac{n!}{\left(\frac{n-1}{2}\right)! \left(\frac{n-1}{2}\right)!} f_X(x) \left[ F_X(x) \right]^{(n-1)/2} \left[ 1 - F_X(x) \right]^{(n-1)/2} \,. \] Given that \[ F_X(x) = \int_0^x dy = x ~~~ x \in [0,1] \,, \] we can write \[ f_{((n+1)/2)}(x) = \frac{n!}{\left(\frac{n-1}{2}\right)! \left(\frac{n-1}{2}\right)!} x^{(n-1)/2} (1-x)^{(n-1)/2} \,, \] for \(x \in [0,1]\). This function has both the form of a beta distribution (with \(\alpha = \beta = (n+1)/2\)) and the domain of a beta distribution, so \(\tilde{X} \sim\) Beta\(\left(\frac{n+1}{2},\frac{n+1}{2}\right)\).

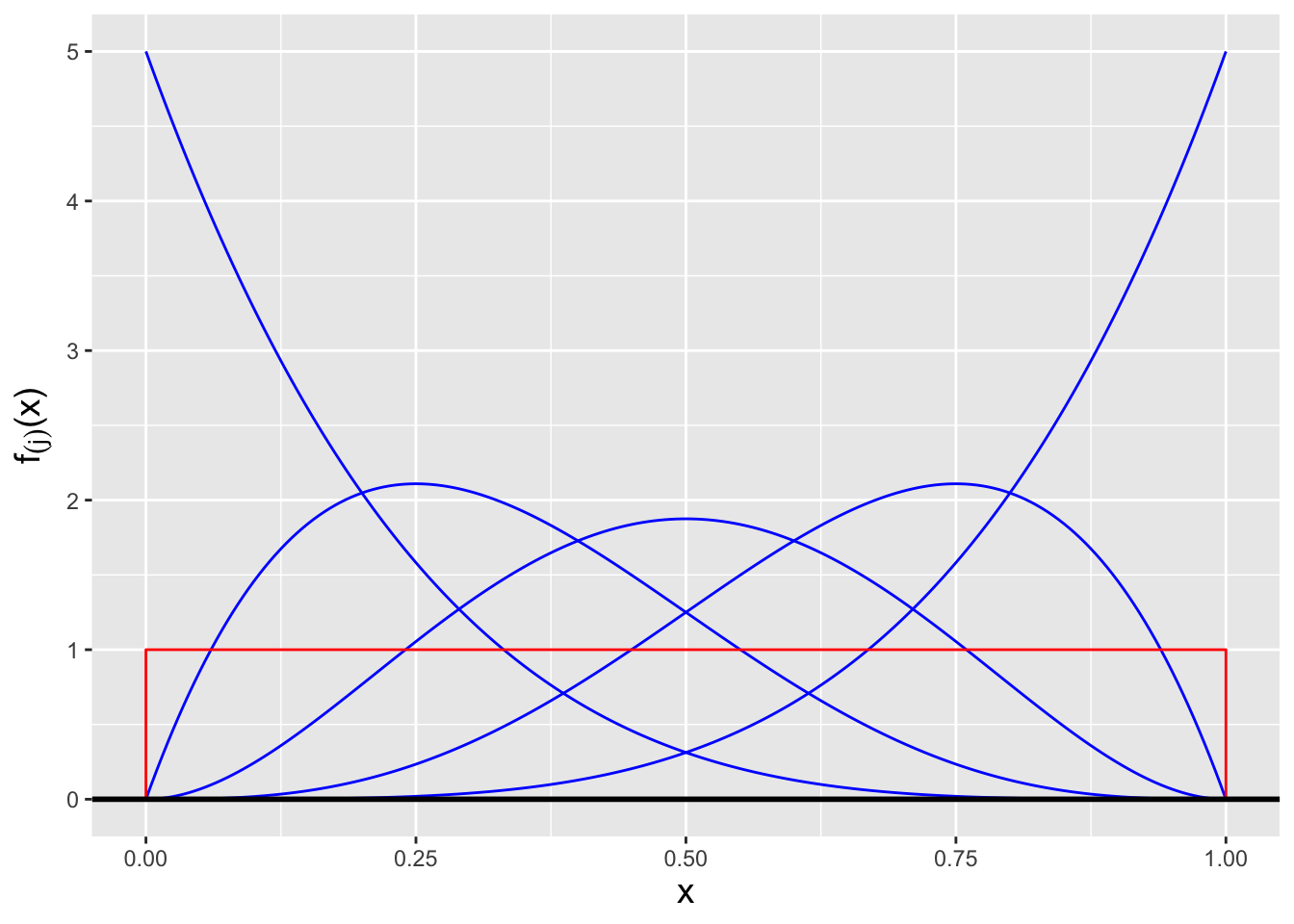

(In fact, we can go further and state a more general result: \(X_{(j)} \sim\) Beta(\(j,n-j+1\)): all the order statistics for data drawn from a Uniform(0,1) distribution are beta-distributed random variables! See Figure 3.7.)

Figure 3.7: The order statistic probability density functions \(f_{(j)}(x)\) for, from left to right, \(j = 1\) through \(j = 5\), for the situation in which \(n = 5\) iid data are drawn from a Uniform(0,1) distribution (overlaid in red). Each pdf is itself a beta distribution, with parameter values \(j\) and \(n-j+1\).

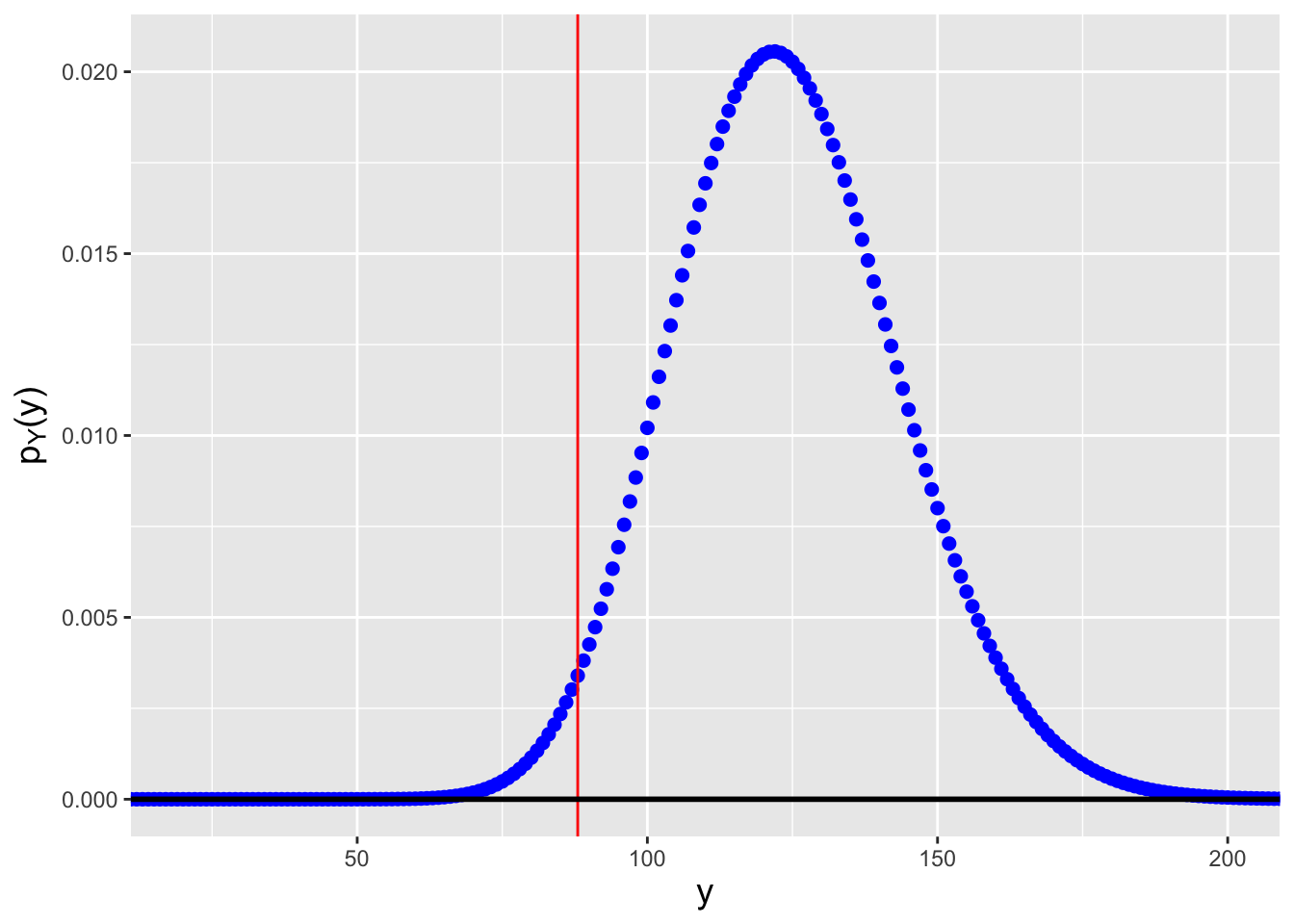

3.5.3 Order Statistic Distribution: Numeric Calculation

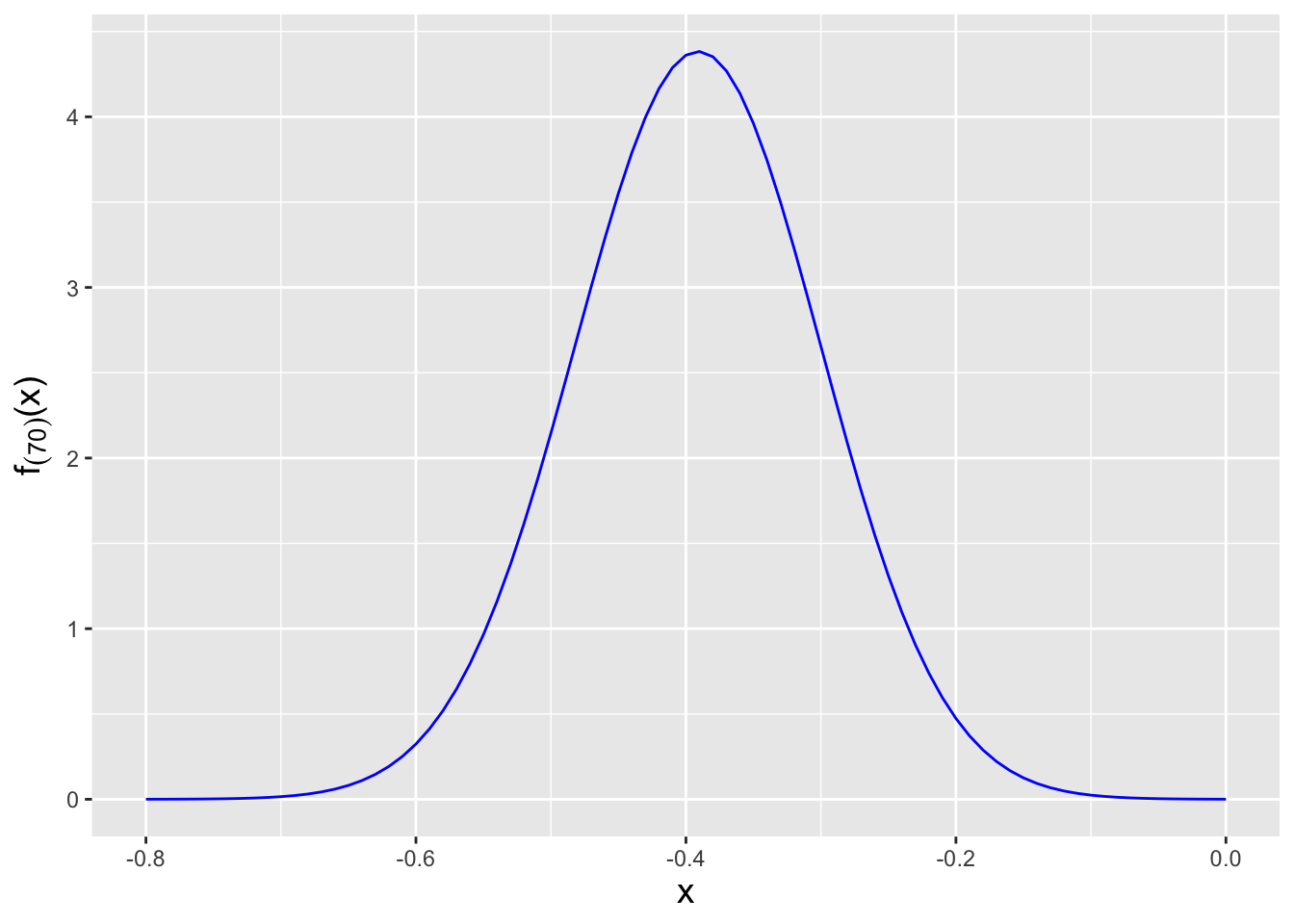

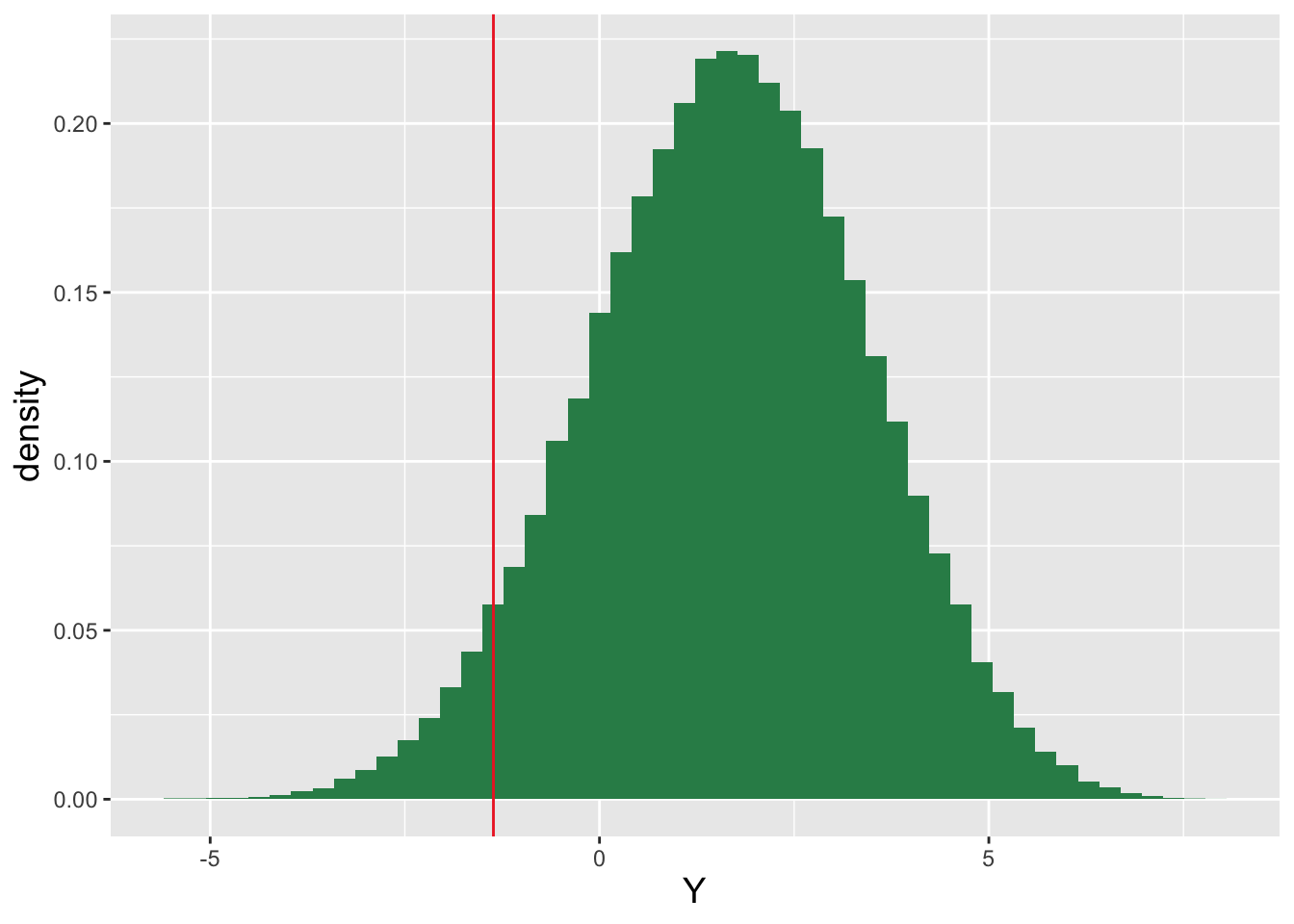

Let’s suppose that we sample \(n = 200\) data from a standard normal distribution. Can we compute, e.g., \(f_{(70)}(x)\), for any given value of \(x\)?

Because we cannot easily determine in this case whether the \(70^{\rm th}\) ordered datum is sampled according to some known, named distribution, we will attack this via direct numeric computation. Except…the expression for \(f_{(j)}(x)\) contains factorial functions, and evaluating factorial functions can lead to computational overflow:

## [1] InfBecause of this, the “safest” approach is to compute the natural logarithm of \(f_{(j)}\): \[ \log f_{(j)} = \log n! + \log f_X(x) + (j-1) \log F_X(x) + (n-j) \log [1 - F_X(x)] - \log (j-1)! - \log (n-j)! \,. \] In code, this would be expressed as

n <- 200

j <- 70

x <- -0.30

f.x <- dnorm(x)

F.x <- pnorm(x)

log.f.j <- lfactorial(n) + log(f.x) + (j-1)*log(F.x) + (n-j)*log(1-F.x) -

lfactorial(j-1) - lfactorial(n-j)

exp(log.f.j)## [1] 2.654509One can repeat this calculation over, e.g., a sequence of \(x\) values in order to visualize the probability density function.

Figure 3.8: The probability density function for the \(j = 70^{\rm th}\) order statistic, given \(n = 200\) data sampled according to a \(\mathcal{N}(\mu = 0,\sigma^2 = 1)\) distribution.

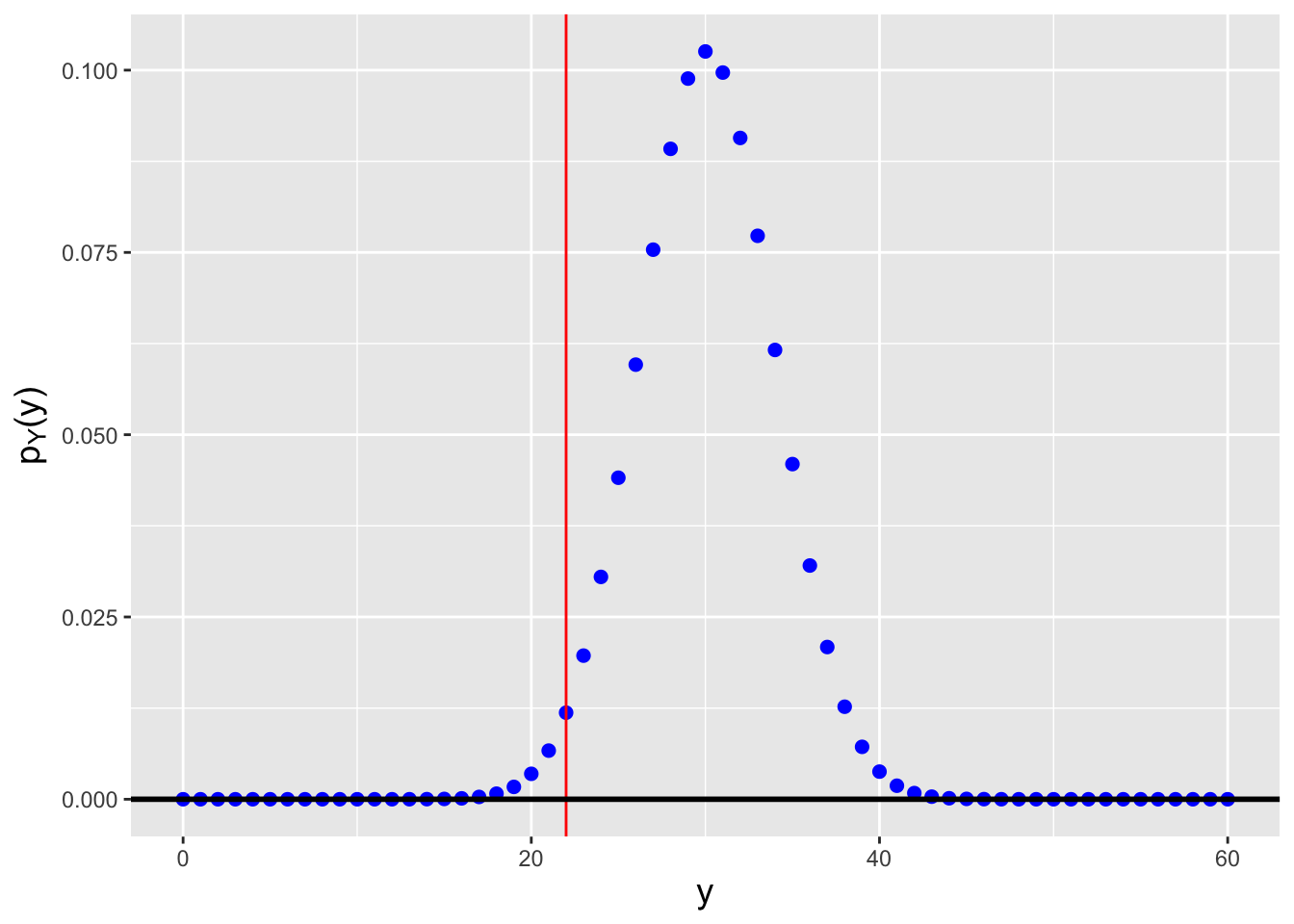

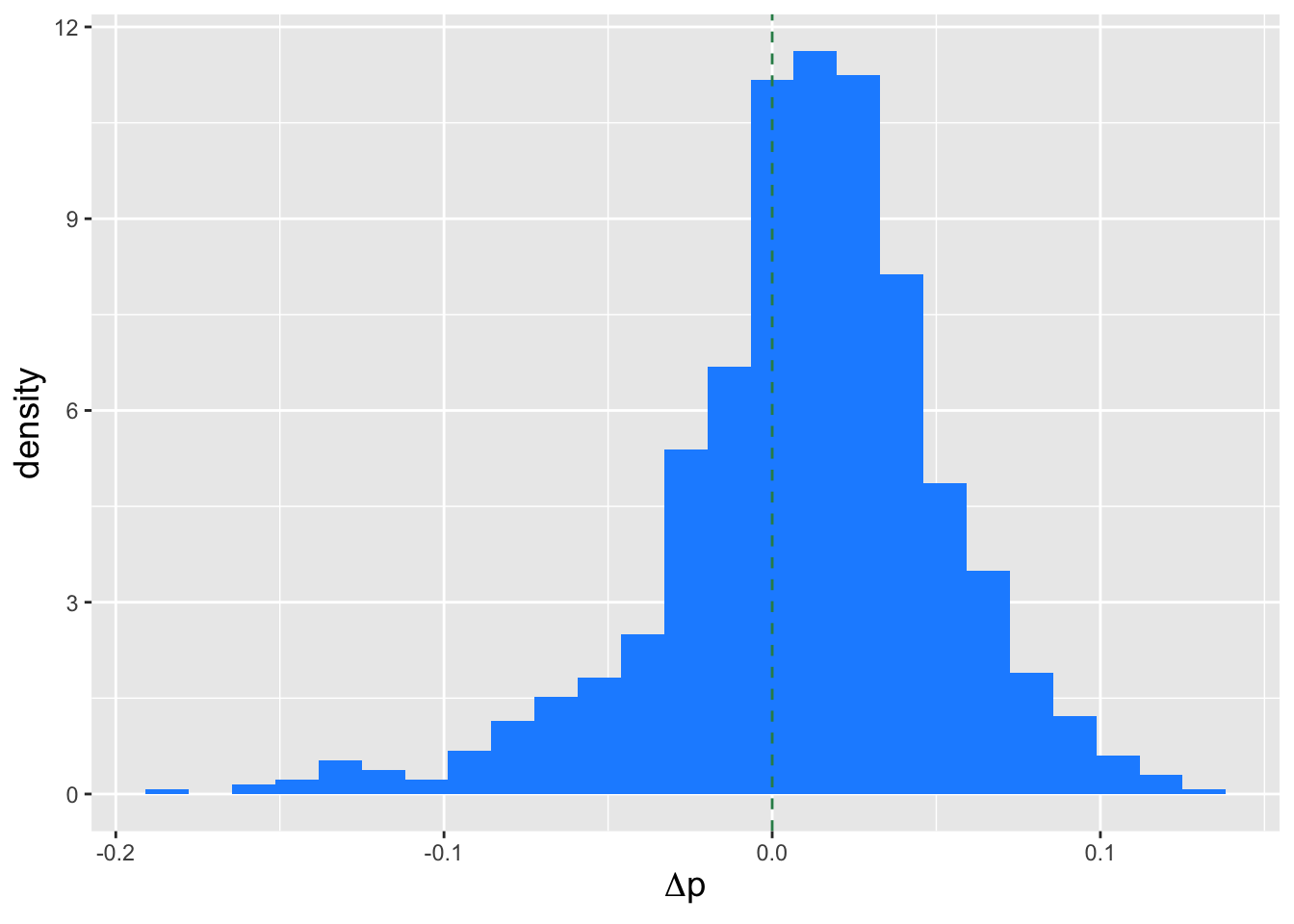

3.5.4 Discrete Order Statistic: Probability Mass Function

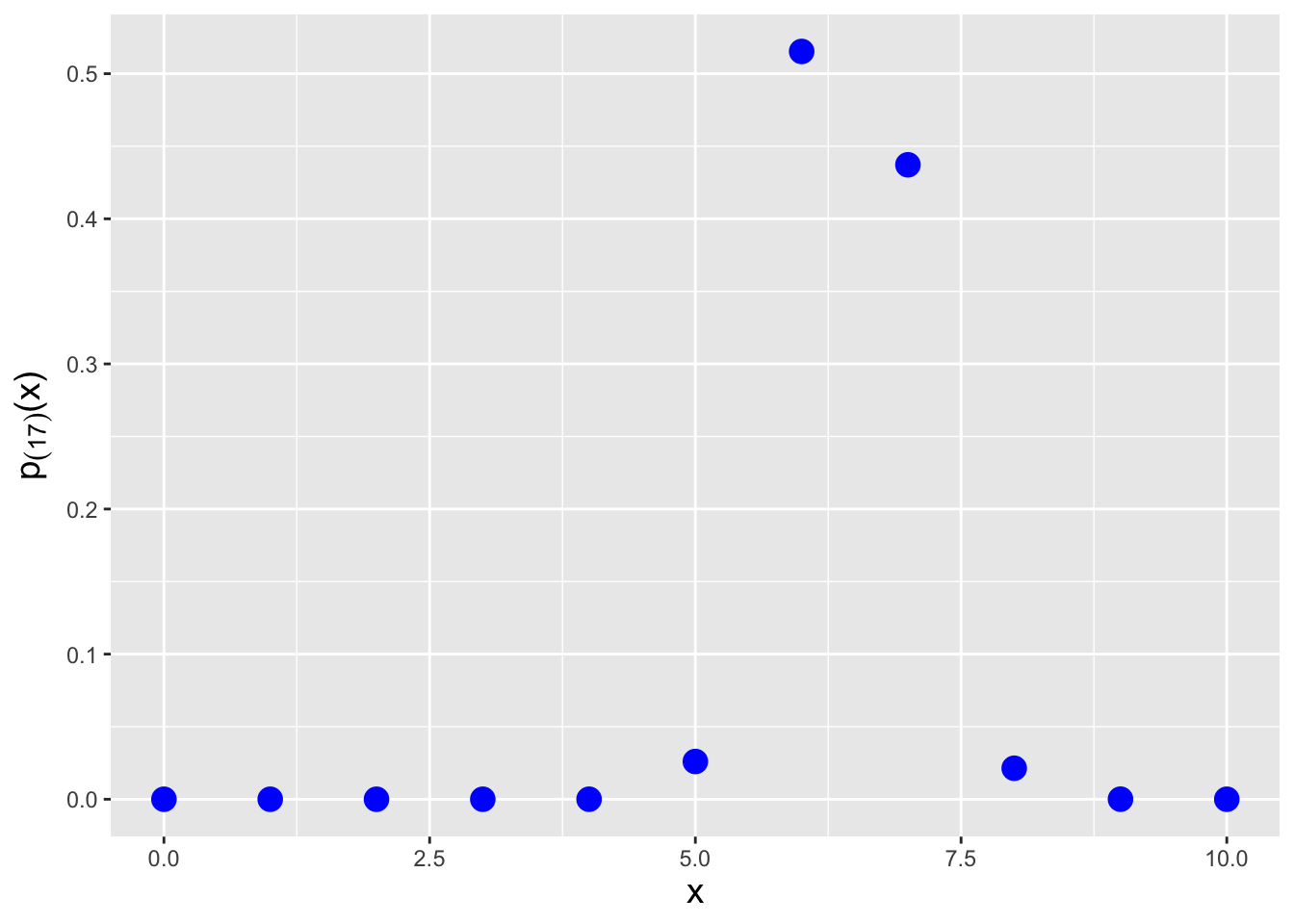

Let’s say that in an experiment, we flip a fair coin \(k = 10\) times and record the number of heads as our random variable \(X\). We then repeat this experiment \(n = 20\) times and arrange the 20 values \(\{X_1,\ldots,X_{20}\}\) into ascending order. What is the probability mass function for the \(17^{\rm th}\) ordered datum? We can numerically determine the pmf using the code below. See Figure 3.9.

# returns the cdf summation given above, for binomially distributed data

pbinom_order <- function(x, k, p, j, n)

{

i <- j:n

sum(choose(n, i) * (pbinom(x, k, p))^i * (1-pbinom(x, k, p))^(n-i))

}

k <- 10

p <- 0.5

j <- 17

n <- 20

# determine pmf for x = {0, 1, ..., k}

pmf_order <- rep(NA,k+1)

pmf_order[1] <- pbinom_order(0, k, p, j, n)

for ( ii in 2:(k+1) ) {

pmf_order[ii] <- pbinom_order(ii-1, k, p, j, n) - pbinom_order(ii-2, k, p, j, n)

}

Figure 3.9: The probability mass function for the \(j = 17^{\rm th}\) order statistic, given \(n = 20\) data sampled according to a Binom(\(k = 10,p = 0.5\)) distribution.

3.6 Point Estimation

What to take away from this section:

Because maximum likelihood estimators for distribution parameters \(\theta\) are not always unbiased (although any bias goes away as the sample size increases), we introduce a second point estimator: the minimum variance unbiased estimator, or MVUE.

The derivation of an MVUE for \(\theta\) hinges upon the identification of a sufficient statistic for \(\theta\).

Single-valued sufficient statistics exist for members of the exponential family of distributions, which includes those distributions typically used in data analyses: the normal, the binomial, etc.

An MVUE does not possess the invariance property, nor is there a guarantee that it converges in distribution to a normal random variable.

In the first two chapters, we introduce a number of concepts related to point estimation, the act of using statistics to make inferences about a population parameter \(\theta\). We…

- assess point estimators using the metrics of bias, variance, mean-squared error, and consistency;

- utilize the Fisher information metric to determine the lower bound on the variance for unbiased estimators (the Cramer-Rao Lower Bound, or CRLB); and

- define estimators via the maximum likelihood algorithm, which generates estimators that are at least asymptotically unbiased and at least asymptotically reach the CRLB, and which converge in distribution to normal random variables.

We will review these concepts in the context of estimating population quantities for binomial distributions below, in the body of the text and in examples. For now…

Recall: the bias of an estimator is the difference between the average value of the estimates it generates and the true parameter value. If \(E[\hat{\theta}-\theta] = 0\), then the estimator \(\hat{\theta}\) is said to be unbiased.

Recall: the value of \(\theta\) that maximizes the likelihood function is the maximum likelihood estimate, or MLE, for \(\theta\). The maximum is found by taking the (partial) derivative of the (log-)likelihood function with respect to \(\theta\), setting the result to zero, and solving for \(\theta\). That solution is the maximum likelihood estimate \(\hat{\theta}_{MLE}\). Also recall the invariance property of the MLE: if \(\hat{\theta}_{MLE}\) is the MLE for \(\theta\), then \(g(\hat{\theta}_{MLE})\) is the MLE for \(g(\theta)\).

Here we will introduce another means by which to define an estimator. The minimum variance unbiased estimator (or MVUE) is the one that has the smallest variance among all unbiased estimators of \(\theta\). The reader’s first thought might be “well, why didn’t we use this estimator in the first place…after all, the MLE is not guaranteed to yield an unbiased estimator, so why have we put off discussing the MVUE?” The primary reasons are that MVUEs are sometimes not definable (i.e., we can reach insurmountable computational roadblocks when trying to derive them), and unlike MLEs, they do not exhibit the invariance property. (For instance, if \(\hat{\theta}_{MLE} = \bar{X}\), then \(\hat{\theta^2}_{MLE} = \bar{X}^2\), but if \(\hat{\theta}_{MVUE} = \bar{X}\), it is not necessarily the case that \(\hat{\theta^2}_{MVUE} = \bar{X}^2\).) However, we should always at least try to define the MVUE, because if we can, it will be at least equal the performance of, if not perform better than, the MLE, in terms of mean-squared error.

There are two steps to carry out when deriving the MVUE:

- determining a sufficient statistic for \(\theta\); and

- correcting any bias that is observed when we utilize that sufficient statistic as our initial estimator.

A sufficient statistic for a parameter \(\theta\) captures all information about \(\theta\) contained in the sample. The sufficiency principle holds that if, e.g., \(Y\) is a sufficient statistic for \(\theta\), and we collect two datasets \(\mathbf{U}\) and \(\mathbf{V}\) such that \(Y(\mathbf{U}) = Y(\mathbf{V})\), then the inferences we make about \(\theta\) given that we observe \(\mathbf{U}\) will be exactly the same as those we would make if we were to observe \(\mathbf{V}\). Using any statistic beyond a sufficient statistic will not lead to improved inferences about \(\theta\). This means that, for instance, combining \(\bar{X}\) (a sufficient statistic for the normal mean \(\mu\) when the variance is known) with, say, the sample median will not reduce the length of confidence intervals for \(\mu\) or change the power of hypothesis tests about \(\mu\), relative to what we would find using \(\bar{X}\) alone. Note that utilizing the sufficiency principle is but one way by which statisticians can attempt data reduction prior to inference; two others, which are beyond the scope of this book, are the likelihood and equivalence principles. For a deeper treatment of sufficiency and data reduction than we provide here, the interested reader should consult Chapter 6 of Casella and Berger (2002).

A statistic \(Y(\mathbf{X})\) is a sufficient statistic for \(\theta\) if the ratio \[\begin{align*} \frac{f_X(\mathbf{x} \vert \theta)}{f_Y(y \vert \theta)} \overset{iid}{\rightarrow} \frac{\prod_{i=1}^n f_X(x_i \vert \theta)}{f_Y(y \vert \theta)} \,, \end{align*}\] where \(f_Y(y \vert \theta)\) is the probability density function of the sampling distribution for \(Y\), is constant as a function of \(\theta\). We note that by this definition, \(\mathbf{X}\) itself comprises a sufficient statistic for \(\theta\), as does the full set of order statistics \(\{X_{(1)},\ldots,X_{(n)}\}\). The relevant questions are: can we define a sufficient statistic that actually reduces the data? And if so, how can we identify it?

Let’s answer the second question first. The simplest way by which to identify a sufficient statistic is to write down the likelihood function and then to factorize it into two separate functions, one of which depends only on the observed data and the other of which depends on both the observed data and the parameter of interest: \[ \mathcal{L}(\theta \vert \mathbf{x}) = h(\mathbf{x}) \cdot g(\mathbf{x},\theta) \,. \] This is the so-called factorization criterion. In the expression \(g(\mathbf{x},\theta)\), the data will appear within, e.g., a summation (e.g., \(\sum_{i=1}^n x_i\)) or a product (e.g., \(\prod_{i=1}^n x_i\)), and we would identify that summation or product as a sufficient statistic for \(\theta\). For instance, for the binomial distribution, the factorized likelihood (given \(n\) iid data) is \[ \mathcal{L}(p \vert \mathbf{x}) = \prod_{i=1}^n \binom{k}{x_i} p^{x_i} (1-p)^{k-x_i} = \underbrace{\left[ \prod_{i=1}^n \binom{k}{x_i} \right]}_{h(\mathbf{x})} \underbrace{p^{\sum_{i=1}^n x_i} (1-p)^{nk-\sum_{i=1}^n x_i}}_{g(\sum_{i=1}^n x_i,p)} \,. \] By inspecting the function \(g(\mathbf{x},p)\), we immediately determine that \(Y = X_+ = \sum_{i=1}^n X_i\) is a sufficient statistic for \(p\). We say that \(X_+\) is “a” sufficient statistic because sufficient statistics are not unique: any one-to-one function of a sufficient statistic is also a sufficient statistic. For instance, if \(X_+\) is a sufficient statistic for \(\theta\), then so is \(\bar{X}\), etc.

Now we return to the first question above: can we define a sufficient statistic that actually reduces the data? The answer is “not always.” In fact, across all possible distributions, it is relatively rare that we can do this. The Pitman-Koopman-Darmois theorem holds that, among families of distributions that do not have domain-specifying parameters, only members of the exponential family of distributions have sufficient statistics whose dimension does not increase as the sample size increases. (This theorem motivates, in large part, why we introduce the exponential family in Chapter 2.)

Recall: the exponential family of distributions is the set of distributions whose probability mass or density functions can be expressed as \[ h(x) \exp\left[\left(\sum_{i=1}^p \eta_i(\boldsymbol{\theta}) T_i(x)\right) - A(\boldsymbol{\theta})\right] \,, \] where \(\boldsymbol{\theta}\) is a vector of parameters (e.g., \(\boldsymbol{\theta} = \{\mu,\sigma^2\}\) for a normal distribution). Note that none of the parameters can be a domain-specifying parameter.

Let’s assume that we are dealing with distributions that have just one free parameter \(\theta\), like the binomial distribution. Then the expression above simplifies to \[\begin{align*} h(x) \exp\left[ \eta(\theta) T(x) - A(\theta) \right] \,, \end{align*}\] where \(T(x)\) is a sufficient statistic. As shown earlier in this chapter, for a single binomially distributed datum, \(T(X) = X\). If on the other hand we collect \(n\) iid data, then a sufficient statistic is \(T(\mathbf{X}) = \sum_{i=1}^n X_i\), and as we can see it is still a single number\(-\)i.e., it has a dimension of one\(-\)regardless of the value of \(n\). Now, restricting ourselves to exponential family distributions in inferential situations would appear to limit the practical utility of the sufficiency principle…except it is the case that many (if not most) of the distributions that we typically utilize in inference are indeed exponential-family distributions, including all the ones that we focus on in chapters 2-4 of this book.

(We note here for completeness that once we identify a sufficient statistic, we are technically required to demonstrate that it is both minimally sufficient and complete, as it needs to be both so that we can use it to, e.g., determine the minimum variance unbiased estimator. It suffices to say here that the sufficient statistics that we identify for exponential family distributions via likelihood factorization are minimally sufficient and complete. See Chapter 7 for more details on minimal sufficiency and completeness.)

If \(Y\) is a sufficient statistic for \(\theta\), and there is a function \(h(Y)\) that is an unbiased estimator for \(\theta\) and that depends on the data only through \(Y\), then \(h(Y)\) is the MVUE for \(\theta\). (A reader who is interested in the underlying theory should reference the Lehmann-Scheff'e theorem.) Recall from above that one-to-one functions of sufficient statistics are themselves sufficient statistics, hence \(h(Y)\) is also going to be sufficient for \(\theta\). Here, given \(Y = \sum_{i=1}^n X_i\), we need to find a function \(h(\cdot)\) such that \(E[h(Y)] = p\). Earlier in this chapter, we determined that the distribution for the sum of iid binomial random variables is Binomial(\(nk\),\(p\)), and thus we know that this distribution has expected value \(nkp\). Thus \[ E\left[Y\right] = nkp ~\implies~ E\left[\frac{Y}{nk}\right] = p ~\implies~ h(Y) = \frac{Y}{nk} = \frac{\bar{X}}{k} \] is the MVUE for \(p\). The variance of \(\hat{p}\) is \[ V[\hat{p}] = V\left[\frac{\bar{X}}{k}\right] = \frac{1}{k^2}V[\bar{X}] = \frac{1}{k^2} \frac{V[X]}{n} = \frac{1}{k^2}\frac{kp(1-p)}{n} = \frac{p(1-p)}{nk} \,. \] We know that this variance abides by the restriction \[ V[\hat{p}] \geq \frac{1}{nI(p)} = -\frac{1}{nE\left[\frac{d^2}{dp^2} \log p_X(X \vert p) \right]} \,. \] But is it equivalent to the lower bound for unbiased estimators, the CRLB? (Note that in particular situations, the MVUE may have a variance larger than the CRLB; when this is the case, unbiased estimators that achieve the CRLB simply do not exist.) For the binomial distribution, \[\begin{align*} p_{X}(x) &= \binom{k}{x} p^{x} (1-p)^{k-x} \\ \log p_{X}(x) &= \log \binom{k}{x} + x \log p + (k-x) \log (1-p) \\ \frac{d}{dp} \log p_{X}(x) &= 0 + \frac{x}{p} - \frac{k-x}{(1-p)} \\ \frac{d^2}{dp^2} \log p_{X}(x) &= -\frac{x}{p^2} - \frac{k-x}{(1-p)^2} \\ E\left[\frac{d^2}{dp^2} \log p_{X}(X)\right] &= -\frac{1}{p^2}E[X] - \frac{1}{(1-p)^2}E[k-X] \\ &= -\frac{kp}{p^2}-\frac{k-kp}{(1-p)^2} \\ &= -\frac{k}{p}-\frac{k}{1-p} = -\frac{k}{p(1-p)} \,. \end{align*}\] The lower bound on the variance is thus \(p(1-p)/(nk)\), and so the MVUE does achieve the CRLB. We cannot define a better unbiased estimator for \(p\) than \(\bar{X}/k\).

3.6.1 The MLE for the Binomial Success Probability

Recall: the value of \(\theta\) that maximizes the likelihood function is the maximum likelihood estimate, or MLE, for \(\theta\). The maximum is found by taking the (partial) derivative of the (log-)likelihood function with respect to \(\theta\), setting the result to zero, and solving for \(\theta\). That solution is the maximum likelihood estimate \(\hat{\theta}_{MLE}\).

Above, we determined that the likelihood function for \(n\) iid binomial random variables \(\{X_1,\ldots,X_n\}\) is \[ \mathcal{L}(p \vert \mathbf{x}) = \left[\prod_{i=1}^n \binom{k}{x_i} \right] p^{\sum_{i=1}^n x_i} (1-p)^{nk-\sum_{i=1}^n x_i} \,. \] Recall that the value \(\hat{p}_{MLE}\) that maximizes \(\mathcal{L}(p \vert \mathbf{x})\) also maximizes \(\ell(p \vert \mathbf{x}) = \log \mathcal{L}(p \vert \mathbf{x})\), which is considerably easier to work with: \[\begin{align*} \ell(p \vert \mathbf{x}) &= \left(\sum_{i=1}^n x_i\right) \log p + \left(nk - \sum_{i=1}^n x_i\right) \log (1-p) \\ \ell'(p \vert \mathbf{x}) &= \frac{d}{dp} \ell(p \vert \mathbf{x}) = \frac{1}{p} \sum_{i=1}^n x_i - \frac{1}{1-p} \left(nk - \sum_{i=1}^n x_i\right) \,. \end{align*}\] (Here, we drop the binomial coefficient, which does not depend on \(p\) and thus differentiates to zero.) After setting \(\ell'(p \vert \mathbf{x})\) to zero and rearranging terms, we find that \[ p = \frac{1}{nk}\sum_{i=1}^n x_i ~\implies~ \hat{p}_{MLE} = \frac{\bar{X}}{k} \,. \] The MLE matches the MVUE, thus we know that the MLE is unbiased and we know that it achieves the CRLB.

Recall: the bias of an estimator is the difference between the average value of the estimates it generates and the true parameter value. If \(E[\hat{\theta}-\theta] = 0\), then the estimator \(\hat{\theta}\) is said to be unbiased.

Recall: the Cramer-Rao Lower Bound (or CRLB) is the lower bound on the variance of any unbiased estimator. If an unbiased estimator achieves the CRLB, it is the MVUE…but it can be the case that the MVUE does not achieve the CRLB. For a discrete distribution, the CRLB is given by \[ V_{\rm CRLB}[\hat{\theta}] = -\left(nE\left[\frac{d^2}{d\theta^2} \log p_X(X \vert p) \right]\right)^{-1} = \frac{1}{nI(\theta)} \] where \(I(\theta)\) is the Fisher information: \[ I(\theta) = -E\left[ \frac{\partial^2}{\partial \theta^2} \log f_X(x \vert \theta) \right] \,. \]

A useful property of MLEs is the invariance property, which holds that the MLE for a function of \(\theta\) is given by applying the same function to the MLE itself. Thus

- the MLE for the population mean \(E[X] = \mu = kp\) is \(\hat{\mu}_{MLE} = \bar{X}\); and

- the MLE for the population variance \(V[X] = \sigma^2 = kp(1-p)\) is \(\widehat{\sigma^2}_{MLE} = \bar{X}(1-\bar{X}/k)\).

Last, note \(\hat{p}_{MLE}\) is a consistent estimator (like all MLEs) and that asymptotically, it converges in distribution to a normal random variable (like all MLEs): \[ \hat{p}_{MLE} \overset{d}{\rightarrow} Y \sim \mathcal{N}\left(p,\frac{1}{nI(p)} = \frac{p(1-p)}{nk}\right) \,. \]

Recall: an estimator is consistent if its mean-squared error, \(B[\hat{\theta}]^2 + V[\hat{\theta}]\), goes to zero as the sample size \(n\) goes to infinity.

3.6.2 Normal Distribution: Sufficient Statistics

If we have \(n\) iid data drawn from a normal distribution with unknown mean \(\mu\) and unknown variance \(\sigma^2\), then the factorized likelihood is \[ \mathcal{L}(\mu,\sigma^2 \vert \mathbf{x}) = \underbrace{(2 \pi \sigma^2)^{-n/2} \exp\left(-\frac{1}{2\sigma^2}\sum_{i=1}^n x_i^2\right)\exp\left(\frac{\mu}{\sigma^2}\sum_{i=1}^n x_i\right)\exp\left(-\frac{n\mu^2}{2\sigma^2}\right)}_{g(\sum x_i^2, \sum x_i,\mu,\sigma)} \cdot \underbrace{1}_{h(\mathbf{x})} \,. \] Here, we identify \(\sum x_i^2\) and \(\sum x_i\) as joint sufficient statistics: we need two pieces of information to jointly estimate \(\mu\) and \(\sigma^2\). (To be clear: it is not necessarily the case that one of the parameters matches to one of the statistics…rather, the two statistics are jointly sufficient for estimation.) We thus cannot proceed further to define an MVUE for \(\mu\) or for \(\sigma^2\), without knowing the joint bivariate probability density function for \(Y_1 = \sum_{i=1}^n X_i^2\) and \(Y_2 = \sum_{i=1}^n X_i\).

(Note that we can proceed if we happen to know either \(\mu\) or \(\sigma^2\); if one of these values is fixed, then there will only be one sufficient statistic and we can determine the MVUE for the other, freely varying parameter.)

3.6.3 Sufficiency: When We Cannot Reduce Data

As stated above, it is often impossible to reduce data to, e.g., a single-number summary. The following examples, utilizing distributions that are not exponential-family distributions, illustrate this.

- A Laplace distribution with scale parameter 1 has the probability density function \[\begin{align*} f_X(x \vert \theta) &= \frac12 e^{-\vert x - \theta \vert} \,, \end{align*}\] where \(x \in (-\infty,\infty)\) and \(\theta \in (-\infty,\infty)\). If we observe \(n\) iid data, then likelihood factorization yields \[\begin{align*} \mathcal{L}(\theta \vert \mathbf{x}) = \frac{1}{2^n} e^{-\sum_{i=1}^n \vert x_i - \theta \vert} \,. \end{align*}\] \(Y = \sum_{i=1}^n \vert X_i - \theta \vert\) is not a sufficient statistic as it contains the (unknown) parameter: a sufficient statistic for \(\theta\) cannot contain \(\theta\) itself! We cannot isolate a function of \(\mathbf{X}\) alone, and thus no data reduction is possible.

- A Cauchy distribution with scale parameter 1 has the probability density function \[\begin{align*} f_X(x \vert \theta) = \frac{1}{\pi (x-\theta)^2} \,, \end{align*}\] where \(x \in (-\infty,\infty)\) and \(\theta \in (-\infty,\infty)\). If we observe \(n\) iid data, then likelihood factorization yields \[\begin{align*} \mathcal{L}(\theta \vert \mathbf{x}) = \frac{1}{\pi^n} \frac{1}{\prod_{i=1}^n (x_i-\theta)^2} \,. \end{align*}\] Again, we cannot isolate a function of the \(X_i\)’s alone: no data reduction is possible.

3.6.4 Exponential Distribution: MVUE for Population Mean

The exponential distribution is \[ f_X(x) = \frac{1}{\theta} \exp\left(-\frac{x}{\theta}\right) \,, \] where \(x \geq 0\) and \(\theta > 0\), and where \(E[X] = \theta\) and \(V[X] = \theta^2\). Let’s assume that we have \(n\) iid data drawn from this distribution. Can we define the MVUE for \(\theta\)? For \(\theta^2\)?

The likelihood function is \[ \mathcal{L}(\theta \vert \mathbf{x}) = \prod_{i=1}^n f_X(x_i \vert \theta) = \frac{1}{\theta^n}\exp\left(-\frac{1}{\theta}\sum_{i=1}^n x_i \right) = h(\mathbf{x}) \cdot g(\theta,\mathbf{x}) \,. \] Here, there are no terms that are functions of only the data, so \(h(\mathbf{x}) = 1\) and a sufficient statistic is \(Y = \sum_{i=1}^n X_i\). We compute the expected value of \(Y\): \[ E[Y] = E\left[\sum_{i=1}^n X_i\right] = \sum_{i=1}^n E[X_i] = \sum_{i=1}^n \theta = n\theta \,. \] The expected value of \(Y\) is not \(\theta\), so \(Y\) is not unbiased…but we can see immediately that \(E[Y/n] = \theta\), so that \(Y/n\) is unbiased. Thus the MVUE for \(\theta\) is thus \(\hat{\theta}_{MVUE} = Y/n = \bar{X}\).

Note that the MVUE does not possess the invariance property…it is not necessarily the case that \(\hat{\theta^2}_{MVUE} = \bar{X}^2\).

Let’s propose a function of \(Y\) and see if we can use that to define \(\hat{\theta^2}_{MVUE}\): \(h(Y) = Y^2/n^2 = \bar{X}^2\). (To be clear, we are simply proposing a function and seeing if it helps us define what we are looking for. It might not. If not, we can try again with another function of \(Y\).) Utilizing what we know about the sample mean, we can write down that \[ E[\bar{X}^2] = V[\bar{X}] + (E[\bar{X}])^2 = \frac{V[X]}{n} + (E[X])^2 = \frac{\theta^2}{n}+\theta^2 = \theta^2\left(\frac{1}{n} + 1\right) \,. \] So \(\bar{X}^2\) itself is not an unbiased estimator of \(\theta^2\)…but we can see that \(\bar{X}^2/(1/n+1)\) is. Hence \[ \hat{\theta^2}_{MVUE} = \frac{\bar{X}^2}{\left(\frac{1}{n}+1\right)} \,. \]

3.6.5 Geometric Distribution: MVUE for Number of Failures

Recall that the geometric distribution is a negative binomial distribution with \(s = 1\): \[\begin{align*} p_X(x) = \binom{x + s - 1}{x} p^s (1-p)^x ~ \rightarrow ~ p(1-p)^x \,, \end{align*}\] where \(p \in (0,1]\) and where \(E[X] = (1-p)/p\) and \(V[X] = (1-p)/p^2\). Let’s assume that we sample \(n\) iid data from this distribution. Can we define the MVUE for \(p\)? For \(1/p\)?

The likelihood function is \[\begin{align*} \mathcal{L}(p \vert \mathbf{x}) = \prod_{i=1}^n p_X(x_i \vert p) = p^n (1-p)^{\sum_{i=1}^n x_i} = 1 \cdot g(p,\mathbf{x}) \,. \end{align*}\] We identify a sufficient statistic as \(Y = \sum_{i=1}^n X_i\); the expected value of \(Y\) is \[\begin{align*} E[Y] = E\left[\sum_{i=1}^n X_i\right] = \sum_{i=1}^n E[X_i] = \sum_{i=1}^n \frac{1-p}{p} = n\left(\frac{1}{p}-1\right) \,. \end{align*}\] We cannot “debias” this expression to find the MVUE for \(p\), but we can do it to find the MVUE for the number of failures: \[\begin{align*} E\left[\frac{Y}{n}\right] = \frac{1}{p} - 1\,. \end{align*}\] As there is no invariance property for the MVUE, we cannot use this expression to write down the MVUE for \(p\) itself.

3.7 Confidence Intervals

What to take away from this section:

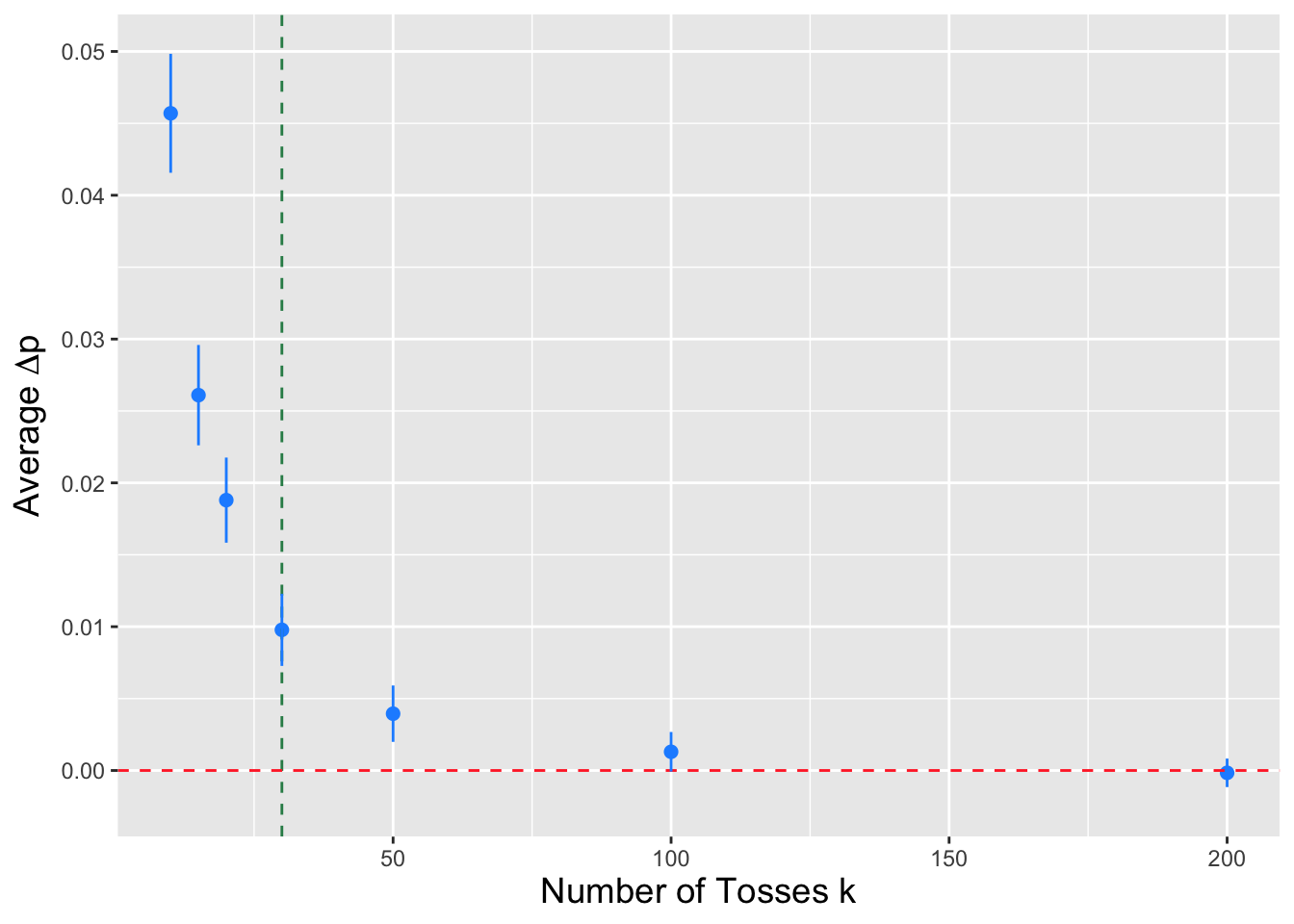

Given \(n\) iid data sampled according to a normal distribution, we can utilize numerical root-finding frameworks to construct \(100(1-\alpha)\)-percent confidence intervals for the binomial success proportion \(p\), as well as the parameters of related discrete distributions like the negative binomial.

Since the sampling distributions are discrete distributions, we must sometimes make discreteness corrections (such as subtracting 1 from the observed statistic value) when calling numerical root-finding functions.

And because the sampling distributions are discrete distributions, the interval coverage (i.e., the proportion of intervals that overlap the true value of \(\theta\)) will be \(\geq 1-\alpha\), with the equality achieved as the sample size (or, e.g., the number of trials) goes to infinity.

Recall: a confidence interval is a random interval \([\hat{\theta}_L,\hat{\theta}_U]\) that, when we work with continuous sampling distributions, overlaps (or covers) the true value \(\theta\) with probability \[ P\left( \hat{\theta}_L \leq \theta \leq \hat{\theta}_U \right) = 1 - \alpha \,, \] where \(1 - \alpha\) is the confidence coefficient. Note that this is a long-term probabilistic statement that is not to be applied to any one numerically evaluated interval: an evaluated interval either overlaps the true value, or it does not (and thus we cannot say there is a \(100(1-\alpha)\)-percent chance that \(\theta\) lies within the interval). We determine \(\hat{\theta}\) by solving the following equation: \[ F_Y(y_{\rm obs} \vert \theta) - q = 0 \,, \] where \(F_Y(\cdot)\) is the cumulative distribution function for the statistic \(Y\), \(y_{\rm obs}\) is the observed value of the statistic, and \(q\) is an appropriate quantile value that is determined using the confidence interval reference table introduced in section 16 of Chapter 1.

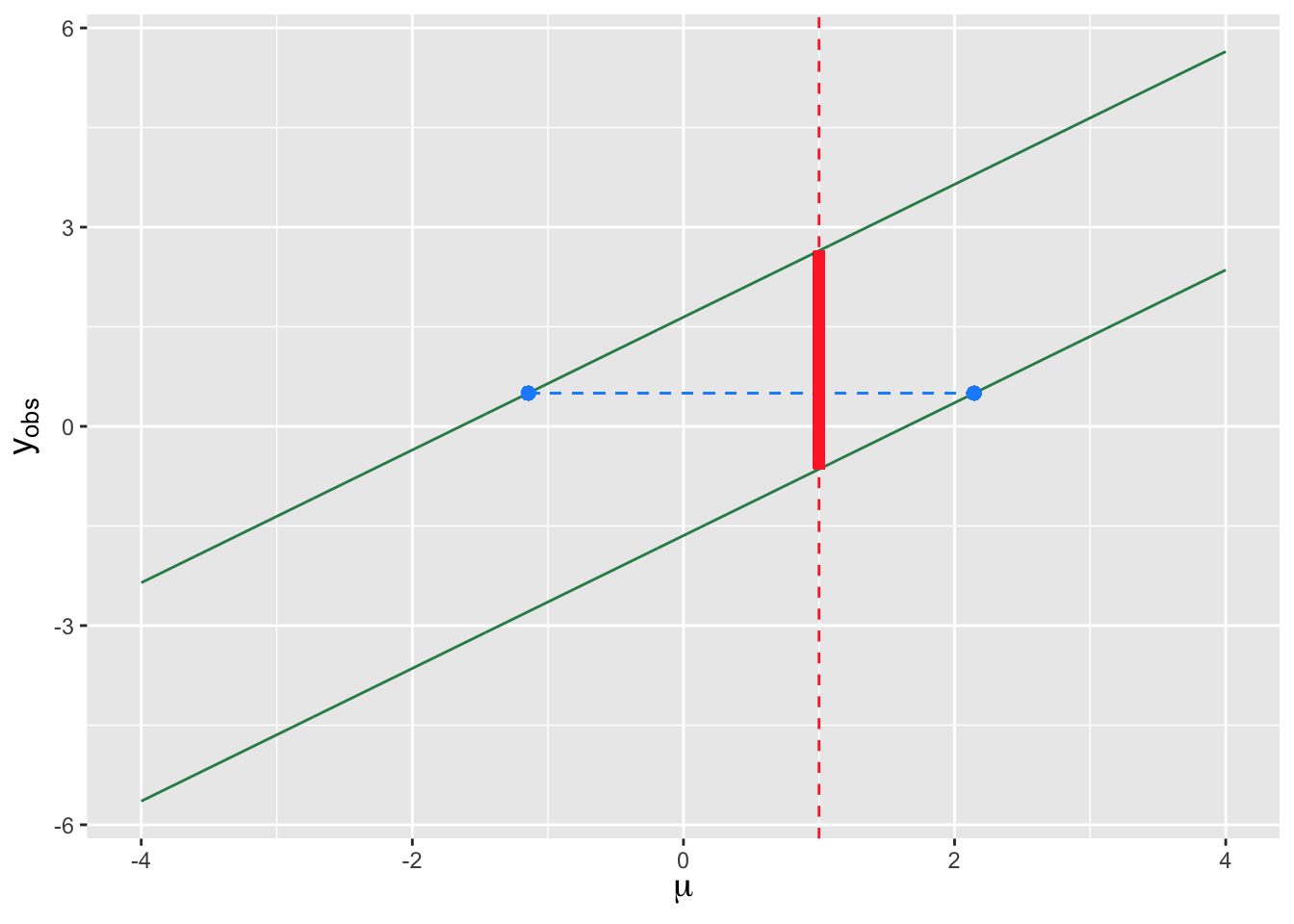

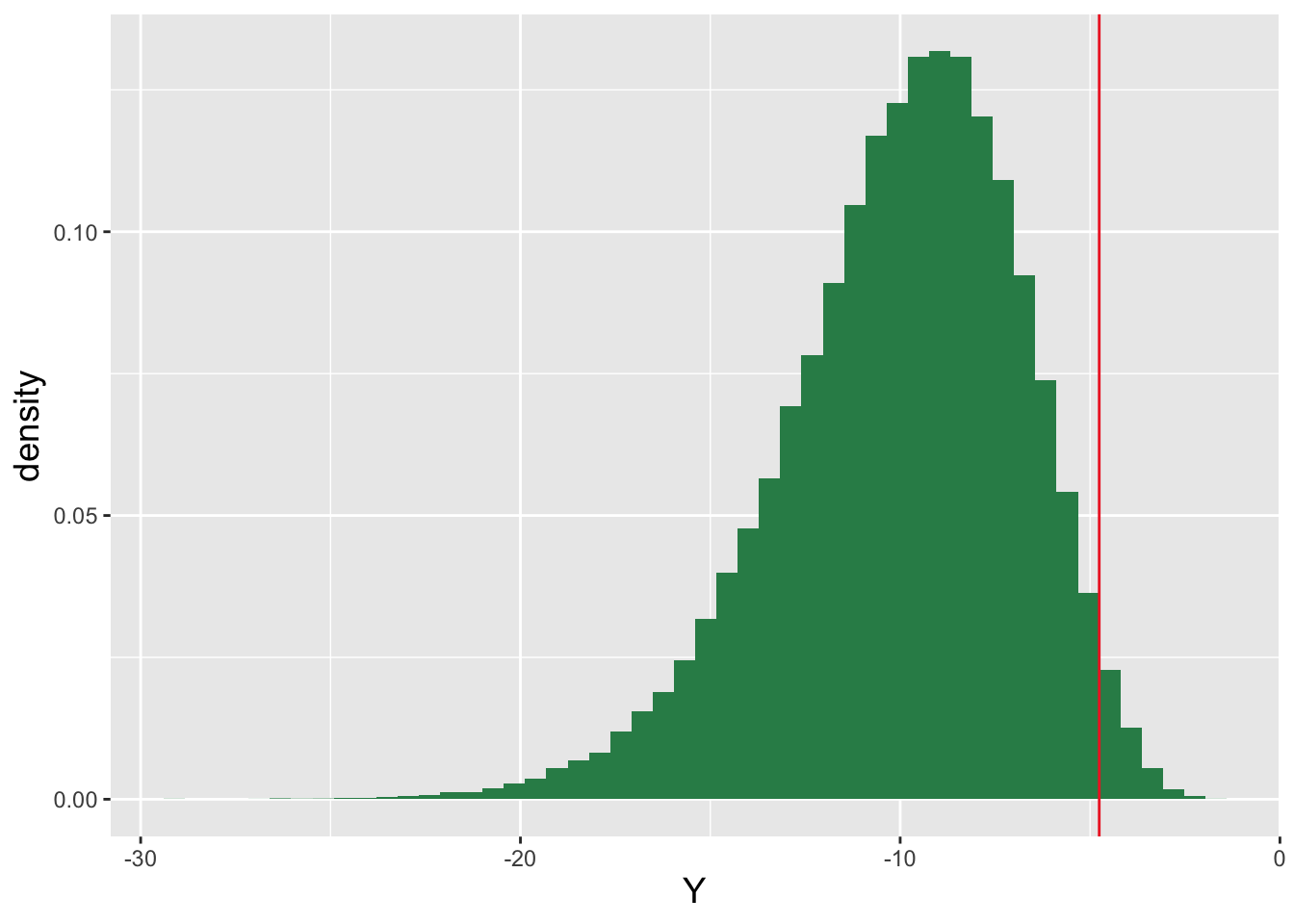

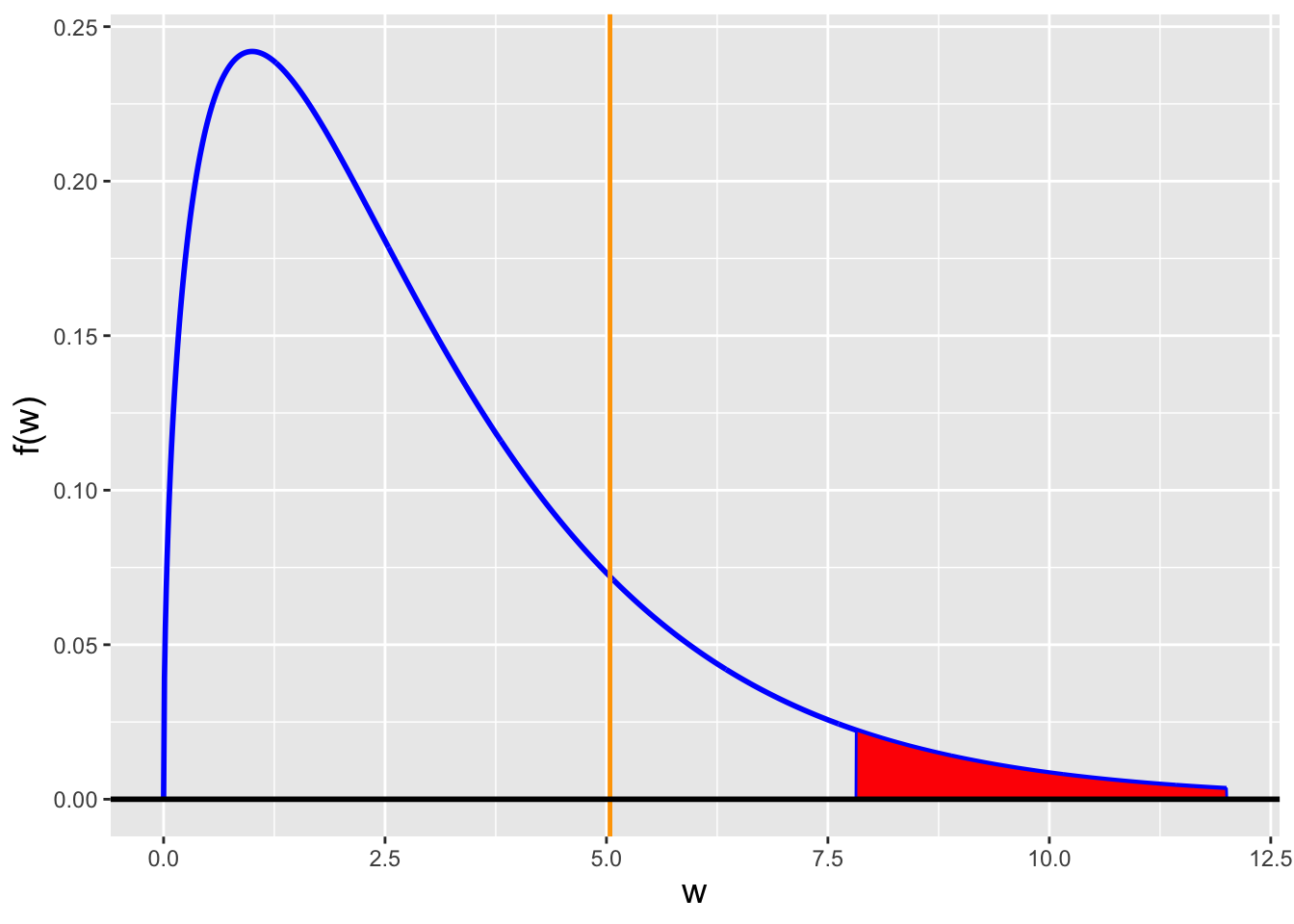

A concept that we have not explicitly discussed yet is the “duality” between confidence intervals and hypothesis tests: as they are mathematically related, one can, in theory, “invert” hypothesis tests to derive confidence intervals and vice-versa. We illustrate this duality in Figure 3.10, in which we assume that the distribution from which we are to sample data is a normal distribution with mean \(\mu\) and known variance \(\sigma^2\). The parallel green lines in the figure represent lower and upper rejection region boundaries as a function of (the null hypothesis value) \(\mu\). Let’s say that we pick a specific value of \(\mu\), say \(\mu = \mu_o = 1\), and draw a vertical line at that coordinate. The part of that line that lies between the parallel green lines (indicated in solid red) indicates the range of observed statistic values \(y_{\rm obs}\) for which we would fail to reject the null, and the parts above and below the parallel lines (shown in dashed red) would indicate \(y_{\rm obs}\) values for which we would reject the null. (These are the acceptance and rejection regions, respectively; “acceptance region” is a term that we have not used up until now, but it simply denotes the range of statistic values for which we would fail to reject a null hypothesis, when it is indeed correct.) Confidence intervals, on the other hand, are ranges of values of \(\mu\) for which a given value of \(y_{\rm obs}\) lies in the acceptance region. (For instance, the dashed horizontal blue line in the figure shows the range of values associated with a two-sided confidence interval for \(\mu\) given \(y_{\rm obs} = 0.5\).) We can see that for a given value of \(\mu\), if we were to sample a value of \(y_{\rm obs}\) between the two green lines (with probability \(1-\alpha\)), then the associated confidence interval will overlap \(\mu\), and if we sample a value of \(y_{\rm obs}\) outside the green lines (with probability \(\alpha\)), the confidence interval will not overlap \(\mu\). The confidence interval coverage is thus exactly \(100(1-\alpha)\) percent.

Figure 3.10: An illustration of the relationship between hypothesis testing and confidence interval estimation for a continuous sampling distribution (here, a normal distribution with known variance). The two parallel green lines define rejection-region boundaries for a two-sided test with null hypothesis parameter value \(\mu\). Assume \(\mu = 1\): the dashed red lines indicate the values of \(y_{\rm obs}\) in the rejection region, while the solid red line indicates the values of \(y_{\rm obs}\) in the acceptance region (where we fail to reject the null). The horizontal dashed blue line shows the confidence interval constructed for a given value of \(y_{\rm obs}\). Given \(\mu\), the probability of sampling a statistic in the acceptance region is exactly \(1-\alpha\), and thus the coverage of confidence intervals will also be exactly \(1-\alpha\).

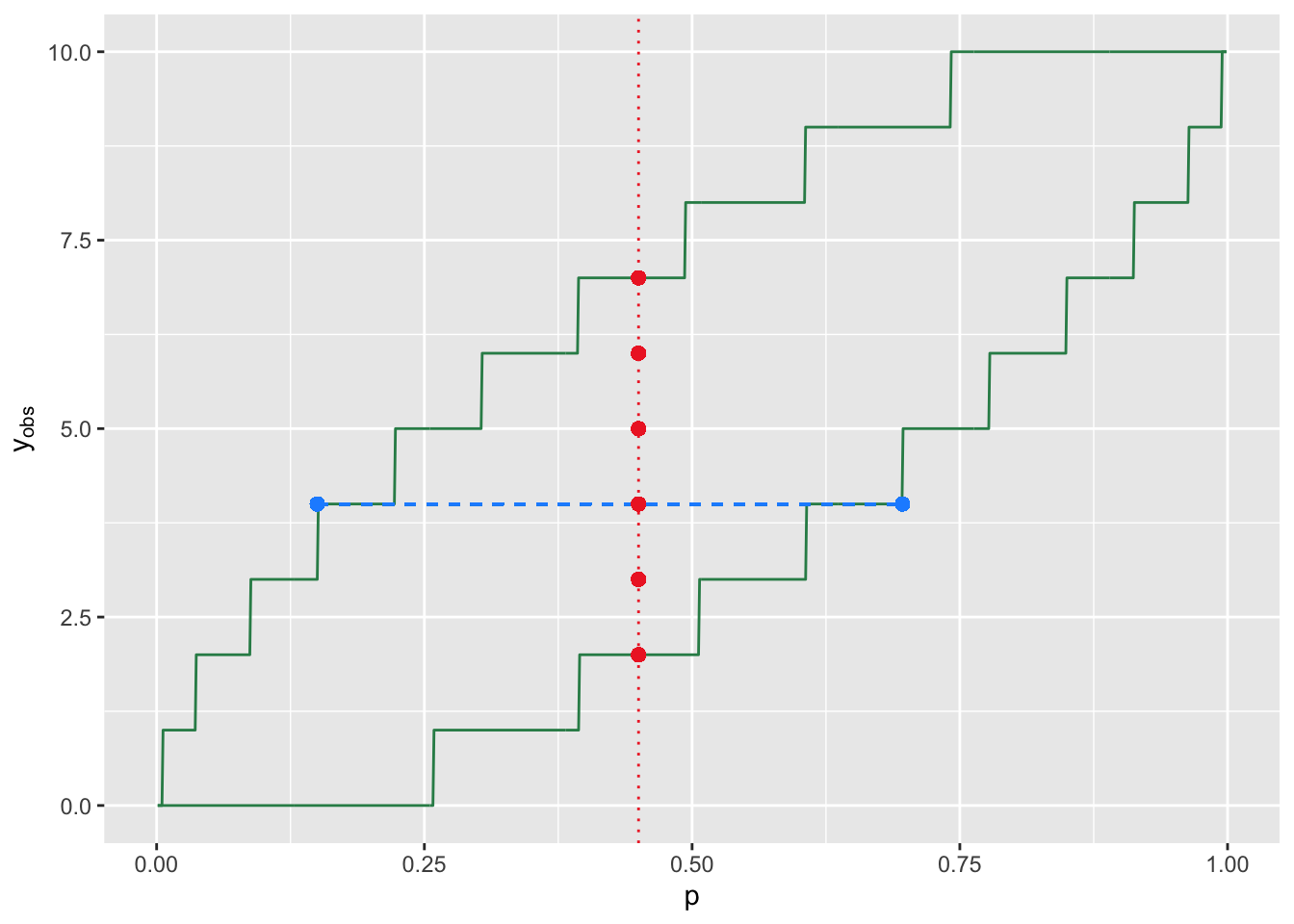

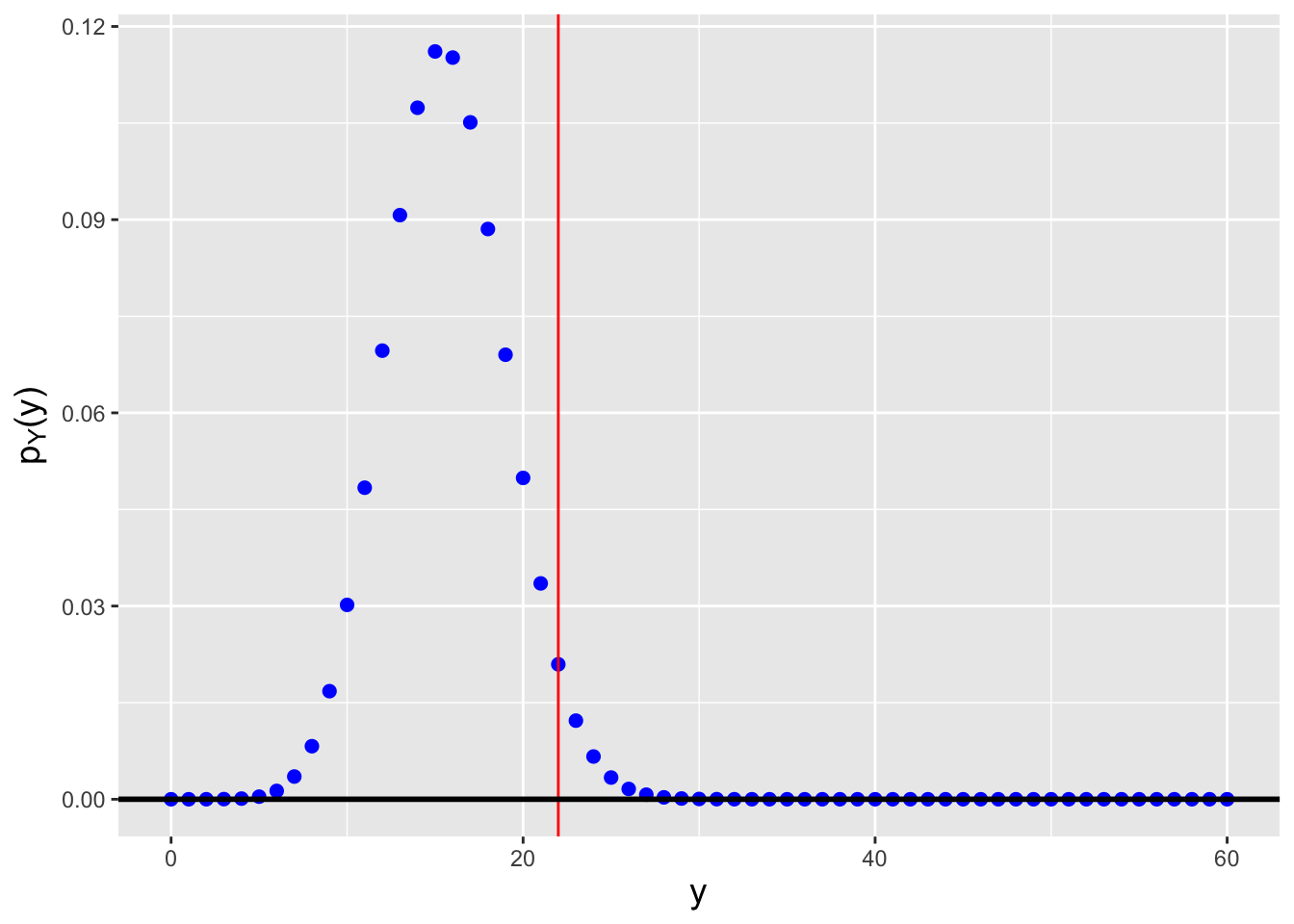

When we work with discrete sampling distributions, the overall picture is similar, but we sometimes need to make what we will dub “discreteness corrections” so that the codes we use generate the correct results. Figure 3.11 is an analogue to Figure 3.10 in which we illustrate the relationship between confidence intervals and hypothesis tests for a situation in which we conduct \(k = 10\) binomial trials. Because the statistic is discretely valued, the acceptance-region boundaries are step functions, but this is not the only change introduced with discrete distributions:

In the continuous case, the probability of sampling a datum that lies within the acceptance region for a given value of \(\theta\) is exactly \(1 - \alpha\). In the discrete case, the probability is \(\geq 1 - \alpha\). (And it will rarely, if ever, be the case that the probability masses within the acceptance region will sum to exactly \(1 - \alpha\)…although the sum will converge towards \(1 - \alpha\) as \(nk \rightarrow \infty\). This motivates our use of the word “level,” as in “we conduct a level-\(\alpha\) test,” rather than “size.” For a size-\(\alpha\) test, the probability of sampling a statistic value in the acceptance region is, by definition, exactly \(1-\alpha\), while for a level-\(\alpha\) test, it is \(\geq 1 - \alpha\).)

Because the probability of sampling a datum in the acceptance region is equal to the confidence interval coverage, the coverage will also be \(\geq 1 - \alpha\).

Figure 3.11: An illustration of the relationship between hypothesis testing and confidence interval estimation for a discrete sampling distribution (here, a binomial distribution with \(k=10\)). The two green lines define rejection-region boundaries for a two-sided test with null hypothesis parameter value \(p\). Assume \(p = 0.45\): the red dots indicates the values of \(y_{\rm obs}\) in the acceptance region (where we fail to reject the null). The horizontal dashed blue line shows the confidence interval constructed for a given value of \(y_{\rm obs}\). Given \(p\), the probability of sampling a statistic in the acceptance region is generally greater than \(1-\alpha\), and thus the coverage of confidence intervals, which has the same value, will also be generally greater than \(1-\alpha\).

The blue dashed line in Figure 3.11 is an example of

a confidence interval (specifically for \(y_{\rm obs} = 4\)). In order to

construct an interval like this, we would use a uniroot()-style code

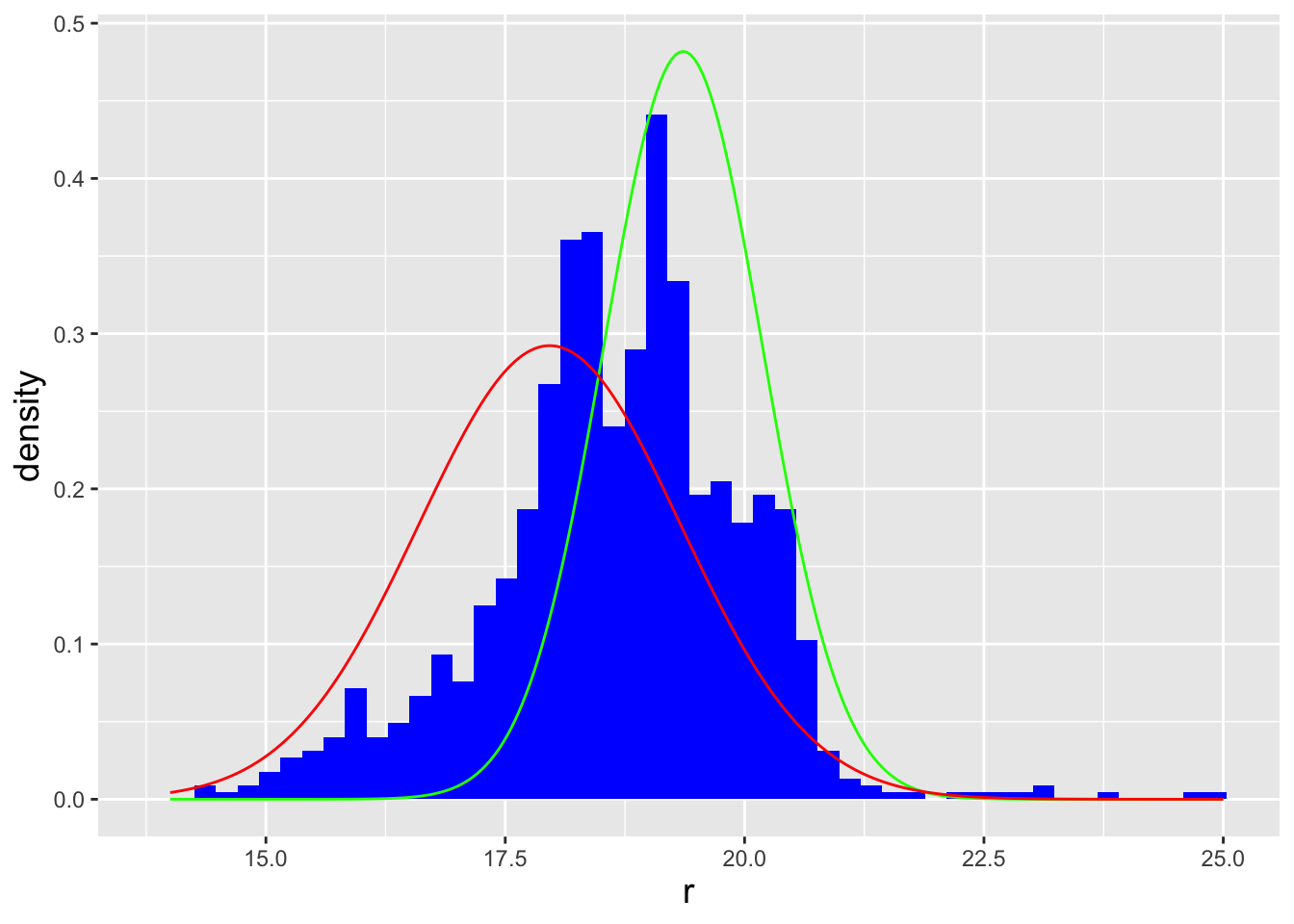

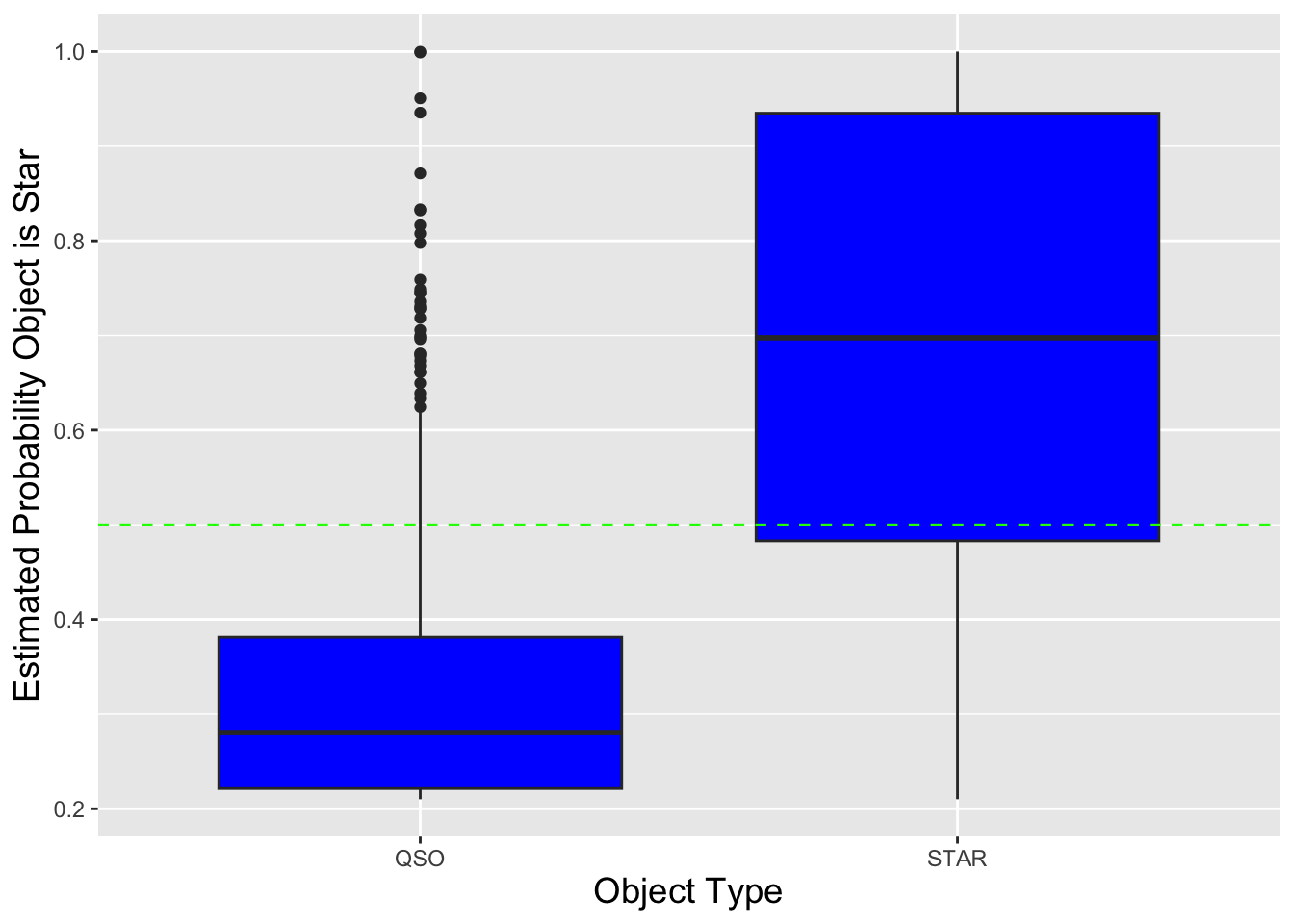

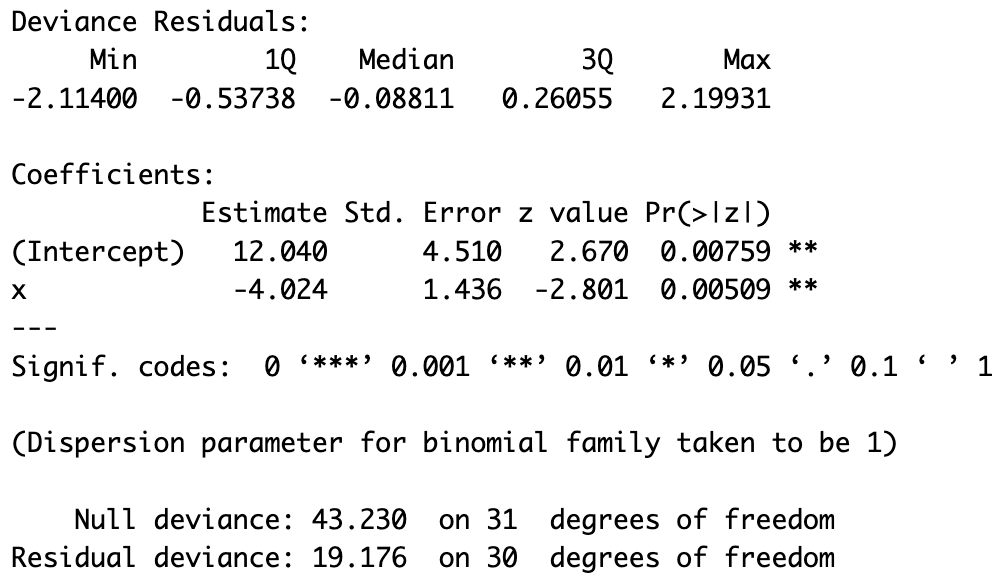

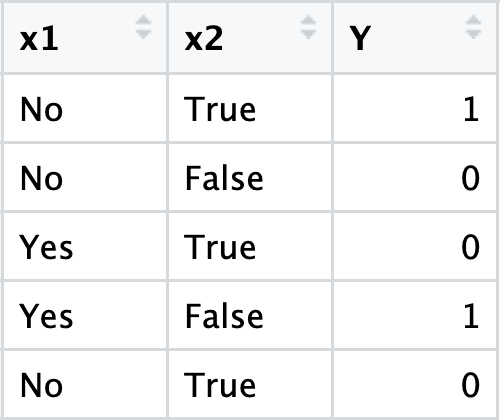

as we have before, but with the following changes.