4 The Poisson (and Related) Distributions

In this chapter, we illustrate probability and statistical inference concepts utilizing the Poisson distribution, which governs the data that arise from Poisson processes: spatio-temporal experiments whose outcomes are counts.

One of the challenges of a Prussian soldier’s life in the 19th century was avoiding being kicked by horses. This was no trivial matter: over one 20-year period, 122 soldiers died from horse-kick-related injuries. The data below are from Fisher (1925), and they are a subset of the original data that were compiled earlier by the statistician Ladislaus Bortkiewicz.

| \(x\) | \(N(x)\) |

|---|---|

| 0 | 109 |

| 1 | 65 |

| 2 | 22 |

| 3 | 3 |

| 4 | 1 |

\(x\) is the number of deaths observed in any one Prussian army corp in any one year, and \(N(x)\) is the number of corps-years in which \(x\) deaths were observed. (\(N(x)\) sums to \(20 \cdot 10 = 200\), reflecting that the compiled data represent 10 army corps observed over a 20-year period.)

These data are an example of a process, i.e., a sequence of observations, but we can immediately see that unlike the case with coin flips, this process is not a Bernoulli process. That’s because the number of possible outcomes is greater than two (0 and 1); in fact, the number of possible outcomes is countably infinite, so we could not even call this a multinomial process. Well, the reader might say, we could simply discretize the data more finely, so that the number of possible outcomes is at least finite (multinomial) or better yet falls to two (Bernoulli). Let’s get monthly data, or daily data, or hourly data. However, there is no time period \(\Delta t\) for which the number of possible outcomes is limited to some maximum value: in theory, an infinite number of soldiers could die in the same second, or even the same nanosecond.

But let’s keep playing with this idea of making the time periods smaller and smaller. Let the number of time periods into which we divide our observation interval, \(k\), go to infinity such that the probability of observing a horse-kick death \(p\) goes to zero, but in such a way that \(kp \rightarrow \lambda\), where \(\lambda\) is a constant. Under these conditions, the binomial distribution transforms into the Poisson distribution, which Bortkiewicz dubbed the law of small numbers. This distribution gives the probability of observing a particular number of events (“counts”) in a fixed interval of space and/or time, given some expected number of events in that interval, and is the one that is most commonly applied in practice to model counts-based data.

4.1 Probability Mass Function

What to take away from this section:

The Poisson distribution is a discrete distribution with a probability mass function whose shape and location along the real-number line is governed by a single parameter: \(\lambda\), the number of counts we expect to record, on average, over the course of an experiment.

As was the case for the binomial probability mass function, the shape of the Poisson pmf tends to that of the normal as \(\lambda\) gets larger, which has historically led analysts to utilize a normal approximation when making statistical inferences…but with computers, we can now easily make exact ones.

Recall: a probability mass function is one way to represent a discrete probability distribution, and it has the properties (a) \(0 \leq p_X(x) \leq 1\) and (b) \(\sum_x p_X(x) = 1\), where the sum is over all values of \(x\) in the distribution’s domain.

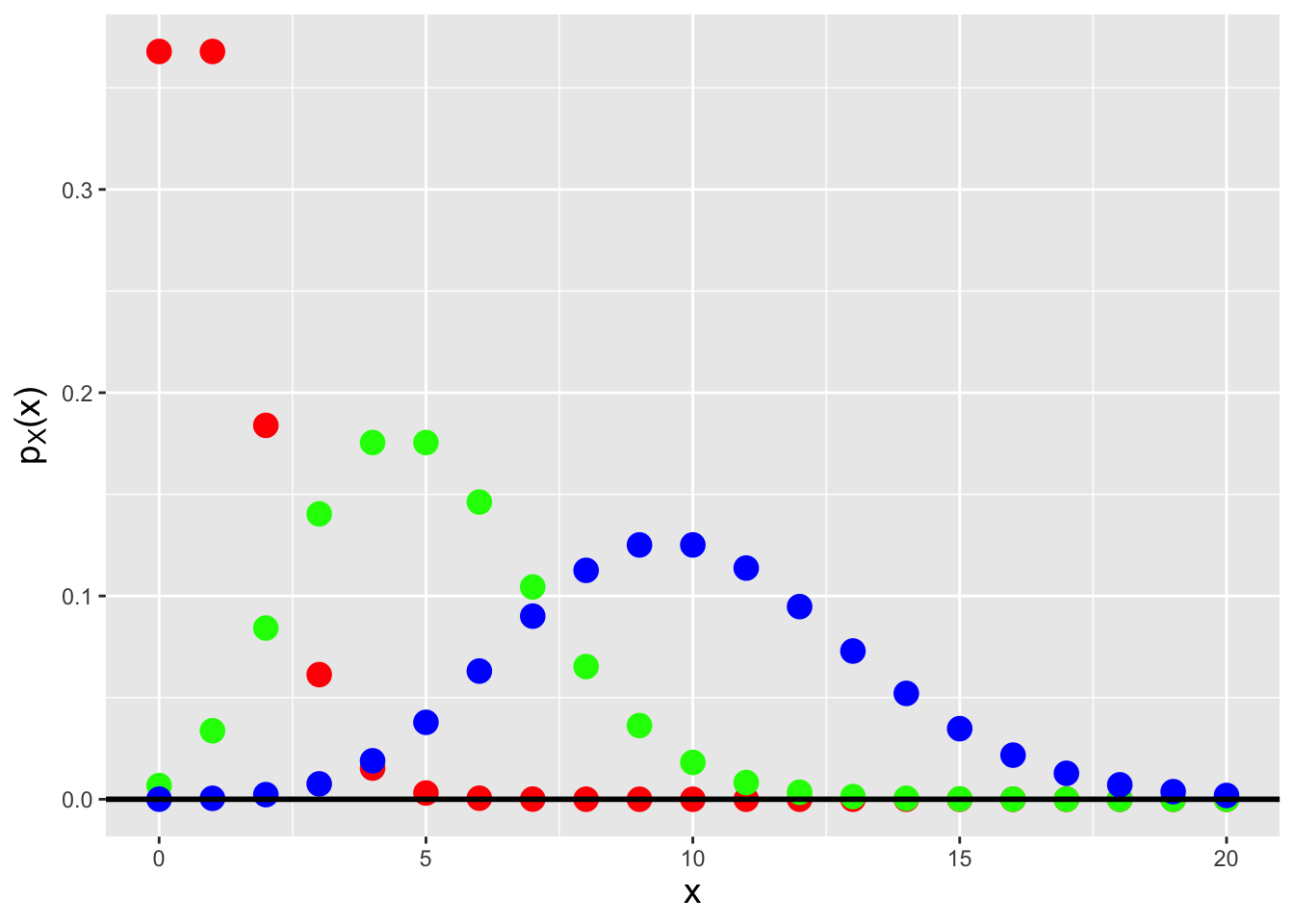

To derive the Poisson probability mass function, we start by writing down the binomial distribution, setting \(p\) to \(\lambda/k\), where \(\lambda\) is an arbitrarily valued positive constant, and letting \(k\) go to infinity: \[\begin{align*} P(X=x) &= \binom{k}{x} p^x (1-p)^{k-x} = \frac{k!}{x!(k-x)!} \left(\frac{\lambda}{k}\right)^x \left(1-\frac{\lambda}{k}\right)^{k-x} \\ &= \frac{k!}{(k-x)! k^x} \frac{\lambda^x}{x!} \left(1-\frac{\lambda}{k}\right)^{k-x} \\ &= \left(\frac{k}{k}\right) \left(\frac{k-1}{k}\right) \cdots \left(\frac{k-x+1}{k}\right) \left(\frac{\lambda^x}{x!}\right) \left(1-\frac{\lambda}{k}\right)^{k-x} \\ &\rightarrow \frac{\lambda^x}{x!} \left(1-\frac{\lambda}{k}\right)^{k-x} ~~~\mbox{as}~~~ k \rightarrow \infty \\ &\rightarrow \frac{\lambda^x}{x!} \left(1-\frac{\lambda}{k}\right)^k ~~~~~~~~\mbox{as}~~~ k \rightarrow \infty \,. \end{align*}\] We now concentrate on the parenthetical term above. Given that \[ \lim_{k \rightarrow \infty} \left(1 - \frac{1}{k}\right)^k = e^{-1} \,, \] we can state that \[ \lim_{k \rightarrow \infty} \left(1 - \frac{1}{k/\lambda}\right)^{k/\lambda} = e^{-1} \implies \lim_{k \rightarrow \infty} \left(1 - \frac{1}{k/\lambda}\right)^k = e^{-k} \,. \] We can now write down the probability mass function for a Poisson random variable (see Figure 4.1): \[ P(X=x) = p_X(x) = \frac{\lambda^x}{x!} e^{-\lambda} \,, \] where \(x \in \{0,1,\ldots,\infty\}\) and \(\lambda > 0\). To indicate that we have sampled a datum from a Poisson distribution, we write \(X \sim\) Poisson(\(\lambda\)). The expected value and variance of the Poisson distribution are \(E[X] = \lambda\) and \(V[X] = \lambda\), respectively; the former is derived below in an example. As we will show in another example below, a Poisson random variable converges in distribution to a normal random variable as \(\lambda \rightarrow \infty\), a result which affects how Poisson-distributed data have historically been treated in, e.g., hypothesis test settings.

Figure 4.1: Poisson probability mass functions for \(\lambda = 1\) (red squares), 5 (green triangles), and 10 (blue circles). Note how the shape of the pmf tends more and more towards that of a normal distribution as \(\lambda\) gets larger.

4.1.1 Poisson Random Variable: Expected Value

Recall: the expected value of a discretely distributed random variable is \[ E[X] = \sum_x x p_X(x) \,, \] where the sum is over all values of \(x\) within the domain of the pmf p_X(x). The expected value is equivalent to a weighted average, with the weight for each possible value of \(x\) given by \(p_X(x)\).

For a Poisson distribution, the expected value is \[ E[X] = \sum_{x=0}^\infty x \frac{\lambda^x}{x!} e^{-\lambda} = \sum_{x=1}^\infty x \frac{\lambda^x}{x!} e^{-\lambda} = \sum_{x=1}^\infty \frac{\lambda^x}{(x-1)!} e^{-\lambda} \,. \] The trick here is to move constants into or out of the summation so that the summation becomes one of a probability mass function over its entire domain. Here, we move \(\lambda\) out of the summation, and make the substitution \(y = x-1\); the summand then takes on the form of a Poisson pmf, summed over its entire domain: \[ E[X] = \lambda \sum_{x=1}^\infty \frac{\lambda^{x-1}}{(x-1)!} e^{-\lambda} = \lambda \sum_{y=0}^\infty \frac{\lambda^{y}}{y!} e^{-\lambda} = \lambda \,. \]

Recall: the variance of a discretely distributed random variable is \[ V[X] = \sum_x (x-\mu)^2 p_X(x) = E[X^2] - (E[X])^2\,, \] where the summation is over all values of \(x\) within the domain of the pmf \(p_X(x)\). The variance represents the square of the “width” of a probability mass function, where by “width” we mean the range of values of \(x\) for which \(p_X(x)\) is effectively non-zero.

A similar calculation to the one above, but that starts with the derivation of the factorial moment \(E[X(X-1)]\), eventually yields the Poisson variance, which is also \(\lambda\).

4.1.2 Poisson Distribution: Normal Approximation

As the expected number of counts, \(\lambda\), increases, a Poisson random variable will converge in distribution to a normal random variable. Here, we show mathematically why this is the case.

The Poisson probability mass function is \[ p_X(x \vert \lambda) = \frac{\lambda^x}{x!} e^{-\lambda} \,. \] We note that the normal probability density function does not have a factorial in it, so we will start by using Stirling’s approximation: \[ x! \approx \sqrt{2 \pi x} x^x e^{-x} \,. \] This approximation has an error of 1.65% for \(x = 5\) and 0.83% for \(x = 10\), with the percentage error continuing to shrink as \(x \rightarrow \infty\). With this approximation, we can write that \[ p_X(x \vert \lambda) \approx \frac{\lambda^x}{\sqrt{2 \pi x}} x^{-x} e^{x-\lambda} \,. \] This still does not quite look like a normal pdf. So there is more work to do. We compute the logarithm of this quantity: \[ \log p_X(x \vert \lambda) \approx x \log \lambda - \frac{1}{2} \log (2 \pi x) - x \log x + x - \lambda = -x ( \log x - \log \lambda ) - \frac{1}{2} \log (2 \pi x) + x - \lambda \,, \] and then look at \(\log x - \log \lambda\): \[ \log x - \log \lambda = \log \frac{x}{\lambda} = \log \left( 1 - \frac{\lambda-x}{\lambda}\right) \approx -\frac{\delta}{\sqrt{\lambda}} - \frac{\delta^2}{2 \lambda} - \cdots \,. \] Here, \(\delta = (\lambda - x)/\sqrt{\lambda}\). Plugging this result into the expression for \(\log p_X(x \vert \lambda)\), we find that \[ \log p_X(x \vert \lambda) \approx -\frac{1}{2} \log (2 \pi x) + x \left( \frac{\delta}{\sqrt{\lambda}} + \frac{\delta^2}{2\lambda} \right) - \delta \sqrt{\lambda} \,. \] The next step is plug in \(x = \lambda - \sqrt{\lambda}\delta\): \[\begin{align*} \log p_X(x \vert \lambda) &\approx -\frac{1}{2} \log (2 \pi x) + (\lambda - \sqrt{\lambda}\delta)\left( \frac{\delta}{\sqrt{\lambda}} + \frac{\delta^2}{2\lambda} \right) - \delta \sqrt{\lambda} \\ &= -\frac{1}{2} \log (2 \pi x) + \sqrt{\lambda}\delta - \delta^2 + \frac{\delta^2}{2} - \frac{\delta^3}{2 \lambda^{3/2}} - \sqrt{\lambda}\delta \\ &\approx -\frac{1}{2} \log (2 \pi x) - \frac{\delta^2}{2} \,, \end{align*}\] where we drop the \(\mathcal{O}(\delta^3)\) term. When we exponentiate both sides, the final result is \[ p_X(x \vert \lambda) \approx \frac{1}{\sqrt{2 \pi x}} \exp\left( -\frac{\delta^2}{2} \right) = \frac{1}{\sqrt{2 \pi x}} \exp\left( -\frac{(x-\lambda)^2}{2\sqrt{\lambda}} \right) \,. \] This has the (approximate) form of a normal pdf, at least for values \(x \approx \lambda\). So, in the end, the Poisson probability mass function \(p_X(x \vert \lambda)\) has approximately the same shape as the normal probability density function \(f_X(x \vert \mu=\lambda,\sigma^2=\lambda)\) for \(x \gg 1\) and \(x \approx \lambda\).

4.1.3 Poisson Distribution: Exponential Family

Recall: the exponential family of distributions is the set of distributions whose probability mass or density functions can be expressed as \[ h(x) \exp\left[\left(\sum_{i=1}^p \eta_i(\boldsymbol{\theta}) T_i(x)\right) - A(\boldsymbol{\theta})\right] \,, \] where \(\boldsymbol{\theta}\) is a vector of parameters (e.g., \(\boldsymbol{\theta} = \{\mu,\sigma^2\}\) for a normal distribution). Note that none of the parameters can be a domain-specifying parameter.

Is the Poisson distribution a member of the larger exponential family of distributions? We will start our answer to this question by noting that \[\begin{align*} \lambda^x = \exp\left(\log \lambda^x\right) = \exp\left(x \log \lambda\right) \,. \end{align*}\] Thus \[\begin{align*} p_X(x) = \frac{1}{x!} \exp\left(x \log \lambda - \lambda\right) \,. \end{align*}\] and \[\begin{align*} h(x) &= \frac{1}{x!} ~~~~~~ \eta(\lambda) = \log \lambda ~~~~~~ T(x) = x ~~~~~~ A(\lambda) = \lambda \,. \end{align*}\] The Poisson distribution is indeed a member of the exponential family.

4.2 Poisson Distribution: Cumulative Distribution Function

What to take away from this section:

- The cumulative distribution function, or cdf, for a Poisson distribution is a step function that can be difficult to work with analytically (which historically motivated the application of the normal approximation, but which now leads us to use coding for exact inference).

Recall: the cumulative distribution function, or cdf, is another means by which to encapsulate information about a probability distribution. For a discrete distribution, it is defined as \(F_X(x) = \sum_{y\leq x} p_Y(y)\), and it is defined for all values \(x \in (-\infty,\infty)\), with \(F_X(-\infty) = 0\) and \(F_X(\infty) = 1\).

For the Poisson distribution, the cdf is

\[

F_X(x) = \sum_{y=0}^{\lfloor x \rfloor} p_Y(y) = \sum_{y=0}^{\lfloor x \rfloor} \frac{\lambda^y}{y!} \exp(-\lambda) = \frac{\Gamma(\lfloor x+1 \rfloor,\lambda)}{\lfloor x \rfloor !} \,,

\]

where \(\lfloor x \rfloor\) denotes the

floor function, which returns the

largest integer that is less than or

equal to \(x\) (e.g., if \(x\) = 8.33, \(\lfloor x \rfloor\) = 8),

and where \(\Gamma(\cdot,\cdot)\) is the upper incomplete gamma function

\[

\Gamma(\lfloor x+1 \rfloor,\lambda) = \int_{\lambda}^\infty u^{\lfloor x \rfloor} e^{-u} du \,.

\]

(An example of an R function which computes the upper incomplete

gamma function is incgam() in the pracma package.)

As we are dealing with a probability mass function, the

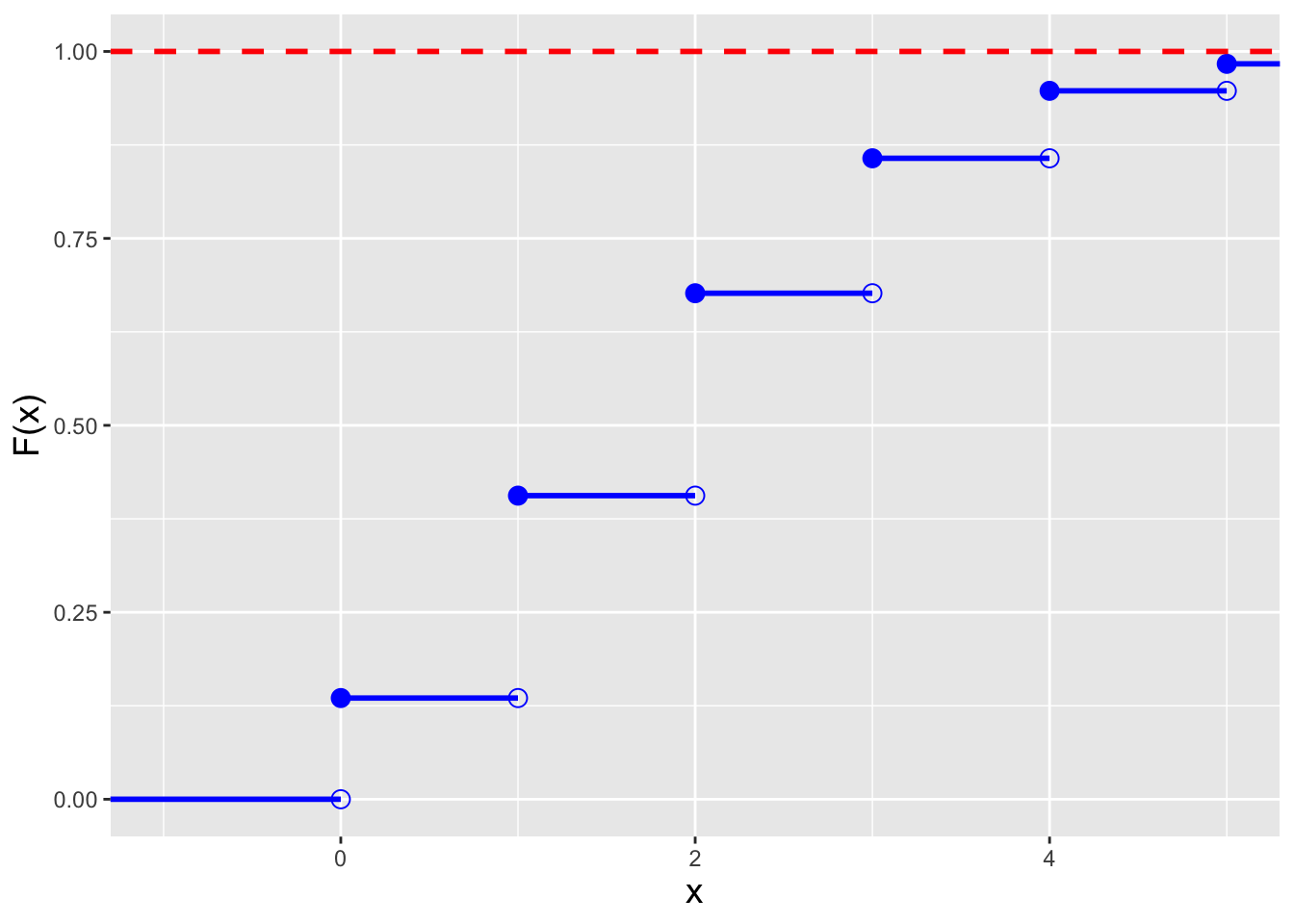

cdf is a step function, as illustrated in the left panel of

Figure 4.2.

Recall that because of the step-function nature of the cdf,

the form of inequalities in a probabilistic statement matter: e.g.,

\(P(X < x)\) and \(P(X \leq x)\) will not be the same

if \(x\) is zero or a positive integer.

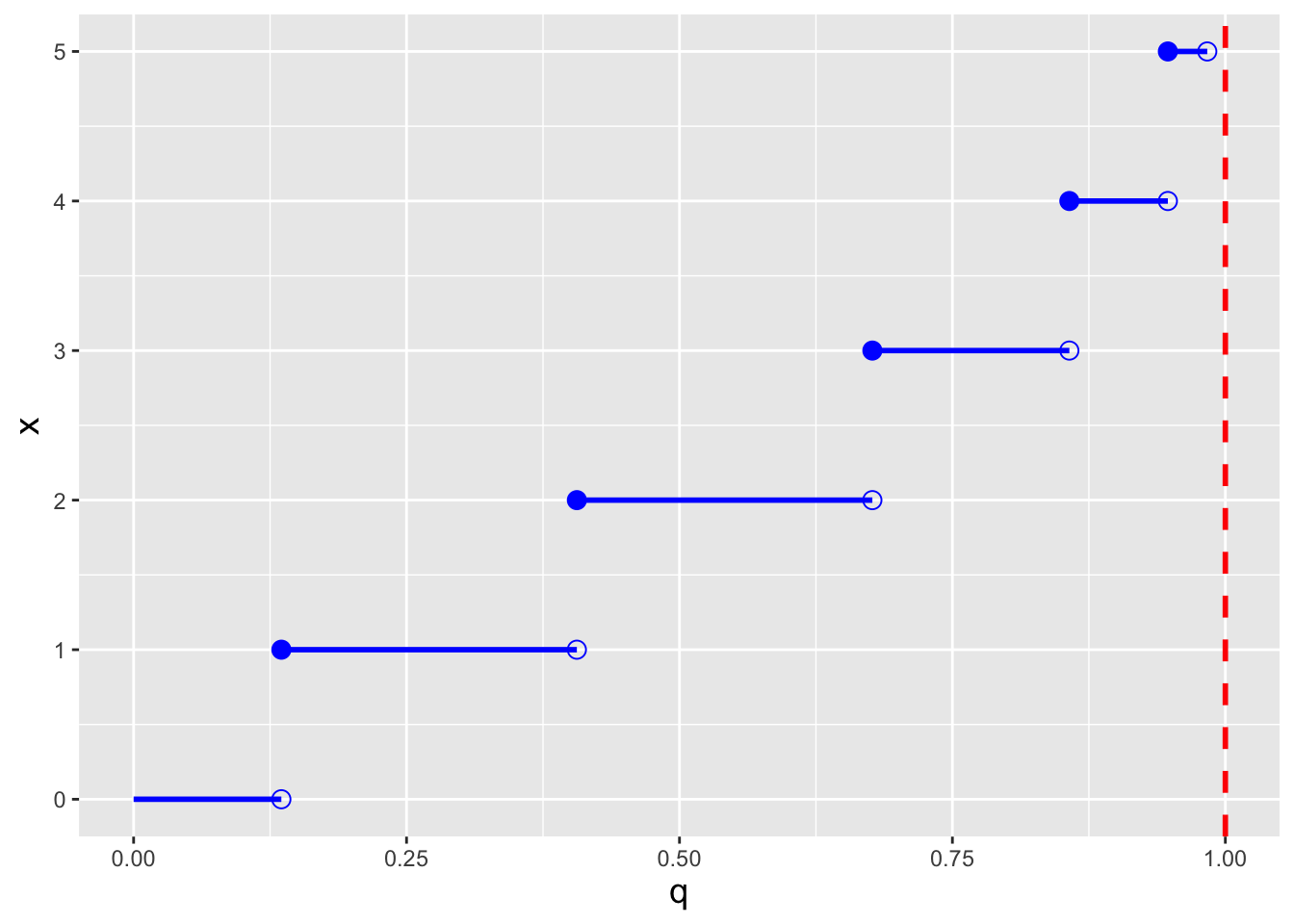

Recall: an inverse cdf function \(x = F_X^{-1}(q)\) takes as input a distribution quantile \(q \in [0,1]\) and returns the value of \(x\). A discrete distribution has no unique inverse cdf; it is convention to utilize the generalized inverse cdf, \[ x = \mbox{inf}\{x : F_X(x) \geq q\} \,, \] where “inf” indicates that the function is to return the smallest value of \(x\) such that \(F_X(x) \geq q\).

In the right panel of Figure 4.2, we display the inverse cdf for the same distribution used to generate the figure in the left panel (\(\lambda = 2\)). Like the cdf, the inverse cdf for a discrete distribution is a step function.

Figure 4.2: Illustration of the cumulative distribution function \(F_X(x)\) (left) and inverse cumulative distribution function \(F_X^{-1}(q)\) (right) for a Poisson distribution with \(\lambda=2\). Note that because the domain of the Poisson distribution is countably infinite, we do observe any values of \(x\) for which \(F_X(x) = 1\).

4.2.1 Computing Probabilities

- If \(X \sim\) Poisson(5), which is \(P(4 \leq X < 6)\)?

We first note that due to the form of the inequality, we do not include \(X=6\) in the computation. Thus \(P(4 \leq X < 6) = p_X(4) + p_X(5)\), which equals \[ \frac{5^4}{4!}e^{-5} + \frac{5^5}{5!}e^{-5} = \frac{5^4}{4!}e^{-5} \left( 1 + \frac{5}{5} \right) = 2\frac{5^4}{4!}e^{-5} = 0.351\,. \] If we utilize

R:

## [1] 0.351Note that we can take advantage of

R’s vectorization capabilities by writing the above as

## [1] 0.351This approach is far more convenient than writing out a string of calls to

dpois(), particularly when the number of values of \(x\) to sum over becomes large. We can also utilize cdf functions here: \(P(4 \leq X < 6) = P(X < 6) - P(X < 4) = P(X \leq 5) - P(X \leq 3) = F_X(5) - F_X(3)\), which inRis computed as follows:

## [1] 0.351However, summing pmf values is the preferred approach, as this approach makes it easier to properly take into account the form of any inequalities in a probability statement.

- If \(X \sim\) Poisson(5), what is the value of \(a\) such that \(P(X \leq a) = 0.9\)?

First, we set up the inverse cdf formula: \[ P(X \leq a) = F_X(a) = 0.9 ~~~ \Rightarrow ~~~ a = F_X^{-1}(0.9) \] Note that we do not do anything differently here than we would have done in a continuous distribution setting…and we can proceed directly to

Rbecause it utilizes the generalized inverse cdf algorithm.

## [1] 84.3 Poisson-Related Distributions

What to take away from this section:

There are several distributions related to the Poisson distribution that are often seen in data analyses…

the zero-inflated Poisson distribution, a model that takes into account the fact that a data-generating process might include observing a heightened number of zero-count outcomes;

the zero-truncated Poisson distribution, a model that takes into account the fact that a data-generating process might be such that it is impossible to observe a zero-count outcomes; and

the gamma distribution, a continuous semi-infinite distribution that one can use to model the Poisson expected counts \(\lambda\).

There are several probability distributions that are directly related to the Poisson distribution and that are commonly utilized in statistical inference. Some of these are

- the zero-inflated Poisson distribution;

- the zero-trucated Poisson distribution; and

- the gamma distribution.

Below, we introduce each in turn.

Zero-Inflated Poisson Distribution. The experimental setting for this distribution is a simple one: when counting the numbers of a particular object (like an animal species) that exist at different locations and/or times, it can be the case that the object in question only exists in a subset of those locations/times…meaning that we might record a larger number of zeros than we might expect, and thus that we should not model the data with a conventional Poisson distribution. In a zero-inflated Poisson (or ZIP) process, data are assumed to be sampled according to a mixture of two distributions:

- a distribution that generates only zeros; and

- a Poisson distribution

The probability mass function for the ZIP distribution is \[ p_X(x) = \left\{ \begin{array}{ll} \theta + (1-\theta) e^{-\lambda} & x = 0 \\ (1 - \theta) \frac{\lambda^x}{x!} e^{-\lambda} & x \in \{1,2,\ldots\} \end{array} \right. \,, \] where \(\lambda > 0\) and \(\theta \in [0,1]\).

Zero-Truncated Poisson Distribution. In contrast to situations in which we might invoke the zero-inflated Poisson distribution, there can be experimental settings in which the data are counts but zero is not a possibility. For instance, if our data are the number of items purchased across \(n\) separate online orders, presumably none of the \(n\) values will be zero: one cannot make a purchase without at least one item in the cart!

The zero-truncated Poisson distribution is also known as the conditional Poisson distribution, and that alternative name makes sense, as we can derive the probability mass function for the ZTP distribution by computing \(P(X = x \vert X > 0)\), where \(X\) is a(n uncoditional) Poisson random variable: \[\begin{align*} p_X(x) = P(X = x \vert X > 0) &= \frac{P(X = x \cap X > 0)}{P(X > 0)} \\ &= \frac{P(X = x \cap X > 0)}{1 - P(X = 0)} \\ &= \frac{P(X = x)}{1 - P(X = 0)} \\ &= \frac{\lambda^x}{x!}e^{-\lambda}\frac{1}{1 - e^{-\lambda}} \\ &= \frac{\lambda^x}{x! (e^\lambda - 1)} \,, \end{align*}\] where \(x \in \{1,\ldots\}\) and \(\lambda > 0\).

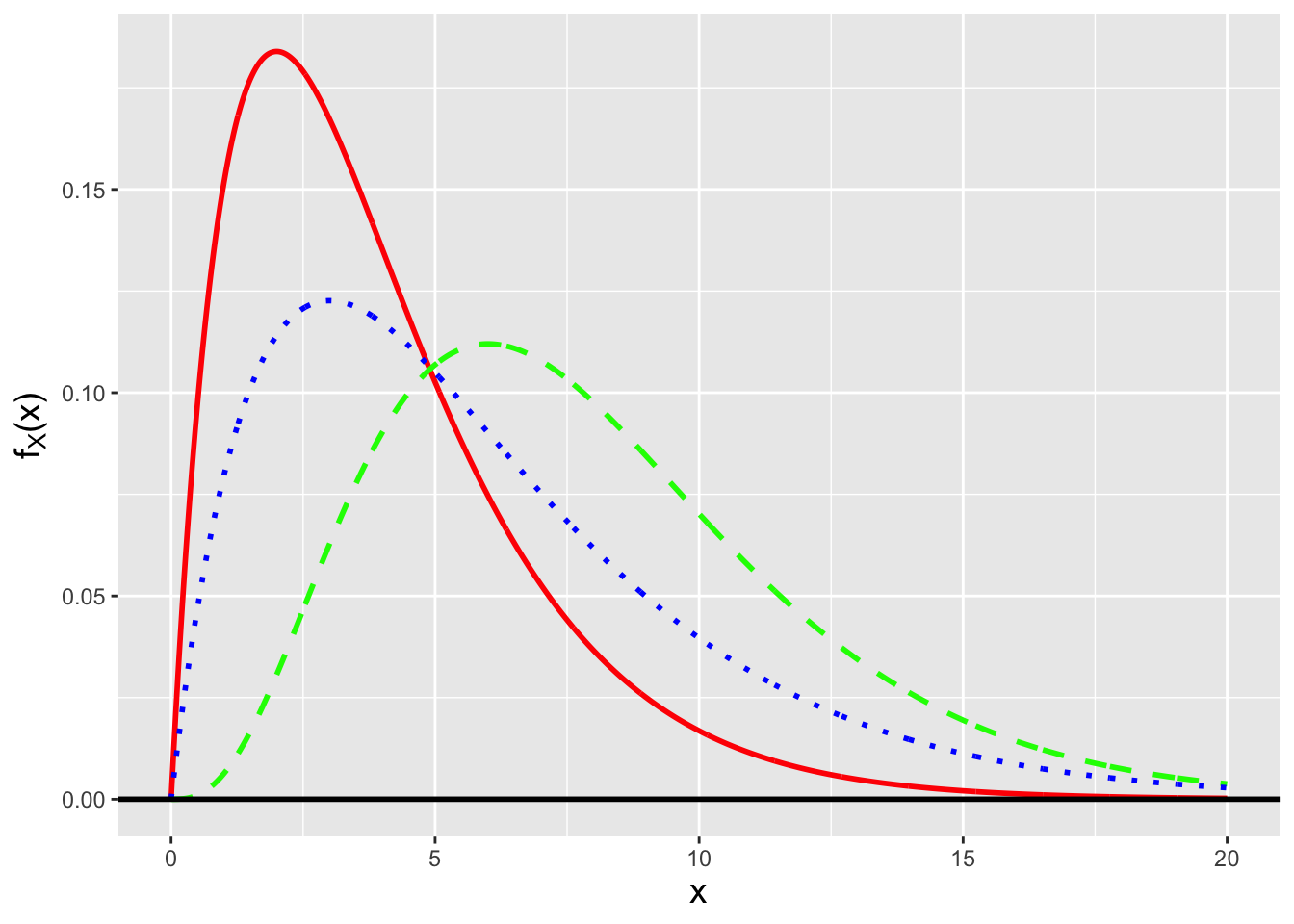

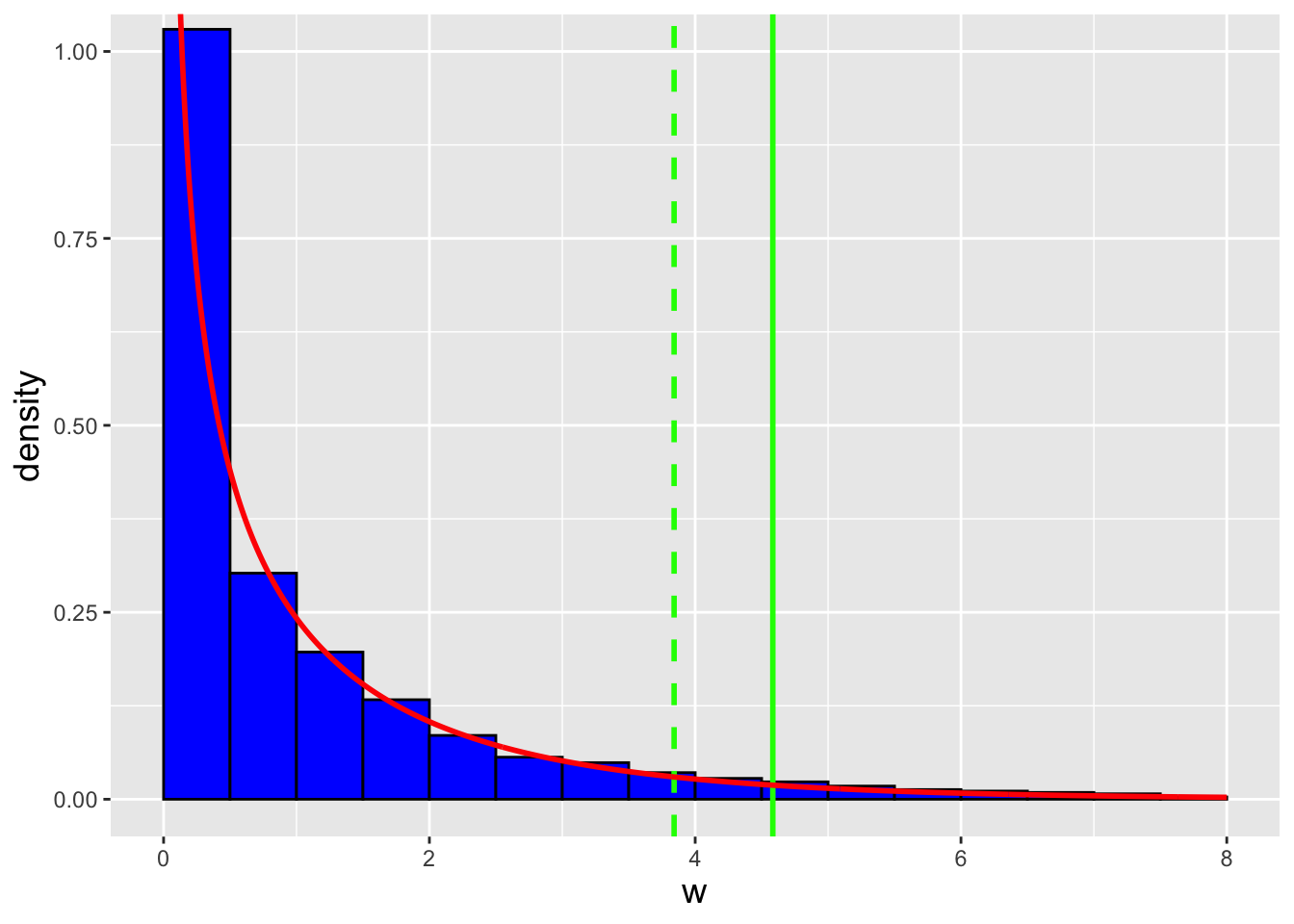

Gamma Distribution. The gamma distribution is a continuous distribution that is commonly used to, e.g., model the waiting times between discrete events. (Recall that we have previously encountered this distribution: given \(n\) iid normal random variables, \(S^2\) is a gamma-distributed random variable.) Its probability density function is given by \[ f_X(x) = \frac{x^{a-1}}{b^a} \frac{\exp\left(-x/b\right)}{\Gamma(a)} \,, \] where \(x \in [0,\infty)\), \(a\) and \(b\) are both \(>\) 0, and \(\Gamma(a)\) is the gamma function: \[ \Gamma(a) = \int_0^\infty u^{a-1} e^{-u} du \,. \] (See Figure 4.3.) \(a\) and \(b\) are referred to as “shape” and “scale” parameters, respectively. (Note that there is an alternative parameterization of the gamma distribution, the so-called “shape/rate” parameterization. So one should always be careful to determine which parameterization that one is working with before performing any analyses.) The gamma family of distributions exhibits a wide variety of shapes and it is the parent family to a number of other distributions, some of which we have met before. One in particular is the exponential distribution, \[ f_X(x) = \frac{1}{b} \exp\left(-\frac{x}{b}\right) \,, \] which is a gamma distribution with \(a = 1\). Note how we lead off above by saying that the gamma distribution is commonly used to model the waiting times between discrete events. The exponential distribution specifically models the waiting time between one event and the next in a homogeneous Poisson process (i.e., one in which \(\lambda\) does not vary as a function of time). The number of strong earthquakes that occur in California in one year? That can be modeled as a Poisson random variable. The time that elapses between two consecutive strong earthquakes in California? That can be modeled using the exponential distribution. (For completeness: the Erlang distribution is a generalization of the exponential distribution, in the sense that we can use it to model the waiting time between the \(i^{\rm th}\) and \((i+a)^{\rm th}\) events, where \(a\) is a positive integer, in a Poisson process.)

| distribution | \(a\) | \(b\) |

|---|---|---|

| exponential | 1 | \((0,\infty)\) |

| Erlang | \(\{1,2,3,\ldots\}\) | \((0,\infty)\) |

| chi-square | \(\{1/2,1,3/2,\ldots\}\) | 2 |

Let’s conclude this section by repeating the exercise we did in the last chapter while discussing the beta distribution, the one in which we examined the functional form of the likelihood function \(\mathcal{L}(p \vert k,x)\). Here, we write down the Poisson likelihood function \[ \mathcal{L}(\lambda \vert x) = \frac{\lambda^x}{x!} e^{-\lambda} \] and compare it with the gamma pdf. We can match the gamma pdf if we map the Poisson \(\lambda\) to the gamma \(x\), the Poisson \(x\) to the gamma \(a-1\), and we set \(b\) to 1. But because the Poisson \(x\) is an integer with values \(\{0,1,2,\ldots\}\), we find that the integrand specifically matches the Erlang pdf, for which \(a = \{1,2,3,\ldots\}\). So, if we observe a random variable \(X \sim\) Poisson(\(\lambda\)), then the likelihood function \(\mathcal{L}(\lambda \vert x)\) has the shape (and normalization!) of a Gamma(\(x+1,1\)) (or Erlang(\(x+1\))) distribution.

(About the normalization: if we integrate the likelihood function over its domain, we find that \[ \frac{1}{x!} \int_0^\infty \lambda^x e^{-\lambda} d\lambda = \frac{1}{x!} \Gamma(x+1) = \frac{x!}{x!} = 1 \,. \] The mathematics works out here because \(x\) is integer valued and thus \(\Gamma(x+1) = x!\).)

Figure 4.3: Three examples of gamma probability density functions: Gamma(2,2) (solid red line), Gamma(4,2) (dashed green line), and Gamma(2,3) (dotted blue line).

4.3.1 Zero-Inflated Poisson Random Variable: Expected Value

The zero-inflated Poisson, or ZIP, distribution has probability mass function \[ p_X(x) = \left\{ \begin{array}{ll} \theta + (1-\theta) e^{-\lambda} & x = 0 \\ (1 - \theta) \frac{\lambda^x}{x!} e^{-\lambda} & x = \{1,2,\ldots\} \end{array} \right. \] Thus the expected value is \[\begin{align*} E[X] = \sum_{x=0}^\infty x p_X(x) &= 0 + \sum_{x=1}^\infty x(1-\theta)\frac{\lambda^x}{x!} e^{-\lambda} \\ &= \sum_{x=1}^\infty (1-\theta)\frac{\lambda^x}{(x-1)!} e^{-\lambda} \\ &= \lambda (1-\theta) \sum_{x=1}^\infty \frac{\lambda^{x-1}}{(x-1)!} e^{-\lambda} \\ &= \lambda (1-\theta) \sum_{y=0}^\infty \frac{\lambda^y}{y!} e^{-\lambda} \\ &= \lambda (1-\theta) \,. \end{align*}\] The variance, which one can derive by working with the second factorial moment \(E[X(X-1)]\), is \(\lambda (1-\theta) (1 + \lambda\theta)\).

4.3.2 Zero-Truncated Poisson Random Variable: Expected Value

The zero-truncated Poisson, or ZTP, distribution has probability mass function \[ p_X(x) = \frac{\lambda^x}{x! (e^\lambda-1)} \,, \] where \(x \in \{1,\ldots\}\) and \(\lambda > 0\). The expected value is thus \[\begin{align*} E[X] &= \sum_{x=1}^\infty x p_X(x) \\ &= \frac{1}{e^\lambda-1} \sum_{x=1}^\infty x \frac{\lambda^x}{x!} \\ &= \frac{1}{e^\lambda-1} \sum_{x=1}^\infty \frac{\lambda^x}{(x-1)!} \\ &= \frac{\lambda}{e^\lambda-1} \sum_{x=1}^\infty \frac{\lambda^{x-1}}{(x-1)!} \\ &= \frac{\lambda e^\lambda}{e^\lambda-1} \sum_{x=1}^\infty \frac{\lambda^{x-1}}{(x-1)!} e^{-\lambda} \\ &= \frac{\lambda e^\lambda}{e^\lambda-1} \sum_{y=0}^\infty \frac{\lambda^y}{y!} e^{-\lambda} \\ &= \frac{\lambda e^\lambda}{e^\lambda-1} = \frac{\lambda}{1-e^{-\lambda}} \,. \end{align*}\] The variance, which one can derive by working with the second factorial moment \(E[X(X-1)]\), is \[ V[X] = \frac{\lambda [ 1 - (\lambda+1) e^{-\lambda} ]}{(1-e^{-\lambda})^2} \,. \]

4.3.3 Gamma Random Variable: Expected Value

The expected value of a gamma random variable is found by introducing constants into the expected value integral so that a gamma pdf integrand is formed. Specifically \[\begin{align*} E[X] = \int_0^\infty x f_X(x) dx &= \int_0^\infty x \frac{x^{a-1}}{b^a} \frac{\exp(-x/b)}{\Gamma(a)} dx \\ &= \int_0^\infty \frac{x^{a}}{b^a} \frac{\exp(-x/b)}{\Gamma(a)} dx \\ &= \int_0^\infty \frac{x^{a}}{b^a} \frac{\exp(-x/b)}{\Gamma(a)} \frac{\Gamma(a+1)}{\Gamma(a+1)} \frac{b^{a+1}}{b^{a+1}} dx \\ &= \int_0^\infty \frac{x^{a}}{b^{a+1}} \frac{\exp(-x/b)}{\Gamma(a+1)} \frac{\Gamma(a+1)}{\Gamma(a)} \frac{b^{a+1}}{b^a} dx \\ &= \frac{\Gamma(a+1)}{\Gamma(a)} \frac{b^{a+1}}{b^a} \int_0^\infty \frac{x^{a}}{b^{a+1}} \frac{\exp(-x/b)}{\Gamma(a+1)} dx \\ &= \frac{a \Gamma(a)}{\Gamma(a)} b \times 1 \\ &= a b \,. \end{align*}\] By introducing the constants, we are able to transform the integrand to that of a Gamma(\(a+1,b\)) distribution, and because the integral is over the entire domain of a gamma distribution, the integral evaluates to 1.

A similar calculation involving the derivation of \(E[X^2]\) allows us to determine that the variance of a gamma random variable is \(V[X] = a b^2\).

4.3.4 Exponential Distribution: Memorylessness

An important feature of the exponential distribution is that when we use it to model, e.g., the lifetimes of components in a system, it exhibits memorylessness. In other words, if \(T\) is the random variable representing a component’s lifetime, where \(T \sim\) Exponential(\(b\)) and where \(E[T] = b\), it doesn’t matter how old the component is when we first examine it: from that point onward, the average lifetime will be \(b\).

Let’s demonstrate how this works. We examine a component “born” at time \(t_0=0\) at a later time \(t_1\), and we wish to determine the probability that it will live beyond an even later time \(t_2\). In other words, we wish to compute \[ P(T \geq t_2-t_0 \vert T \geq t_1-t_0) \,. \] (We know the component lived to time \(t_1\), hence the added condition.) Let \(T \sim\) Exponential(\(b\)). Then \[\begin{align*} P(T \geq t_2-t_0 \vert T \geq t_1-t_0) &= \frac{P(T \geq t_2-t_0 \cap T \geq t_1-t_0)}{P(T \geq t_1-t_0)} \\ &= \frac{P(T \geq t_2-t_0)}{P(T \geq t_1-t_0)} \\ &= \frac{\int_{t_2-t_0}^\infty (1/b) \exp(-t/b) dt}{\int_{t_1-t_0}^\infty (1/b) \exp(-t/b) dt} \\ &= \frac{\left. -\exp(-t/b) \right|_{t_2-t_0}^\infty}{\left. -\exp(-t/b) \right|_{t_1-t_0}^\infty} \\ &= \frac{0 + \exp(-(t_2-t_0)/b)}{0 + \exp(-(t_1-t_0)/b)} \\ &= \exp[-(t_2-t_1)/b] = P(T \geq t_2-t_1) \,. \end{align*}\] Note that \(t_0\) drops out of the final result: no matter how long ago \(t_0\) might have been, the probability that the component will live \(t_2-t_1\) units longer is the same, and the average additional lifetime is still \(b\).

4.4 Linear Functions of Poisson Random Variables

What to take away from this section:

The method of moment-generating functions allows us to determine that…

the sum of \(n\) iid Poisson random variables is itself Poisson distributed; and

the mean of \(n\) iid binomial random variables has a known but typically unutilized distribution: it is the sample sum distribution with a transformed domain.

Foreshadowing: the sample sum result will allow us to make statistical inferences about the Poisson expected counts parameter \(\lambda\), given \(n\) iid normal random variables.

Let’s assume we are given \(n\) iid Poisson random variables: \(X_1,X_2,\ldots,X_n \sim\) Poisson(\(\lambda\)). What is the distribution of \(Y = \sum_{i=1}^n a_i X_i\)?

Recall: the moment-generating function, or mgf, is a means by which to encapsulate information about a probability distribution. When it exists, the mgf is given by \(m_X(t) = E[e^{tX}]\). Also, if \(Y = \sum_{i=1}^n a_iX_i\), then \(m_Y(t) = m_{X_1}(a_1t) m_{X_2}(a_2t) \cdots m_{X_n}(a_nt)\); if we can identify \(m_Y(t)\) as the mgf for a known family of distributions, then we can immediately identify the distribution for \(Y\) and the parameters of that distribution.

If \(X\) is a Poisson random variable, then \[\begin{align*} m_X(t) = E[e^{tX}] &= \sum_{x=0}^\infty e^{tx} p_X(x) = \sum_{x=0}^\infty e^{tx} \frac{\lambda^x}{x!} e^{-\lambda} = e^{-\lambda} \sum_{x=0}^\infty \frac{\lambda^x}{x!} e^{tx} \\ &= e^{-\lambda} \left[ 1 + \lambda e^t + \frac{\lambda^2}{2!}e^{2t} + \ldots \right] = e^{-\lambda} \left[ 1 + y + \frac{y^2}{2!} + \ldots \right] \\ &= e^{-\lambda} e^y = \exp(-\lambda) \exp(\lambda e^t) = \exp[\lambda(e^t-1)] \,. \end{align*}\] Thus the mgf for \(Y = \sum_{i=1}^n X_i\) is \[ m_Y(t) = \exp\left[\lambda\left(e^t-1\right)\right] \cdots \exp\left[\lambda\left(e^t-1\right)\right] = \exp\left[(\lambda+\cdots+\lambda)(e^t-1)\right] = \exp\left[n\lambda(e^t-1)\right] \,. \] This mgf retains the form of a Poisson mgf. We thus see that the sum of \(n\) iid Poisson-distributed random variables is itself Poisson distributed with parameter \(n\lambda\), i.e., \(Y = \sum_{i=1}^n X_i \sim\) Poisson(\(n\lambda\)).

As was the case for the binomial distribution, the

sample mean of \(n\) iid Poisson-distributed random variables has

a sampling distribution whose masses are identical to those observed for

the sample sum,

but whose domain shifts from \(\{0,1,\cdots\}\) to \(\{0,1/n,\cdots\}\).

(As a reminder, we can derive this result mathematically by making

the general transformation \(\sum_{i=1}^n X_i \rightarrow (\sum_{i=1}^n X_i)/n\).)

While we could define, e.g., the sample mean pmf ourselves

using our own R function, there is no real need to: e.g., if we wish to

construct a confidence interval for \(\lambda\),

we can simply construct one for \(n\lambda\) using the

sum of the data as a statistic, and then divide each bound by \(n\).

(We could also, in theory, utilize the Central Limit Theorem if

\(n \gtrsim 30\), but there is absolutely no reason to do that to make

inferences about \(\lambda\): we know the distribution of the sum of the data

exactly, and thus there is no need to fall back upon approximations.)

4.4.1 Two Poisson Random Variables: Difference Distribution

Let’s assume that we are pointing a camera at an object, such as a star. A star gives off photons at a particular average rate \(r_S\) (with units of, e.g., photons per second). Thus if we open the shutter for a length of time \(t\), the number of photons we observe from the star is a Poisson random variable \(S \sim\) Poisson(\(\lambda_S=r_St\)). But the star is not the only object in the field of view; there may be other objects in the background that give off photons at a rate \(r_B\), and the number of photons we observe from the background will be \(B \sim\) Poisson(\(\lambda_B=r_Bt\)). Thus what we record is not \(S\), but \(T = S+B\)…so how can we make statistical inferences about \(S\) itself?

One possibility is to point the camera to an “empty” field near the star, and record some number of photons \(B\) over the same amount of time. Then we can estimate \(S\) using \(S = T - B\). What is the distribution of \(S\)?

We can utilize the method of moment-generating functions and write that \[\begin{align*} m_S(t) = m_T(t) m_B(-t) &= \exp[\lambda_T(e^t-1)] \exp[\lambda_B(e^{-t}-1)] \\ &= \exp[\lambda_T(e^t-1) + \lambda_B(e^{-t}-1)] \\ &= \exp[-(\lambda_T+\lambda_B) + \lambda_Te^t + \lambda_Be^{-t}] \,, \end{align*}\] where \(\lambda_T = \lambda_S+\lambda_B = (r_S+r_B)t\). At first, utilizing the method of mgfs appears to be a fool’s errand: this is not an mgf we know. But it turns out that the family of distributions associated with this mgf does have a name: \(S = T-B\) is a random variable sampled according to a Skellam distribution, with mean \(\lambda_T-\lambda_B\) and variance \(\lambda_T+\lambda_B\). We can work with this distribution to, e.g., construct confidence intervals for \(\mu_S\), etc., if we so choose. (Note, however, that in order to do this we would need to fix \(\mu_B\)…or we would have to move into the realm of numerical confidence region construction, which is beyond the scope of this book.)

4.4.2 Zero-Inflated Poisson Distribution: Moment-Generating Function

Recall that the zero-inflated Poisson, or ZIP, distribution has probability mass function \[ p_X(x) = \left\{ \begin{array}{ll} \theta + (1-\theta) e^{-\lambda} & x = 0 \\ (1 - \theta) \frac{\lambda^x}{x!} e^{-\lambda} & x = \{1,2,\ldots\} \end{array} \right. \] If we have \(n\) iid data distributed according to a ZIP distribution, can we determine the distribution of, e.g., the sample sum?

The moment-generating function for the ZIP distribution is \[\begin{align*} m_X(t) = E[e^{tX}] &= \sum_{x=0}^\infty e^{tx} p_X(x) \\ &= \theta + (1-\theta)e^{-\lambda} + \sum_{x=1}^\infty e^{tx} (1-\theta) \frac{\lambda^x}{x!} e^{-\lambda} \\ &= \theta + \sum_{x=0}^\infty e^{tx} (1-\theta) \frac{\lambda^x}{x!} e^{-\lambda} \\ &= \theta + (1-\theta) e^{-\lambda} \sum_{x=0}^\infty \frac{\lambda^x}{x!} e^{tx} \\ &= \theta + (1-\theta) \exp(-\lambda) \exp(\lambda e^t) = \theta + (1-\theta) \exp[\lambda(e^t-1)] \,. \end{align*}\] We have already seen how to evaluate the final summation, in an example just above.

The mgf for, e.g., the sample sum is \[ m_Y(t) = \prod_{i=1}^n m_{X_i}(t) = \left[ m_X(t) \right]^n = \left[ \theta + (1-\theta) \exp[\lambda(e^t-1)] \right]^n \,. \] It is clear that this is not the mgf of a ZIP distribution (or any other distribution with which we are familiar). Hence any statistical inference that we would wish to perform given ZIP-distributed data would require us to utilize simulations. We show how to use simulations to, e.g., construct confidence intervals in an example later in this chapter.

(We note, for completeness, that we run into the same issue with the zero-truncated Poisson distribution. We can derive the mgf easily enough… \[ m_X(t) = \frac{\exp[\lambda(e^t-1)] - \exp(-\lambda)}{1 - \exp(-\lambda)} \] …but we cannot use it to derive an exact sampling distribution for, e.g., the sample sum.)

4.4.3 Exponential Data: Sample Sum Distribution

As stated above, the exponential distribution, i.e., the gamma distribution with \(\alpha = 1\), is used to model the waiting time between two successive events in a Poisson process. Let’s assume that we have recorded \(n\) separate times between \(n\) separate pairs of events. What is the distribution of \(T = T_1 + \cdots + T_n\)?

As we do when faced with a linear function of \(n\) iid random variables, we utilize the method of moment-generating functions: \[ m_T(t) = \prod_{i=1}^n m_{T_i}(t) \,, \] where \(m_{T_i}(t) = (1-b t)^{-1}\) is the mgf for the exponential distribution. Thus \[ m_T(t) = \prod_{i=1}^n (1-b t)^{-1} = (1-b t)^{-n} \,. \] This has the form of the mgf for a Gamma(\(n,b\)) distribution, or, equivalently, an Erlang(\(n,b\)) distribution. In other words the sum of \(n\) iid waiting times has the same distribution as the waiting time between the \(i^{\rm th}\) and \((i+n)^{\rm th}\) events of a Poisson process.

4.5 Point Estimation

What to take away from this section:

Along with the maximum likelihood estimator and the minimum variance unbiased estimator, there has historically been a third point estimator that practitioners have used: the method of moments estimator or MoM estimator.

The MoM estimator is useful when, e.g., we are trying to jointly estimate two or more parameter values, or when we are trying to estimate one value and we cannot analytically derive either the MLE or the MVUE.

A MoM estimator does not possess the invariance property, nor is there a guarantee that it converges in distribution to a normal random variable.

In the age of computers, the method of moments has been superseded by the use of numerical optimization to estimate the MLE.

Previously, we described two commonly used point estimators: the maximum likelihood estimator (or MLE) and the minimum variance unbiased estimator (or MVUE). We review both below in examples, in the context of estimating the Poisson \(\lambda\) parameter. Here, for completeness, we introduce one last, less-commonly used approach, the so-called method of moments estimator.

The method of moments (or MoM) is a classic means by which to define estimators that has been historically useful when we face with the following situation: (a) the likelihood function is not easily differentiated, because it contains terms like \(\Gamma(\theta)\); and (b) the distribution has two or more free parameters, rendering it difficult (though certainly not impossible) to define the MVUE, since we would have to work with joint sufficient statistics and their multivariate sampling distributions. Now, why do we say “historically useful”? Because in the age of computers, we can always determine MLEs (or, at least, their numerical values) via numerical optimization (as shown in an example below), which effectively eliminates any need to ever use the MoM estimator in practice.

Recall that by definition, \(E[X^k]\) is the \(k^{\rm th}\) moment of the distribution of the random variable \(X\). We can define analagous sample moments, e.g., \[ m_1 = \frac{1}{n} \sum_{i=1}^n X_i = \bar{X} ~~ \mbox{and} ~~ m_2 = \frac{1}{n} \sum_{i=1}^n X_i^2 = \overline{X^2} \,. \] (For \(m_2\): note that the average of \(X_i^2\) is not the same as the average of \(X_i\), squared.) Let’s suppose that we have \(p\) parameters that we are trying to estimate. In method of moments estimation, we generally set the first \(p\) population moments equal to the first \(p\) sample moments and solve the system of equations to determine parameter estimates. These estimates are generally consistent, but also may be biased. (Situations may exist where higher-order moments may be preferable to use, such as when the one parameter of a distribution is \(\sigma^2\) and thus we might derive a better estimator using the second moment rather than the first, but typically we use the first \(p\) moments.)

For the Poisson distribution, there is one parameter to estimate and thus we set \(\mu_1' = E[X] = \lambda = m_1' = \bar{X}\). We thus find that \(\hat{\lambda}_{MoM} = \bar{X}\). For a more relevant example of method of moments usage, see below.

4.5.1 Poisson Expected Counts: Maximum Likelihood Estimator

Recall: the value of \(\theta\) that maximizes the likelihood function is the maximum likelihood estimate, or MLE, for \(\theta\). The maximum is found by taking the (partial) derivative of the (log-)likelihood function with respect to \(\theta\), setting the result to zero, and solving for \(\theta\). That solution is the maximum likelihood estimate \(\hat{\theta}_{MLE}\). Also recall the invariance property of the MLE: if \(\hat{\theta}_{MLE}\) is the MLE for \(\theta\), then \(g(\hat{\theta}_{MLE})\) is the MLE for \(g(\theta)\).

The likelihood function for the Poisson parameter \(\lambda\) is \[ \mathcal{L}(\lambda \vert \mathbf{x}) = \prod_{i=1}^n \frac{\lambda^{x_i}}{x_i!} \exp(-\lambda) \] and the log-likelihood is \[ \ell(\lambda \vert \mathbf{x}) = \left(\sum_{i=1}^n x_i\right) \log \lambda - n \lambda - \log\left(\prod_{i=1}^n x_i!\right) \,. \] (Note that we could write this as \[ \ell(\lambda \vert \mathbf{x}) \propto \left(\sum_{i=1}^n x_i\right) \log \lambda - n \lambda \,, \] where \(\propto\) is the proportionality symbol, because the last term in the original log-likelihood will disappear during differentiation because it does not contain \(\lambda\).) The derivative of \(\ell(\lambda \vert \mathbf{x})\) with respect to \(\lambda\) is \[ \frac{d\ell}{d\lambda} = \left(\frac{1}{\lambda}\sum_{i=1}^n x_i \right) - n \,. \] Setting the derivative to zero and rearranging terms, we find that \[ \hat{\lambda}_{MLE} = \frac{1}{n} \sum_{i=1}^n X_i = \bar{X} \] is the MLE for \(\lambda\).

Recall: the bias of an estimator is the difference between the average value of the estimates it generates and the true parameter value. If \(E[\hat{\theta}-\theta] = 0\), then the estimator \(\hat{\theta}\) is said to be unbiased.

By the general rule for \(\bar{X}\) introduced in Chapter 1, \(E[\hat{\lambda}_{MLE}] = E[\bar{X}] = \lambda\), so \(\hat{\lambda}_{MLE}\) is an unbiased estimator. (There is no guarantee that the MLE will always be an unbiased estimator\(-\)it just happens to be so here\(-\)but it will always be at least asymptotically unbiased.)

Recall: an estimator is consistent if its mean-squared error, \(B[\hat{\theta}]^2 + V[\hat{\theta}]\), goes to zero as the sample size \(n\) goes to infinity.

\(V[\hat{\lambda}_{MLE}] = \lambda/n\), so \(\hat{\lambda}_{MLE}\) is a consistent estimator, since \(\hat{\lambda}_{MLE} \rightarrow \lambda\) as \(n \rightarrow \infty\). (The MLE is always a consistent estimator.)

If we wish to find the MLE for a function of the parameter, e.g., \(\lambda^2\), we simply apply that function to \(\hat{\theta}_{MLE}\). Hence \(\hat{\lambda^2}_{MLE}\) is \(\bar{X}^2\). This is the invariance property of the MLE.

Last, recall that the MLE converges in distribution to a normal random variable with mean \(\theta\) and variance \(1/I_n(\theta)\), where \(I_n(\theta)\) is the Fisher information content of the data sample. Here, that means that \[ \hat{\lambda} \overset{d}{\rightarrow} Y \sim \mathcal{N}\left(\lambda,\frac{\lambda}{n}\right) \,. \]

4.5.2 Poisson Expected Counts: Minimum Variance Unbiased Estimator

Recall: deriving the minimum variance unbiased estimator involves two steps:

- factorizing the likelihood function to uncover a sufficient statistic \(U\) (that we assume is both minimal and complete); and

- finding a function \(h(U)\) such that \(E[h(U)] = \lambda\).

A sufficient statistic for a parameter (or parameters) \(\theta\) captures all information about \(\theta\) contained in the sample.

When we factorize the likelihood function, \[ \mathcal{L}(\lambda \vert \mathbf{x}) = \prod_{i=1}^n \frac{\lambda^{x_i}}{x_i!} e^{-\lambda} = \left(\prod_{i=1}^n \frac{1}{x_i!}\right) \left(\prod_{i=1}^n \lambda^{x_i}e^{-\lambda}\right) = \underbrace{\left(\prod_{i=1}^n \frac{1}{x_i!}\right)}_{h(\mathbf{x})} \underbrace{\lambda^{\sum_{i=1}^n x_i}e^{-n\lambda}}_{g\left(\sum_{i=1}^n x_i,\lambda\right)} \,, \] we see that a sufficient statistic is \(Y = \sum_{i=1}^n X_i\). Let’s determine the expected value for \(Y\): \[ E[Y] = E\left[\sum_{i=1}^n X_i\right] = \sum_{i=1}^n E[X_i] = \sum_{i=1}^n \lambda = n\lambda \,. \] Thus \(h(Y) = Y/n = \bar{X}\) is the MVUE for \(\lambda\). As this matches the MLE, we know already that in addition to being unbiased, the MVUE for \(\lambda\) is a consistent estimator.

The next question is whether the variance of the MVUE achieves the Cramer-Rao Lower Bound on the variance of an unbiased estimator. We show that it does in an example below. However, achieving the CRLB is not a guarantee in all cases: when the MVUE does not, it simply means that there does not exist an unbiased estimator that achieves the CRLB.

4.5.3 Death-by-Horse-Kick: Point Estimate

We begin this chapter by displaying the number of deaths per Prussian army corps per year resulting from horse kicks. Leaving aside the question of whether it is plausible that these data are truly Poisson distributed (a question we answer later in this chapter), what is the estimated rate of death per corps per year?

The total number of events observed are \[ 0 \times 109 + 1 \times 65 + 2 \times 22 + 3 \times 3 + 4 \times 1 = 65 + 44 + 9 + 4 = 122 \,, \] and the total sample size is \(n = 200\), so \[ \hat{\lambda} = \bar{X} = \frac{1}{n} \sum_{i=1}^n X_i = \frac{122}{200} = 0.61 \,. \] This is the MLE, the MVUE, and the MoM estimate for \(\lambda\). Later, we will use these data to estimate a 95% confidence interval for \(\lambda\).

4.5.4 The CRLB for the Variance of Expected Counts Estimators

Recall: the Cramer-Rao Lower Bound (or CRLB) is the lower bound on the variance of any unbiased estimator. If an unbiased estimator achieves the CRLB, it is the MVUE…but it can be the case that the MVUE does not achieve the CRLB. For a discrete distribution, the CRLB is given by \[ V_{\rm CRLB}[\hat{\theta}] = -\left(nE\left[\frac{d^2}{d\theta^2} \log p_X(X \vert p) \right]\right)^{-1} = \frac{1}{nI(\theta)} \] where \(I(\theta)\) is the Fisher information: \[ I(\theta) = -E\left[ \frac{\partial^2}{\partial \theta^2} \log f_X(x \vert \theta) \right] \,. \]

For the Poisson distribution, \[\begin{align*} p_X(x \vert \lambda) &= \frac{\lambda^x}{x!}e^{-\lambda} \\ \log p_X(x \vert \lambda) &= x \log \lambda - \lambda - \log x! \\ \frac{d}{d\lambda} \log p_X(x \vert \lambda) &= \frac{x}{\lambda} - 1 \\ \frac{d^2}{d\lambda^2} \log p_X(x \vert \lambda) &= -\frac{x}{\lambda^2} \\ I(\lambda) = -E \left[ \frac{d^2}{d\lambda^2} \log p_X(X \vert \lambda) \right] &= \frac{1}{\lambda^2} E[X] \\ &= \frac{1}{\lambda^2} \lambda = \frac{1}{\lambda} \,. \end{align*}\] Thus \[ V_{\rm CRLB}[\hat{\lambda}] = \frac{1}{n/\lambda} = \frac{\lambda}{n} \,. \] As we already know that \(V[\bar{X}] = V[X]/n = \lambda/n\), we can say that \(\hat{\lambda}_{MLE}\), \(\hat{\lambda}_{MVUE}\), and \(\hat{\lambda}_{MoM}\) all achieve the CRLB.

4.5.5 Minimum Variance Unbiased Estimation: Lack of Invariance

As stated above, the MVUE does not possess the property of invariance. To demonstrate this, we define the MVUE for \(\lambda^2\).

The first thing to notice is that we cannot fall back on factorization to determine an appropriate sufficient statistic, since \(\lambda^2\) does not appear directly in the likelihood function. So we iterate: we make an initial guess and see where that guess takes us, and we guess again if our initial guess is wrong, etc.

A seemingly appropriate first “guess” for \(\lambda^2\) is \(\bar{X}^2\): \[ E[\bar{X}^2] = V[\bar{X}] + (E[\bar{X}])^2 = \frac{\lambda}{n} + \lambda^2 \] We do get the term \(\lambda^2\) here…but we also get \(\lambda/n\). Hmm…so let’s try \(\bar{X}^2 - \bar{X}/n\) instead: \[ E\left[\bar{X}^2 - \frac{\bar{X}}{n}\right] = E[\bar{X}^2] - \frac{1}{n}E[\bar{X}] = \frac{\lambda}{n} + \lambda^2 - \frac{\lambda}{n} = \lambda^2 \,. \] Done! The MVUE for \(\lambda^2\) is thus \(\hat{\lambda^2}_{MVUE} = \bar{X}^2-\bar{X}/n\), which is not equal to \(\hat{\lambda^2}_{MLE} = \bar{X}^2\) (except in the limit \(n \rightarrow \infty\)).

4.5.6 Gamma Distribution: Method of Moments Estimation

Recall that the probability density function for a gamma random variable \(X\) is \[ f_X(x) = \frac{x^{a-1}}{b^{a}} \frac{\exp(-x/b)}{\Gamma(a)} \,, \] for \(x \geq 0\) and \(a,b > 0\). The expected value is \(E[X] = a b\) while the variance is \(V[X] = a b^2\) (and thus \(E[X^2] = V[X] + (E[X^2])^2 = a b^2 + a^2 b^2\)).

Let’s assume we have \(n\) iid gamma-distributed random variables. Because there are two parameters, we match the first two moments: \[\begin{align*} \mu_1' = E[X] = a b &= m_1' = \bar{X} \\ \mu_2' = E[X^2] = a b^2 + a^2 b^2 &= m_2' = \frac{1}{n}\sum_{i=1}^n X_i^2 = \overline{X^2} \,. \end{align*}\] We thus have a system of two equations with two unknowns. Let \(b = \bar{X}/a\). Then \[\begin{align*} a \left( \frac{\bar{X}}{a} \right)^2 + a^2 \left( \frac{\bar{X}}{a} \right)^2 &= \overline{X^2} \\ \Rightarrow ~~~~~~ \frac{(\bar{X})^2}{a} &= \overline{X^2} - (\bar{X})^2 \\ \Rightarrow ~~~~~~ \hat{a}_{MoM} &= \frac{(\bar{X})^2}{\overline{X^2} - (\bar{X})^2} \,, \end{align*}\] and thus \[ \hat{b}_{MoM} = \frac{\bar{X}}{\hat{a}_{MoM}} = \frac{\overline{X^2} - (\bar{X})^2}{\bar{X}} \,. \]

4.5.7 Gamma Distribution: Numerical Maximum Likelihood Estimation

As noted above, a reason to utilize method of moments estimation is that it can “work” in those situations where we cannot derive the MVUE or the MLE. For instance, let’s suppose that we draw \(n\) iid data from a gamma distribution, which has the following probability density function: \[\begin{align*} f_X(x) = \frac{x^{a-1}}{b^a} \frac{e^{-x/b}}{\Gamma(a)} \,, \end{align*}\] where \(x \in [0,\infty)\) and \(a,b > 0\). If \(a\) and \(b\) are both freely varying, then we are in a situation in which we have joint sufficient statistics…and thus we are not able to compute the MVUEs. We then fall back on trying to compute the MLEs…but what, e.g., is the partial derivative of \(n \log \Gamma(a)\) with respect to \(a\)?

With computers, we can circumvent this last issue by attempting to maximize the value of the likelihood function, as a function of \(a\) and \(b\), using a numerical optimizer.

The subject of numerical optimization is far too vast for us to be able to cover all the important details here. It suffices to say that our goal is to (a) define an objective function (here, the log-likelihood), and (b) pass that function into an optimizer that explores the space of free parameters (here, \(a\) and \(b\)) and returns the parameter values that optimize the objective function’s value (here, the ones that maximize the log-likelihood).

Let’s start with the objective function. If the pdf or pmf of our random variables is already coded in

R, we can use that coded function rather than write out the function mathematically. Recall that when we have sampled iid (continuously valued) data, \[\begin{align*} \ell(\theta \vert \mathbf{x}) = \sum_{i=1}^n \log f_X(x_i \vert \theta) \end{align*}\] For the specific case of the gamma distribution, we can convert this statement into code:

sum(log(dgamma(x, shape=a, scale=b)))where

dgamma()is the gamma pdf function. This is the objective function, except for one tweak that we will make: becauseR’soptim()function minimizes the objective function value by default, and we want to maximize the log-likelihood value, we will use

-sum(log(dgamma(x, shape=a, scale=b)))instead. So let’s now write down the full function:

f <- function(par, x)

{

-sum(log(dgamma(x, shape=par[1], scale=par[2])))

}Note how

Rexpects the parameter values to be contained within a single vector, which here we callpar.

The rest of the coding is straightforward: (a) we initialize the vector

parwith initial (good!) guesses for the values of the parameters, (b) passparand the observed data intooptim(), and (c) access theparelement of the list output byoptim(). The initial parameter values should be “close enough” to the optimized values so that the optimizer does not wander off to a local minimum that is not the global minimum. (And a good way to generate plausible initial values is to utilize the MoM estimator!)

set.seed(236)

# generate observed data

n <- 40

a <- 2.440 # arbitrarily chosen true values

b <- 5.390

X <- rgamma(n, shape=a, scale=b)

# compute MLE values via optimization

f <- function(par, x)

{

-sum(log(dgamma(x, shape=par[1], scale=par[2])))

}

# generate initial guesses using MoM estimator

a.MoM <- mean(X)^2/(mean(X^2)-mean(X)^2)

b.MoM <- mean(X)/a.MoM

par <- c(a.MoM, b.MoM)

# optimize

opt <- optim(par, f, x=X) # need to specify x via an extra argument

round(opt$par, 3)## [1] 2.274 4.932We thus find that \(\hat{a}_{\rm MLE} = 2.274\) and \(\hat{b}_{\rm MLE} = 4.932\). These values are close to, but not equal to, the true values; because these are MLEs, we know that our estimates will get closer and closer to the true values as \(n \rightarrow \infty\).

4.6 Confidence Intervals

What to take away from this section:

Given \(n\) iid data sampled according to a normal distribution, we can utilize numerical root-finding frameworks to construct \(100(1-\alpha)\)-percent confidence intervals for the Poisson expected counts parameter \(\lambda\).

If we do not know the sampling distribution for our chosen statistic \(Y\), but we do know (or can assume) the distribution that governs the sampling of each of our \(n\) iid data, we can utilize a simulation framework to construct interval estimates that are exact (i.e., that match what we would find if we did know the sampling distribution of \(Y\)) to within, e.g., one part in 10,000.

It is only if we do not know the sampling distribution of our chosen statistic \(Y\), and we do not know (nor do we wish to assume) the distribution that governs the sampling of each of our \(n\) iid data, that we would make use of bootstrapping, in which we would, e.g., resample the observed data with replacement so as to create an empirical sampling distribution for \(Y\).

Recall: a confidence interval is a random interval \([\hat{\theta}_L,\hat{\theta}_U]\) that overlaps (or covers) the true value \(\theta\) with probability \[ P\left( \hat{\theta}_L \leq \theta \leq \hat{\theta}_U \right) = 1 - \alpha \,, \] where \(1 - \alpha\) is the confidence coefficient. Note that this is a long-term probabilistic statement that is not to be applied to any one numerically evaluated interval: an evaluated interval either overlaps the true value, or it does not (and thus we cannot say there is a \(100(1-\alpha)\)-percent chance that \(\theta\) lies within the interval). We determine \(\hat{\theta}\) by solving the following equation: \[ F_Y(y_{\rm obs} \vert \theta) - q = 0 \] for \(\theta\), where \(F_Y(\cdot)\) is the cumulative distribution function for the statistic \(Y\), \(y_{\rm obs}\) is the observed value of the statistic, and \(q\) is an appropriate quantile value that is determined using the confidence interval reference table introduced in section 16 of Chapter 1.

As far as the construction of confidence intervals given a discrete sampling distribution goes, nothing changes algorithmically from where we were in Chapter 3; in an example below, we review how we would construct an interval for the Poisson parameter \(\lambda\).

In the context of statistical inference, there is one more important question to answer. What do we do if we neither know nor are willing to assume the distribution from which our data are sampled? Our root-finding algorithm relies upon knowing the sampling distribution of an observed statistic, and that in turn relies on knowing the distribution from which we draw each of our \(n\) iid data. One thing we can do is fall back upon bootstrapping. We demonstrate bootstrap confidence interval estimation in an example below.

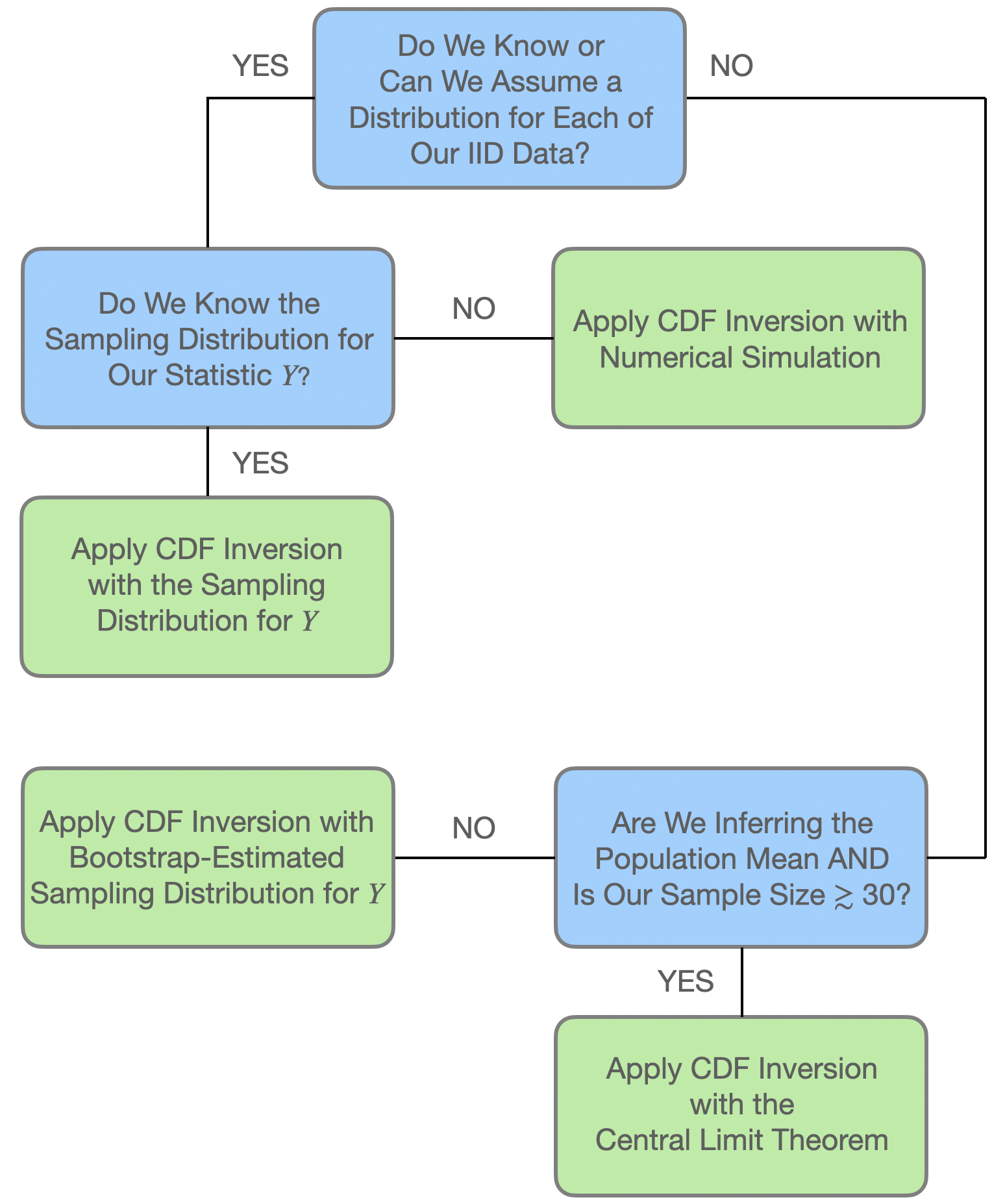

At this point, it is useful for us to review how we would pursue the construction of confidence intervals in different analysis situations. See Figure 4.4. We can summarize the chart as follows: if we have \(n\) iid data \(\{X_1,\ldots,X_n\}\) sampled according to some distribution \(P\), and we have computed some statistic \(Y = g(X_1,\ldots,X_n)\), then…

- if we know the sampling distribution for \(Y\), use it to construct the interval as we have throughout this book; or

- if we do not know the sampling distribution for \(Y\), but do know the distribution according to which each individual datum is sampled, then we utilize that distribution in a simulation framework (as demonstrated in an example below); or

- if we do not know the sampling distribution for \(Y\), and we do not know the distribution for each of our individual data, then we fall back upon, e.g., nonparametric bootstrapping (as demonstrated in another example below).

However, regarding the third point: if our goal is to infer the population mean \(\mu\), and our sample size is \(\gtrsim 30\), then we can utilize the Central Limit theorem instead of bootstrapping and circle back to first point above, while assuming the sampling distribution \(Y = \bar{X} \sim \mathcal{N}(\mu,S^2/n)\).

Is there a cost to nonparametric bootstrapping? The algorithm seems easy enough to implement, and if we are trying to statistically infer the population mean using, e.g., the sample median, there would be certainly be less mathematical work involved. The short answer is that, for small samples, the actual coverage of bootstrap confidence intervals is generally less than the advertised coverage, with the difference between the advertised and observed coverage going to zero as \(n\) goes to infinity. There is no such issue when we utilize the exact sampling distribution for \(Y\), or generate a sufficient number of datasets in a simulation framework. In other words: when we can generate exact confidence intervals, we should do the work necessary to generate them.

Figure 4.4: A flowchart showing how to apply our confidence interval methodology (dubbed cdf inversion in the chart) in different analysis situations. From Freeman (2026).

4.6.1 Poisson Expected Counts: Confidence Interval

Assume that we sample \(n\) iid data. We know that the sum \(Y = \sum_{i=1}^n X_i\) is a sufficient statistic for \(\lambda\) and that \(Y \sim\) Poisson(\(n\lambda\)). For this statistic, \(E[Y] = n\lambda\), which increases with \(\lambda\), so we know that we will utilize the “yes” lines of the confidence interval reference table. Our observed test statistic is \(y_{\rm obs} = \sum_{i=1}^n x_i\).

# Let's assume we observe ten years of data in a Poisson process

set.seed(101)

alpha <- 0.05

n <- 10

lambda <- 8

X <- rpois(n, lambda=lambda)

f <- function(nlambda, y.obs, q)

{

ppois(y.obs, lambda=nlambda) - q

}

round(uniroot(f, interval=c(0.001,10000), y.obs=sum(X)-1, 1-alpha/2)$root/n, 3)## [1] 5.722## [1] 9.179Note both the discreteness correction that we apply when deriving the lower bound and the division by \(n\) after the calls to

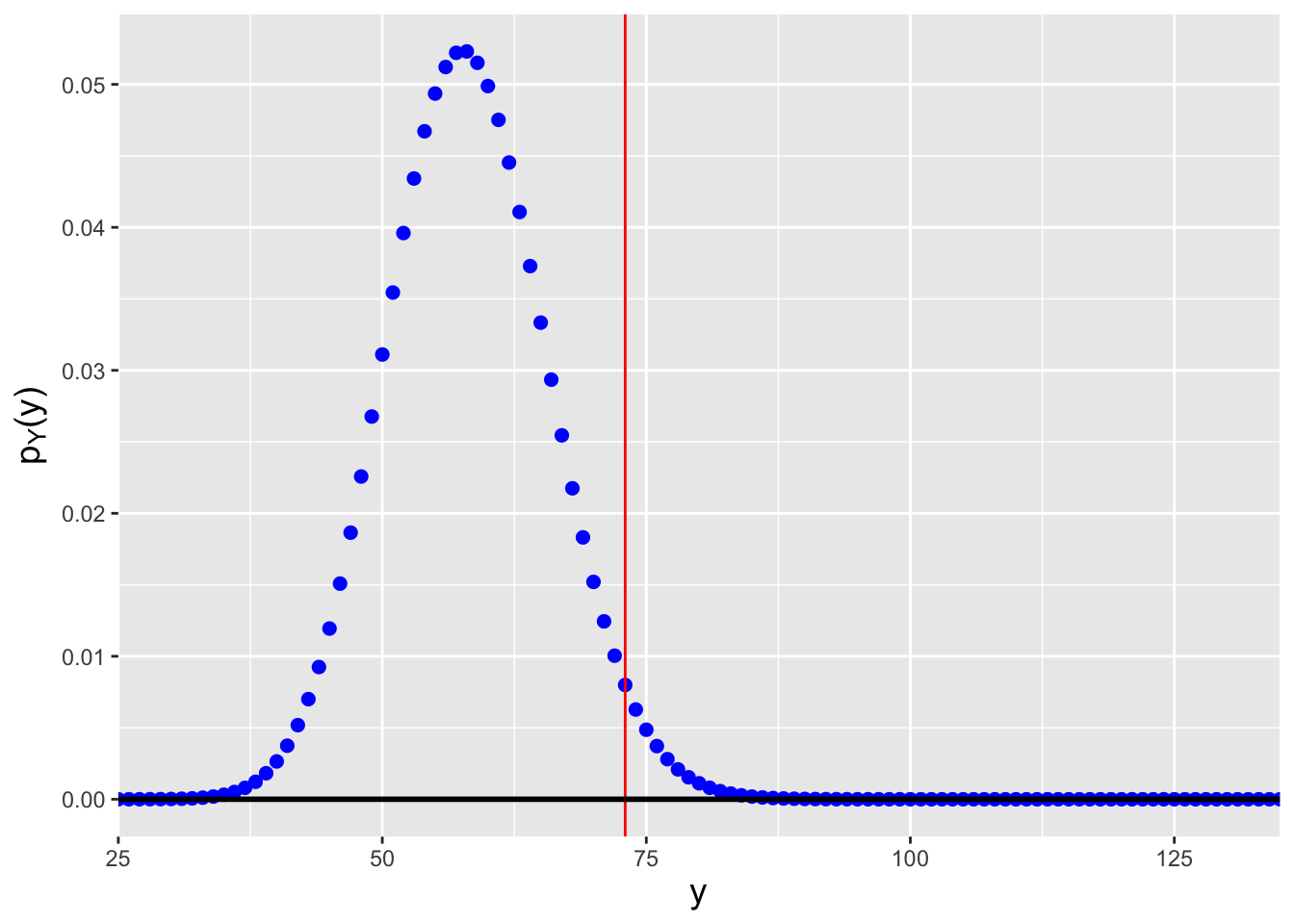

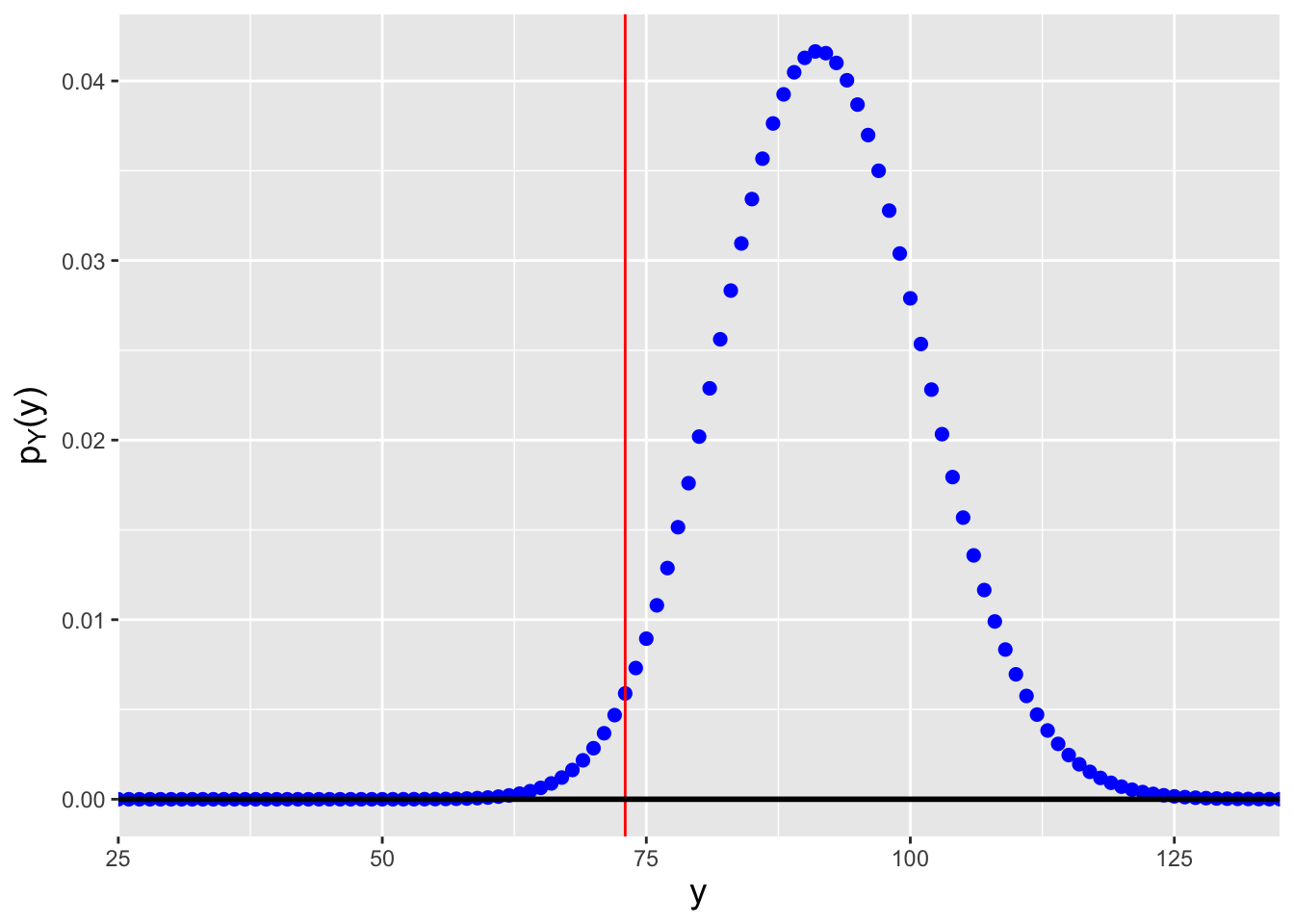

uniroot(). The latter converts the interval bounds from being ones on \(n\lambda\) to being ones on \(\lambda\) itself. The interval is \([\hat{\lambda}_L,\hat{\lambda}_U] = [5.72,9.18]\), which overlaps the true value of 8. (See Figure 4.5.) Note that the interval over which we search for the root is [0.001,10000], which is (effectively) the range of possible values for \(\lambda\). (Recall that \(\lambda > 0\), hence the small but non-zero lower bound forinterval.)

Figure 4.5: Probability mass functions for Poisson distributions for which (left) \(n\lambda=57.2\), and (right) \(n\lambda=91.8\). We assume that we observe \(y_{\rm obs} = \sum_{i=1}^n x_i = 73\) events in total and that we want to construct a 95% confidence interval for \(\lambda\). \(n\lambda=57.2\) is the smallest value of \(n\lambda\) such that \(F_Y^{-1}(0.975) = 73\), while \(n\lambda=91.8\) is the largest value of \(n\lambda\) such that \(F_Y^{-1}(0.025) = 73\).

In Chapter 3, we discussed how when we work with discrete sampling distributions, the coverage of the confidence intervals that we construct, given a value \(\theta = \theta_o\), will be equal to the probability of sampling a datum in the acceptance region given a null hypothesis \(H_o : \theta = \theta_o\), and thus the coverage will be, in general, \(> 1-\alpha\). Let’s verify that that is the case here.

# here, lambda = 8

y.rr.lo <- qpois( alpha/2, lambda=lambda)

y.rr.hi <- qpois(1-alpha/2, lambda=lambda)

round(sum(dpois(y.rr.lo:y.rr.hi, lambda=lambda)), 3)## [1] 0.969If \(\lambda = 8\), the coverage of our interval estimates is 96.9%.

4.6.2 Death-by-Horse-Kick: Confidence Interval

Previously, we determined that a point estimate for the expected number of deaths from horse kicks in any one Prussian army corps during any one year is \(\hat{\lambda} = 0.61\). Here, we determine a 95% two-sided interval estimate for \(\lambda\).

X <- c(rep(0,109), rep(1,65), rep(2,22), rep(3,3), rep(4,1))

n <- length(X)

alpha <- 0.05

f <- function(nlambda, y.obs, q)

{

ppois(y.obs, lambda=nlambda) - q

}

round(uniroot(f, interval=c(0.001,10000), y.obs=sum(X)-1, 1-alpha/2)$root/n, 3)## [1] 0.507## [1] 0.728The 95% confidence interval is \([0.507,0.728]\).

4.6.3 Death-by-Horse-Kick: Nonparametric Bootstrap Confidence Interval

The bootstrap, introduced in Efron (1979), uses the observed data themselves to build up empirical sampling distributions for statistics. (Note that here we are specifically referring to the nonparametric bootstrap procedure. Other bootstrap procedures exist; see, e.g., Hesterberg 2015 and references therein.) Let’s suppose we are handed the following data: \[ \mathbf{X} = \{X_1,X_2,\ldots,X_n\} \overset{iid}{\sim} P \,, \] where the distribution \(P\) is unknown. Now, let’s suppose further that from these data we compute a statistic: a single number. How can we build up an empirical sampling distribution from a single number? The answer is to repeatedly resample the data we observe, with replacement. For instance, if we have as data the numbers \(\{1,2,3\}\), a bootstrap sample might be \(\{1,1,3\}\) or \(\{2,3,3\}\), etc. Every time we resample the data, we compute the statistic we are interested in and record its value. Voila: we have an empirical sampling distribution. And if we can link the elements of that sampling distribution to a population parameter, we can immediately write down a confidence interval. For instance, if we have the \(n_{\rm boot}\) statistics \(\{\bar{X}_1,\ldots,\bar{X}_k\}\), we can put bounds on the population mean \(\mu\): \[\begin{align*} \hat{\mu}_L &= \bar{X}_{\alpha/2} \\ \hat{\mu}_U &= \bar{X}_{1-\alpha/2} \,, \end{align*}\] where \(\alpha/2\) and \(1-\alpha/2\) represent sample percentiles, e.g., the 2.5\(^{\rm th}\) and 97.5\(^{\rm th}\) percentiles.

What is a 95% two-sided bootstrap confidence interval on the expected number of deaths by horse kick per Prussian army corps per year?

# We are utilizing the same variable X defined above

n.boot <- 10000

x.bar <- rep(NA, n.boot)

for ( ii in 1:n.boot ) {

s <- sample(length(X), length(X), replace=TRUE)

x.bar[ii] <- mean(X[s])

}

round(quantile(x.bar, probs=c(0.025, 0.975)), 3)## 2.5% 97.5%

## 0.505 0.720The estimated interval is \([0.505,0.725]\). This is on par with what we observe above, but we will note that in general the nonparametric bootstrap procedure suffers from what Hesterberg (2015) dubs a “narrowness bias”: the coverage of its intervals is smaller than \(1-\alpha/2\) for small samples, converging to \(1-\alpha/2\) as \(n \rightarrow \infty\). (We have \(n = 200\) data here, so the narrowness bias is effectively moot.) When we can propose a plausible distribution for our data, we should do so, as we will generate more meaningful confidence intervals (even if simulations are involved) than if we lazily fall back upon bootstrapping.

4.6.4 Bootstrap Sample: Proportion of Observed Data

Let’s assume that we sample \(n\) iid data from some distribution \(P\). When we create a bootstrap sample of these data, some of the observed data appear multiple times, while other data do not appear at all. What is the average proportion of observed data in any given bootstrap sample?

Let \(i\) be the index of an arbitrary datum, where the indices are \(\{1,2,\ldots,n-1,n\}\). Let \(X\) be the number of times \(i\) is chosen when we construct a bootstrap sample of size \(n\). We can see immediately that data sampling is a series of Bernoulli trials (i.e., either we pick a particular datum, with probability \(1/n\), or we do not), so \(X \sim\) Binom(\(n, 1/n\)). The probability of observing a particular datum one or more times, \(P(X \geq 1)\), then represents the average proportion of observed data in a bootstrap sample: \[ P(X \geq 1) = 1 - P(X = 0) = 1 - (1-1/n)^n \,, \] which, as \(n \rightarrow \infty\), approaches \(1-1/e = 0.632\). Thus, for a sufficiently large sample, 63.2% of the observed data will appear at least once in a bootstrapped dataset.

4.6.5 Death-by-Horse Kick: Simulation-Based Confidence Interval

Let’s assume that we do not know that the sampling distribution for the sum of \(n\) iid Poisson random variables is Poisson(\(n\lambda\)), but that we can assume each datum is itself sampled according to a Poisson distribution. To determine a confidence interval for \(\lambda\), then, we can utilize numerical simulations, to estimate empirical sampling distributions for \(Y\) given candidate values of \(\lambda\), and determining those values of \(\lambda\) that make \(F_Y(y_{\rm obs} \vert \lambda)\) (at least approximately) equal to 0.025 and 0.975.

Let’s first build up the code that we would need to construct the empirical cumulative distribution function:

X <- matrix(rpois(n*num.sim, lambda=lambda), nrow=num.sim)

Y <- apply(X, 1, function(x){sum(x)}) # we still know the sum is sufficient

sum(Y <= y.obs)/num.simOn the first line, we create

num.simseparate datasets given a parameter value, and store them row-by-row in a matrix. On the second line, we useR’sapply()function, row-by-row (as indicated by the argument1), to determine the statistic value for each dataset. Then, on the third line, we determine the proportion of statistic values that are less than or equal to \(y_{\rm obs}\): this is the empirical cumulative distribution function value \(\hat{F}_n(y_{\rm obs} \vert a)\). We then find the value of \(a\) for which \(\hat{F}_n(y_{\rm obs} \vert a) - q = 0\) usinguniroot().

Let’s put this all together in an example where \(n = 5\) and \(y_{\rm obs} = 10\):

# We utilize the same data vector X defined above

n <- length(X)

y.obs <- sum(X)

alpha <- 0.05

# Note: we are generating data with lambda, so the CI is for lambda, not n*lambda

f <- function(lambda, n, y.obs, q, num.sim=1000000, seed=236)

{

set.seed(seed)

X <- matrix(rpois(n*num.sim, lambda=lambda), nrow=num.sim)

Y <- rowSums(X) # equivalent to apply(X, 1, function(x){sum(x)})

sum(Y <= y.obs)/num.sim - q

}

round(uniroot(f, c(0.001, 1000), n=n, y.obs=y.obs-1, q=1-alpha/2, num.sim=100000)$root, 3)## [1] 0.507## [1] 0.728Our simulation-based 95% confidence interval for \(\lambda\) is [0.507,0.728], which is equivalent to the one determined using the fact that the sum of the data are Poisson-distributed with parameter \(n\lambda\). To be clear: simulation-based confidence intervals are exact to within machine tolerance (the fact that

sum(Y <= y.obs)/num.simandqcan differ by \(\sim 10^{-4}\) by default) and any uncertainty in the value of the empirical cdf \(\hat{F}_n(y_{\rm obs} \vert \lambda)\) due to our use of a finite number of simulated datasets,num.sim. Regarding the second point: the empirical cdf is an estimate, i.e., even if we hold \(\lambda\) constant,sum(Y <= y.obs)is a random variable whose value can change as we change the random number generator seed value. Let’s denote the sum as \(S \vert \lambda\), the total number of simulated datasets as \(k\), and the true cdf value (given \(y_{\rm obs}\)) as \(p\). Then \[\begin{align*} S \vert \lambda \sim \text{Binomial}(k, p) \,. \end{align*}\] The standard error for the empirical cdf is thus \[\begin{align*} \sqrt{V\left[\frac{1}{k}(S \vert \lambda)\right]} = \sqrt{\frac{p(1-p)}{k}} \,. \end{align*}\] If \(k = 10^5\) and, e.g., \(p = 0.025\), the standard error is \(\sim 10^{-4}\). Combining this value with the tolerance ofuniroot(), we expect the uncertainties of our interval bound estimates to be \(\sim 10^{-4}\). (Particularly if we utilize a larger number of simulated datasets. Above, we setnum.simto a smaller value because, ultimately, we multiply this value by \(n\), and since \(n = 200\), we need to be mindful to not utilize too much computer memory and to not make the computation time too long. Eachuniroot()call in the code given here generates results in \(\lesssim\) 10 CPU seconds on a standard desktop or laptop computer.)

4.6.6 Zero-Truncated Poisson Expected Counts: Simulation-Based Confidence Interval

Earlier in this chapter, we derived the moment-generating function for the zero-inflated Poisson distribution and wrote down the mgf for the zero-truncated Poisson distribution, and indicated that we could use neither of them to determine the sampling distribution of, e.g., the sample sum given \(n\) iid random variables. As indicated in Figure 4.4, when we do not know the sampling distribution of \(Y\), but we do know the distribution for each individual datum, we can utilize simulations to derive exact confidence intervals. We will demonstrate that here for the zero-truncated distribution.

Let’s assume that we conduct an experiment in which we gather the following data:

## [1] 2 4 2 3 2 3 5 3 3 1We decide that we wish to construct a 95% lower bound on \(\lambda\). The reader can verify that a sufficient statistic for \(\lambda\) is \(Y = \sum_{i=1}^n X_i\), and that \(E[Y]\) increases with \(\lambda\) (meaning that \(q = 1-\alpha = 0.95\)). Given this information and the simulation framework laid out in the last example, determining the lower bound is straightforward:

n <- length(X)

y.obs <- sum(X)

alpha <- 0.05

f <- function(lambda, n, y.obs, q, num.sim=1000000, seed=236)

{

set.seed(seed)

X <- matrix(rztpois(n*num.sim, lambda=lambda), nrow=num.sim)

Y <- rowSums(X) # equivalent to apply(X, 1, function(x){sum(x)})

sum(Y <= y.obs)/num.sim - q

}

round(uniroot(f, c(0.001, 1000), n=n, y.obs=y.obs, q=1-alpha)$root, 3)## [1] 1.848The 95% lower bound on \(\lambda\) is 1.848.

4.7 Hypothesis Testing

What to take away from this section:

While the Neyman-Pearson lemma states that a ratio of likelihood functions constitutes the most powerful test of the simple hypotheses \(H_o : \theta = \theta_o\) and \(H_a : \theta = \theta_a\), we cannot extend that result to state a most powerful test of composite hypotheses.

A commonly used test of composite hypothesis is the likelihood ratio test, or LRT.

If we are working with a member of the exponential family of distributions, then to carry out the LRT, we should simply conduct a hypothesis test in the manner described in Chapters 1 and 2 while utilizing a sufficient statistic for \(\theta\).

Given a single vector of Poisson data \(\{X_1,\ldots,X_m\}\), where \(\sum_{i=1}^m X_i = k\), and a null hypothesis that the expected data proportions across the bins are \(\{p_{1,o},\ldots,p_{m,o}\}\), we can carry out a set of \(m\) separate Poisson-based hypothesis tests and aggregate the \(m\) \(p\)-values into a single \(p\)-value.

Recall: a hypothesis test is a framework to make an inference about the value of a population parameter \(\theta\). The null hypothesis \(H_o\) is that \(\theta = \theta_o\), while possible alternatives \(H_a\) are \(\theta \neq \theta_o\) (two-tail test), \(\theta > \theta_o\) (upper-tail test), and \(\theta < \theta_o\) (lower-tail test). For, e.g., a one-tail test, we reject the null hypothesis if the observed test statistic \(y_{\rm obs}\) falls outside the bound given by \(y_{RR}\), which is a solution to the equation \[ F_Y(y_{RR} \vert \theta_o) - q = 0 \,, \] where \(F_Y(\cdot)\) is the cumulative distribution function for the statistic \(Y\) and \(q\) is an appropriate quantile value that is determined using the hypothesis test reference table introduced in section 17 of Chapter 1. Note that the hypothesis test framework only allows us to make a decision about a null hypothesis; nothing is proven.

In Chapter 3, we built upon the framework described above by introducing the Neyman-Pearson lemma. This result allows us to bypass the “guesswork” that goes into selecting a hypothesis test statistic, by defining the most powerful test of a simple null hypothesis versus a simple specified alternative.

Recall: when we test the simple hypotheses \(H_o: \theta = \theta_o\) versus \(H_a: \theta = \theta_a\), the Neyman-Pearson lemma allows us to state that the hypothesis test with maximum power has a rejection region of the form \[ \frac{\mathcal{L}(\theta_o \vert \mathbf{x})}{\mathcal{L}(\theta_a \vert \mathbf{x})} < c(\alpha) \,, \] where \(c(\alpha)\) is a constant whose value depends on the specified Type I error \(\alpha\). When we sample data from an exponential-family distribution, we would simply determine a sufficient statistic \(Y\), and develop a hypothesis test as we have done previously using that statistic (or a function of it), assuming we know or can assume its sampling distribution. If the rejection region does not depend on \(\theta_a\), then the test is said to be a uniformly most powerful (UMP) test.

In an example, we demonstrate how to apply the NP lemma to construct a hypothesis test for the Poisson parameter \(\lambda\), given a sample of \(n\) iid data. Here we describe a more general hypothesis test framework, dubbed the likelihood ratio test (or LRT).

“Wait…the NP lemma had a likelihood ratio. How is the LRT different?”

That is a good question. It differs in how we specify the null and alternative hypotheses: \[ H_o: \theta \in \Theta_o ~~\mbox{vs.}~~ H_a: \theta \in \Theta_o^c \,, \] where \(\Theta_o\) (“capital theta naught”) represents a set of possible null values for \(\theta\), while \(\Theta_o^c\) is the complement of that set. For instance, for tests involving the Poisson parameter \(\lambda\), \(\Theta_o\) could be \(\lambda \in [5,10]\), so that \(\Theta_o^c\) is \(\lambda < 5\) or \(\lambda > 10\). (Thus the null and alternative hypotheses can both be composite hypotheses, as opposed to simple ones.) Let \(\Theta = \Theta_o \cup \Theta_o^c\), i.e., the union of the null and alternative sets. The rejection region for the LRT is \[ \lambda_{LR} = \frac{\mbox{sup}_{\theta \in \Theta_o} \mathcal{L}(\theta \vert \mathbf{x})}{\mbox{sup}_{\theta \in \Theta} \mathcal{L}(\theta \vert \mathbf{x})} < c(\alpha) \,, \] where, like it is in the context of the NP lemma, \(c(\alpha)\) is a constant that depends on the specified Type I error \(\alpha\).

“Since the LRT is more general, why would we ever utilize the NP lemma?”

That is another good question.

The primary point to make is that when we construct a test of two simple hypothesis within the framework of the NP lemma, we know that we are constructing the most powerful test of those hypotheses. On the other hand, while the LRT is generally a powerful test, given the composite nature of one (or both) of the hypotheses it comes with no guarantee of being the most powerful test. For instance, perhaps an alternative like the score test (also known as the Lagrange multiplier test) or the Wald test would provide the most powerful test in a given analysis situation, although one should keep in mind that it is usually the case that the power of these tests are effectively identical. A reader who is interested in learning more about them should look at section 10.3 of Casella and Berger (2002) and at Buse (1982), while noting that the latter implicitly describes two-tail tests only.

How does using the likelihood ratio test play out in practice? Let’s look at some possible use cases. For simplicity, below we will assume that we have sampled data from an exponential-family distribution, and thus that we can reduce the data to, e.g., a single-number sufficient statistic for \(\theta\). If we were to sample data according to a non-exponential-family distribution, we could, as we did in Chapter 3, fall back upon the use of simulations to determine the empirical distribution of the statistic \(\lambda_{LR}\) (or \(\log\lambda_{LR}\)) under the null and use that distribution to estimate, e.g., the \(p\)-value.

- We have one freely varying parameter \(\theta\) and we wish to test \(H_o : \theta = \theta_o\) versus \(H_a : \theta \neq \theta_o\).

In this situation, we identify a sufficient statistic (or a one-to-one function of it) for which we know the sampling distribution and use it to define a two-tail test as we have done previously, utilizing the hypothesis test reference tables.

- We have one freely varying parameter \(\theta\) and we wish to define a one-sided alternative hypothesis, e.g., \(H_a : \theta < \theta_o\).

Here, the null hypothesis would be the complement of \(H_a\), i.e., \(H_o : \theta \geq \theta_o\). The distribution of the ratio \[ \lambda_{LR} = \frac{\mbox{sup}_{\theta \geq \theta_o} \mathcal{L}(\theta \vert \mathbf{x})}{\mathcal{L}(\hat{\theta}_{MLE} \vert \mathbf{x})} \] is thus not uniquely specifiable: it is a function of \(\theta\), and our null does not state a specific value for \(\theta\). So how would we determine \(y_{\rm RR}\)? It turns out the most conservative rejection-region boundary we can specify, which would be the one that is associated with the highest \(p\)-value we can observe, is the one that we would derive assuming that \(\theta = \theta_o\)…which means that to define an LRT, we would simply assume \(\theta_o\) as our null value, identify a sufficient statistic (or a one-to-one function of it) for which we know the sampling distribution, and define a one-tail test as we have done previously.

At this point, it is important to note that the way that we have defined one-tail hypothesis tests in previous chapters (e.g., \(H_o : \theta = \theta_o\) versus \(H_a : \theta < \theta_o\)) is the conventional way to define them but not necessarily the correct way. A more precise definition of the null would indicate that it is composite (e.g., \(H_o : \theta \geq \theta_o\)), but for the reason given above we would still insert \(\theta_o\) itself into the \(p\)-value equation, etc. For further discussion, see Jamshidian & Jamshidian (2026).

- We have two (or more) freely varying parameters, or alternatively, we have one freely varying parameter for a non-exponential-family distribution.

In this context, we will have joint sufficient statistics that are sampled according to a multivariate sampling distribution that will be difficult, though not necessarily impossible, to work with directly. In such a situation, we have historically fallen back on Wilks’ theorem, although where possible we should utilize simulations like we did in the context of the NP lemma (as we will demonstrate below). (That’s because Wilks’ theorem utilizes the large-sample approximation that the data are jointly distributed according to a multivariate normal distribution. As this is only an approximation, simulations will yield superior results in terms of precision and accuracy.)